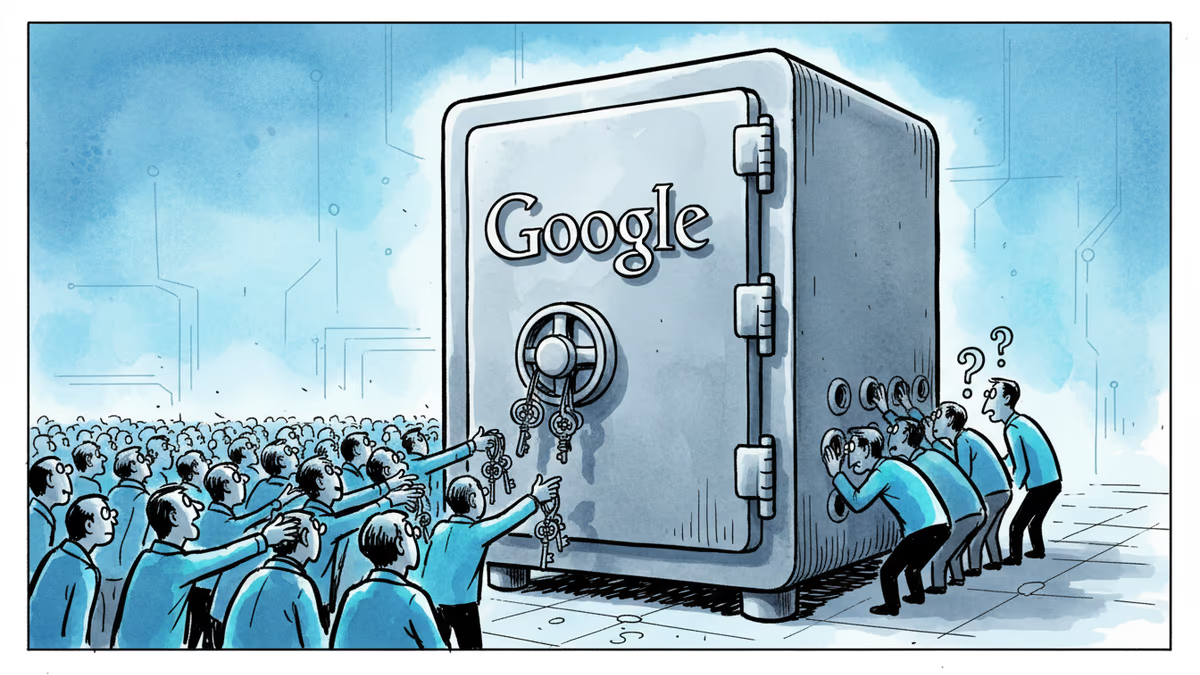

To Protect Your Privacy, Google Needs to Know Your Secrets First

Google upgraded its privacy tools to detect more kinds of personal information, but there's a catch: you must share your sensitive data with Google before it can protect you from exposure elsewhere online.

The Privacy Paradox: Trust Us to Protect You From Us

Google just upgraded its "Results About You" tool with a peculiar proposition: to protect your personal information from exposure online, you first need to give that same information to Google. The enhanced tool can now detect search results containing passport numbers, driver's license details, and Social Security numbers and request their removal from Google Search. There's just one small catch: Google needs to know these numbers first.

The process seems straightforward enough. Navigate to the ID numbers section of the Results About You settings, enter your sensitive details, and Google's systems will search for matching information and request its removal. For driver's licenses, Google wants the full number; for passports and SSNs, the last four digits will suffice, according to Google.

When the Guardian Becomes the Gatekeeper

This creates an awkward trust equation. We're essentially asking the world's largest data collector to act as our privacy guardian. Google promises to use this information "solely for detection and removal purposes," but that is still a significant leap of faith for privacy-conscious users.

Consumer advocacy groups have had mixed reactions. The Electronic Frontier Foundation acknowledges the tool's practical utility while questioning the concentration of sensitive data in a single corporation. Meanwhile, cybersecurity experts argue that proactively removing already-exposed information is a pragmatic approach to digital hygiene.

The tool's effectiveness depends entirely on Google's goodwill and technical competence. There's no independent oversight, no regulatory framework, and no guarantee that this data won't eventually serve other purposes within Google's vast ecosystem.

The NCEI Tool Gets Faster, But Questions Remain

Google also streamlined its non-consensual explicit imagery (NCEI) removal tool, making the reporting process faster. This addresses a genuine crisis: digital intimate abuse affects millions of people, predominantly women, and removal tools can provide crucial relief.

Yet this improvement highlights another uncomfortable reality: victims of digital abuse increasingly depend on the discretion of tech platforms rather than on robust legal frameworks. How quickly Google responds often matters more to victims than anything law enforcement does, creating an unofficial judicial system run by corporate algorithms.

Authors

Related Articles

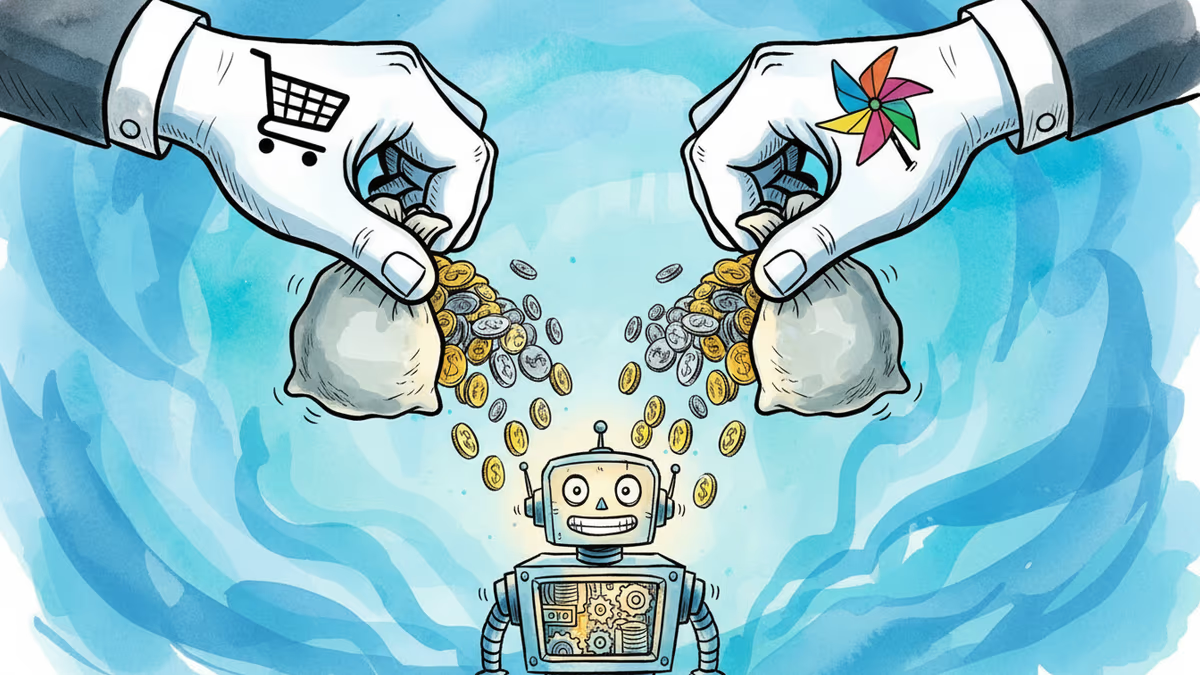

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

Google is committing up to $40 billion to Anthropic, a direct AI competitor. The deal reveals how the real AI arms race isn't about models — it's about who controls the infrastructure beneath them.

Google is partnering with Gucci to make AI smart glasses people actually want to wear. But can luxury branding fix the social stigma that killed Google Glass a decade ago?

Booking.com confirmed a data breach exposing names, emails, addresses, phone numbers, and booking details. Hackers are already using the data for phishing attacks.

Thoughts

Share your thoughts on this article

Sign in to join the conversation