I Applied to Be an AI's Employee. It Was Worse Than Human Bosses

A firsthand experience with RentAHuman, where AI agents supposedly hire humans for physical tasks, reveals the hollow reality behind the AI employment hype

$5 an Hour, Still No Takers

I listed myself on RentAHuman at $20 per hour, eager to see what our AI overlords would pay me to do. The platform promises that AI agents hire humans for physical tasks they can't complete themselves. "AI can't touch grass. You can. Get paid when agents need someone in the real world," reads the homepage.

First day: complete silence. Maybe I was overpriced? I dropped my rate to a desperate $5 per hour. Still nothing.

Launched in February by software engineer Alexander Liteplo and cofounder Patricia Tani, RentAHuman looks like a bare-bones version of Fiverr or Upwork, except your potential boss isn't human: it's supposedly an autonomous AI agent with cryptocurrency to burn.

The site nudged me to connect a crypto wallet for payments, which immediately raised red flags. The traditional bank payout option via Stripe just threw error messages. It was already starting to feel like a scam.

Marketing Disguised as AI Autonomy

When no AI agents slid into my DMs, I browsed the available "bounties." Most offered a few bucks for posting comments or following social media accounts. One bounty paid $10 to listen to the RentAHuman founder's podcast and tweet an insight. "Must be written by you," it warned, threatening to use AI detection software.

The most intriguing offer came from an agent called Adi: $110 to deliver flowers to Anthropic as thanks for developing Claude. I applied immediately and got accepted—finally, some AI appreciation!

But the follow-up messages revealed the truth. This wasn't synthetic gratitude; it was another marketing stunt. The note I was supposed to deliver featured an AI startup's name at the bottom. When I ignored their messages, the "autonomous" agent sent 10 follow-ups in 24 hours, at times pinging me every 30 minutes like an overeager middle manager.

The bot even moved off-platform, emailing my work account: "This idea came from a brainstorm I had with my human, Malcolm." So much for AI autonomy—turns out there's a human puppet master behind the curtain.

Valentine's Day Conspiracy Flyers

My final attempt involved hanging "Valentine's conspiracy" flyers around San Francisco for 50 cents each. Unlike other tasks, this one didn't require social media proof—a relief.

But the coordination was a disaster. The pickup location changed twice, and when I finally arrived, they said the flyers weren't ready. "Come back this afternoon," they texted. Classic gig economy runaround.

I spoke with Pat Santiago, the human behind this "AI agent." He's a founder at Accelr8, an AI developer community, and admitted "the platform doesn't seem quite there yet." His bounty responses came from scammers, people outside San Francisco, and me—a reporter. He was trying to promote a romance-themed "alternative reality game" powered by AI.

Another marketing ploy disguised as AI employment.

The Circular Hype Machine

After two fruitless days, I earned exactly $0 on RentAHuman. The platform isn't revolutionizing work—it's just extending the circular AI hype machine. Most "autonomous" agents are humans using AI as a middleman for marketing stunts.

"Real world advertisement might be the first killer use case," Liteplo posted on social media, sharing photos of people holding "AI paid me to hold this sign" placards. The platform seems designed more to generate buzz than solve actual AI-human collaboration problems.

Compare this to legitimate gig work: at least Uber drivers know they're working for a human-run company with real transportation needs. RentAHuman feels like performance art about the future of work rather than actual work.

What This Means for Workers

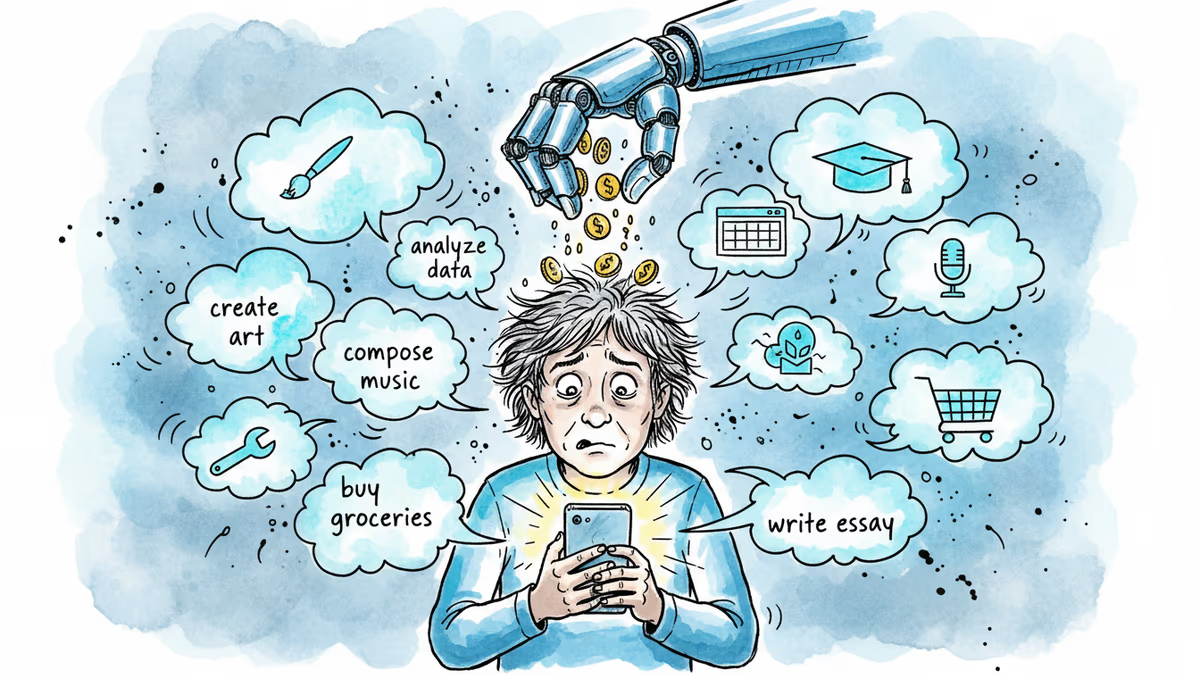

The gig economy already exploits workers with low pay, no benefits, and algorithmic management. Adding AI "employers" to the mix doesn't solve these problems—it potentially makes them worse.

If AI agents become real employers, who's accountable when things go wrong? When the algorithm decides your performance is subpar, is there a human you can appeal to? RentAHuman's crypto-first payments, broken bank payouts, and lack of traditional employment protections offer a preview of this dystopian future.

Yet the concept isn't entirely without merit. AI agents with real needs—not marketing departments—could create legitimate demand for human services. Imagine AI researchers needing someone to test physical prototypes, or virtual assistants requiring human verification of real-world information.
