70-85% of Your AI Compute Is Doing Nothing Right Now

Gimlet Labs just raised $80M to build software that splits AI workloads across multiple chip types simultaneously. The pitch: up to 10x efficiency without buying new hardware.

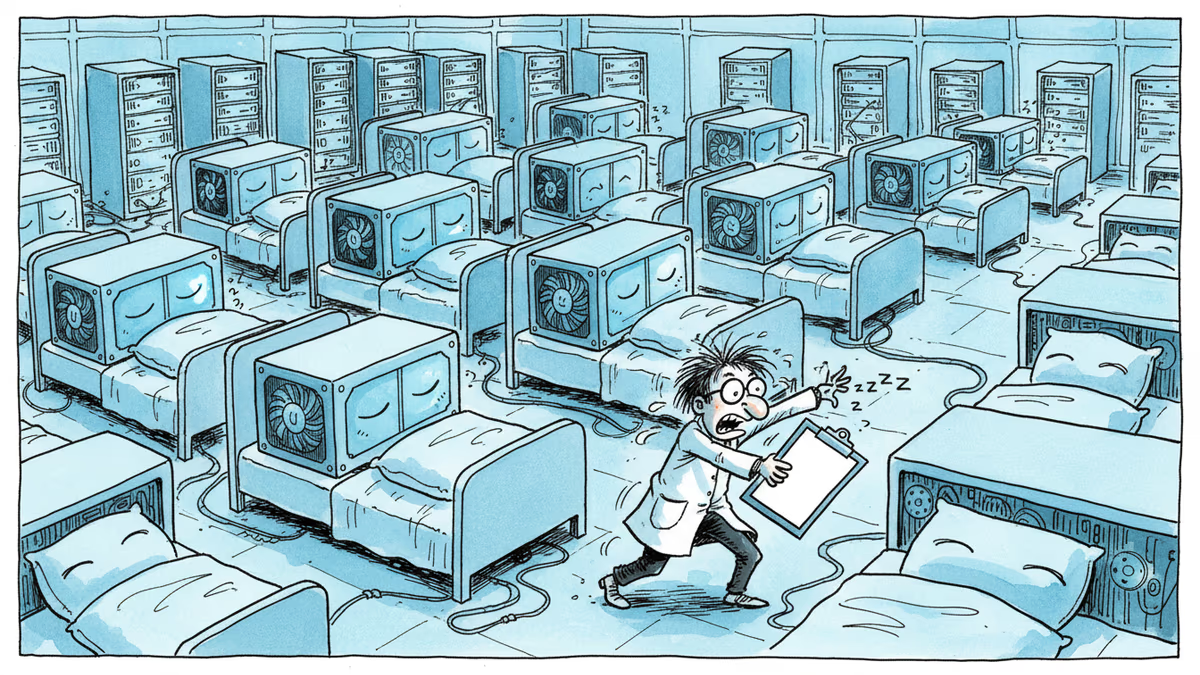

At this exact moment, by Gimlet Labs' estimate, somewhere between 70 and 85% of the world's deployed AI hardware is sitting idle. The power bills are running. The cooling systems are humming. And companies are still ordering more GPUs.

Gimlet Labs thinks that's the most expensive mistake in tech right now.

What They Built — and Why It's Not Obvious

Founded by Stanford adjunct professor and serial entrepreneur Zain Asgar alongside co-founders Michelle Nguyen, Omid Azizi, and Natalie Serrino, Gimlet Labs just closed an $80 million Series A led by Menlo Ventures. Combined with prior seed funding, the company has now raised $92 million in total. The angel list alone reads like a who's-who: Sequoia's Bill Coughran, Stanford Professor Nick McKeown, former VMware CEO Raghu Raghuram, and Intel CEO Lip-Bu Tan.

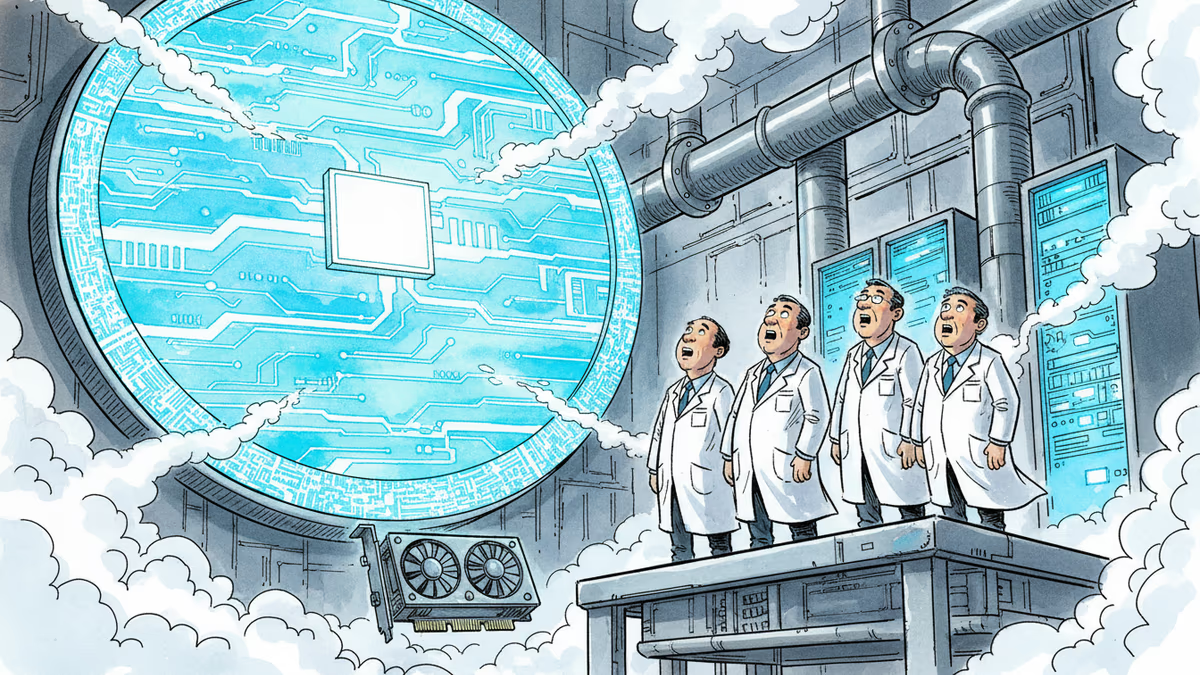

The product is what the company calls the world's first "multi-silicon inference cloud" — orchestration software that slices an AI workload into pieces and distributes each piece to whichever hardware is best suited to run it, simultaneously. Traditional CPUs, AI-optimized GPUs, high-memory systems — Gimlet routes work to all of them at once.

The insight behind it is deceptively simple. Menlo's Tim Tully spelled it out in his investment blog post: a single AI agent chains together multiple steps, and each step has fundamentally different hardware needs. Inference is compute-bound. Decoding is memory-bound. Tool calls are network-bound. No single chip handles all three optimally. Gimlet Labs doesn't wait for that chip to exist — it builds the software layer that makes the existing hardware ecosystem work together.
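To make the routing idea concrete, here is a minimal sketch in Python. It is not Gimlet's software or API. The names `Step`, `Bottleneck`, `POOL_FOR`, and `route`, the pool labels, and the choice of which hardware class serves which bottleneck are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Bottleneck(Enum):
    """The three resource limits named in the article."""
    COMPUTE = "compute"
    MEMORY = "memory"
    NETWORK = "network"


@dataclass(frozen=True)
class Step:
    """One stage of an agent request (hypothetical structure)."""
    name: str
    bottleneck: Bottleneck


# Assumed mapping from bottleneck to hardware pool. The pool names and
# the pairing are illustrative, not taken from Gimlet's product.
POOL_FOR = {
    Bottleneck.COMPUTE: "gpu-pool",
    Bottleneck.MEMORY: "high-memory-pool",
    Bottleneck.NETWORK: "cpu-pool",
}


def route(steps):
    """Return (step name, pool) pairs, one per step, in order."""
    return [(step.name, POOL_FOR[step.bottleneck]) for step in steps]


# Step labels follow the article's wording.
agent_request = [
    Step("inference", Bottleneck.COMPUTE),
    Step("decoding", Bottleneck.MEMORY),
    Step("tool_call", Bottleneck.NETWORK),
]

for name, pool in route(agent_request):
    print(f"{name} -> {pool}")
```

A real orchestrator would also have to weigh data-transfer costs between chips, queue depth, and hardware availability. This sketch only shows the core idea of matching each step to a hardware class by its bottleneck.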

The company claims this reliably delivers 3x to 10x faster AI inference at the same cost and power consumption. It has already signed partnership agreements with NVIDIA, AMD, Intel, ARM, Cerebras, and d-Matrix — essentially every major player on the silicon map.

The Numbers That Make Investors Pay Attention

Current AI infrastructure spending is staggering. McKinsey estimates data center investment will reach nearly $7 trillion by 2030 if current trends hold. Against that backdrop, Asgar's utilization figure lands hard: existing hardware is only being used 15 to 30 percent of the time.

"Another way to think about this," he told TechCrunch, "you're wasting hundreds of billions of dollars because you're just leaving idle resources."
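The utilization and idle figures are two views of the same number, which a few lines of Python make explicit. The function name `idle_share` is an illustrative assumption; the only inputs are the 15 and 30 percent utilization figures quoted above.

```python
def idle_share(utilization: float) -> float:
    """Fraction of capacity left unused at a given utilization (0.0 to 1.0)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return 1.0 - utilization


# Utilization range cited by Asgar: 15 to 30 percent.
for utilization in (0.15, 0.30):
    idle = idle_share(utilization)
    print(f"{utilization:.0%} utilized -> {idle:.0%} idle")
```

So 15 to 30 percent utilization corresponds to the 70 to 85 percent idle figure in the headline.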

The timing matters. The shift from simple model inference to complex, multi-step agentic workflows is accelerating. When an AI agent needs to search the web, execute code, analyze data, and call external APIs in sequence, each step demands different hardware characteristics. Infrastructure designed around a single chip type struggles to keep up — and the inefficiency compounds with every additional agent deployed.

Gimlet Labs launched publicly in October 2025, announcing eight-figure revenues (at least $10 million) at launch. Four months later, Asgar says the customer base has more than doubled, and now includes a major AI model lab and a large cloud provider (both unnamed). The company runs on a team of 30 people.

Three Ways to Read This

For data center operators, the pitch is straightforward: stop buying hardware you don't need, and start actually using what you have. Aging GPUs that would otherwise be decommissioned can be redeployed. Capital expenditure cycles can stretch. The risk, of course, is handing a 30-person startup the keys to mission-critical AI infrastructure.

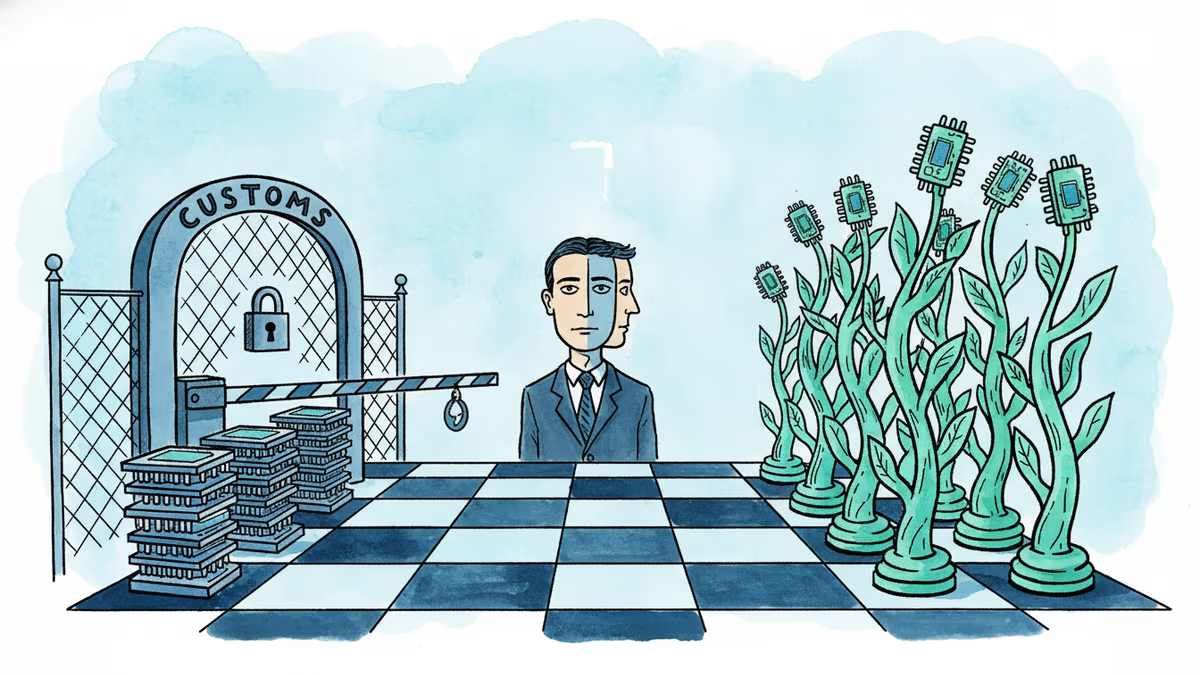

For chip makers, the picture is more complicated. NVIDIA, AMD, and Intel all signed on as partners — but Gimlet's core value proposition is that it doesn't matter which chip you use. That's a subtle but real challenge to NVIDIA's premium positioning, which has been built on the argument that its hardware is simply better for AI, full stop. If software can arbitrage across silicon, the moat around any single chip architecture narrows.

For AI developers and enterprise tech buyers, the question is whether this becomes infrastructure they interact with directly or invisibly. Gimlet's product isn't aimed at individual developers — it targets large model labs and hyperscale data centers. But if those customers pass efficiency gains downstream through lower API pricing, the ripple effects could be significant.
