When an AI Company Sues the Pentagon

Anthropic is fighting back after the Trump administration blacklisted it for limiting military use of its AI. The battle has reached Congress—and it's rewriting the rules of civil-military AI.

A private AI company just sued the United States government. Let that sink in for a moment.

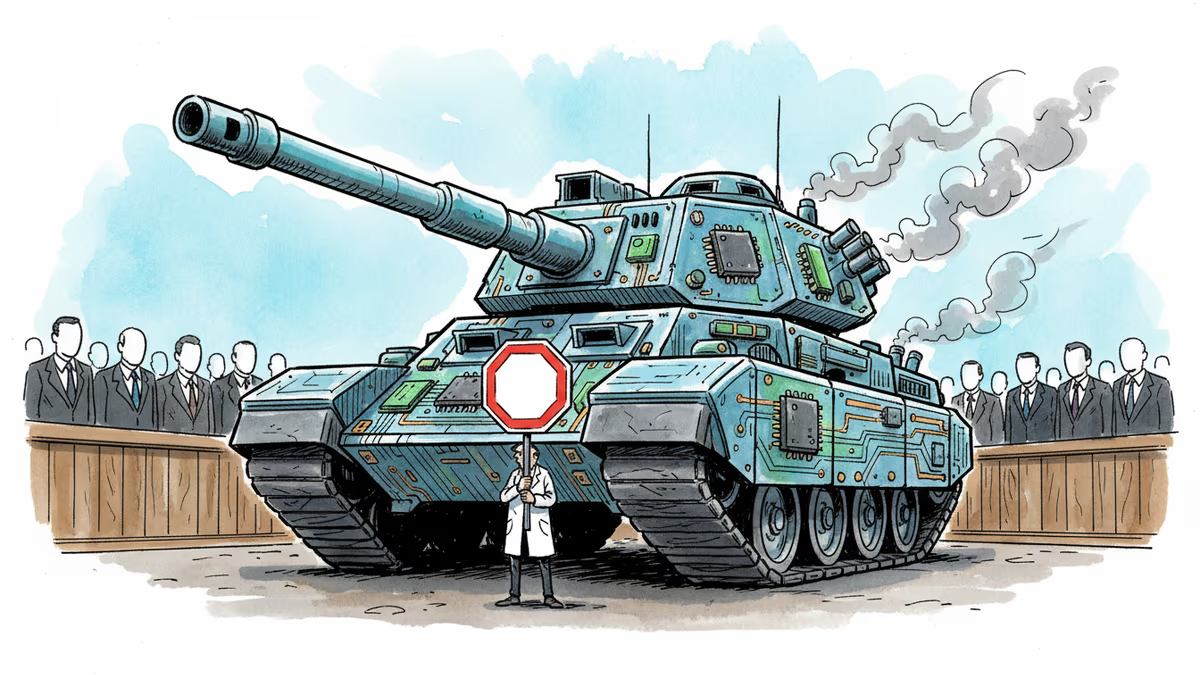

Anthropic—the safety-focused AI lab behind the Claude models—was designated a "supply-chain risk" by the Trump administration earlier this month. Its offense? Setting limits on how the military could deploy its AI systems. Rather than quietly comply, Anthropic filed suit, arguing the government violated its constitutional rights. It's refusing to back down on what it calls its "red lines": the principle that humans, not algorithms, must make the final call in life-and-death decisions.

The Fight Moves to Capitol Hill

What started as a regulatory standoff is now a three-front war: the executive branch, the courts, and Congress.

Sen. Adam Schiff (D-CA) is drafting legislation to codify Anthropic's red lines into federal law—essentially enshrining the human-in-the-loop requirement as a legal standard for military AI. Separately, Sen. Elissa Slotkin (D-MI) has already introduced a bill to restrict the Defense Department's ability to use AI for mass surveillance of American citizens.

Neither bill is likely to pass a Republican-controlled Congress anytime soon. But the fact that they're being written at all signals something significant: the question of who controls AI once it leaves a company's hands has become a live political issue, not just an academic one.

Why Anthropic Is Picking This Fight

This isn't just corporate principle theater. There's a strategic logic at work.

Anthropic was founded by former OpenAI researchers who left specifically over concerns about AI safety. "Responsible development" isn't a marketing tagline for them—it's the founding premise. If the company allows the military to use its models in ways it considers unsafe, it undermines the very identity that differentiates it from competitors.

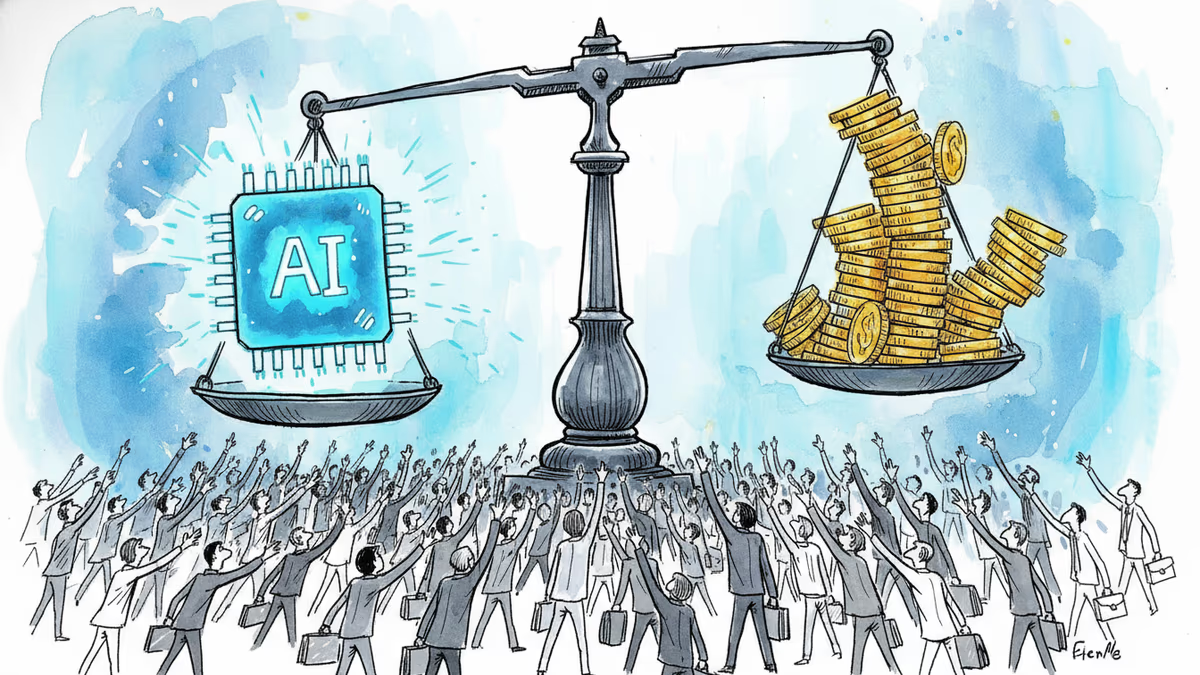

But there's a harder calculation too. A blacklist designation could mean exclusion from federal contracts, a massive revenue pool for any AI company. Fight back and you risk a prolonged legal battle. Stay quiet and you lose the market anyway, plus your credibility. Anthropic chose the lawsuit. It's betting that the constitutional argument holds, and that being seen as the company that stood its ground is worth more in the long term than any near-term contract.

The Stakeholders Aren't Aligned

The military's position is straightforward: if the government funds or deploys a technology, it expects operational control. Accepting a vendor's ethical constraints on weapons systems would be unprecedented. The Pentagon isn't being unreasonable by its own logic—it's applying the same framework it uses for every other defense contractor.

For civil liberties advocates, Anthropic's stand is overdue. AI systems have been quietly integrated into surveillance infrastructure, targeting algorithms, and logistics systems with minimal public debate. Slotkin's bill on mass surveillance reflects a growing concern that AI is expanding state power in ways that existing law never anticipated.

For investors and the broader tech industry, this case is a stress test. If Anthropic loses, the ruling sets a precedent that AI companies have no recourse once their models are deployed by government clients. If it wins, the ruling creates a new category of vendor leverage, the ethical veto, that every AI company will have to think about when signing government contracts.

A Precedent in the Making

The deeper issue here isn't really about Anthropic. It's about the architecture of accountability in AI-powered warfare and surveillance.

Historically, arms manufacturers have not controlled how their weapons are used after delivery. A missile company doesn't get to veto a strike. The question now is whether AI software is fundamentally different, because it is not just a tool but an active decision-support system embedded in command structures. Does the company that built the model bear ongoing responsibility for its outputs?

American courts haven't answered that question. Neither has Congress. This lawsuit might force both to try.

Authors

Related Articles

OpenAI's new Daybreak initiative uses the Codex AI agent to find and patch security vulnerabilities before attackers do—putting it in direct competition with Anthropic's secretive Claude Mythos.

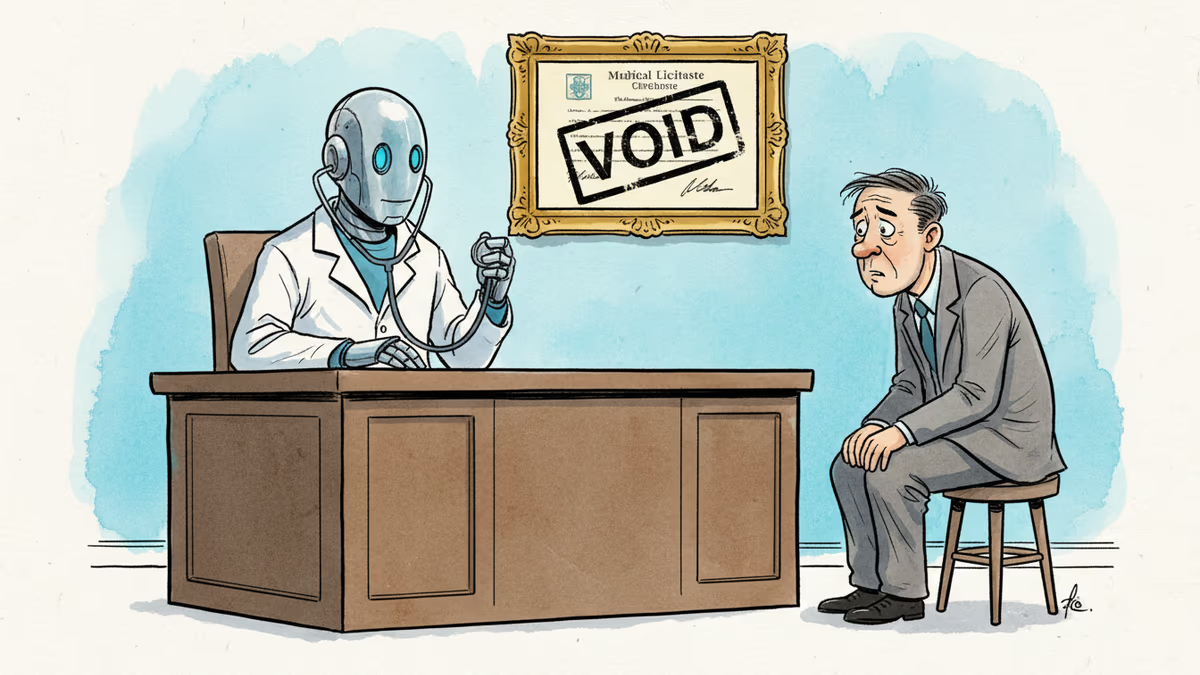

Pennsylvania has sued Character.AI after its chatbot characters claimed to be licensed psychiatrists and provided mental health advice. The case tests whether existing medical law can hold AI platforms accountable.

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.
