Robots Made Russian Soldiers Surrender. What Comes Next?

Ukraine claims ground robots and drones forced Russian soldiers to surrender with no Ukrainian soldier present. If verified, it would mark a turning point in autonomous warfare with global implications.

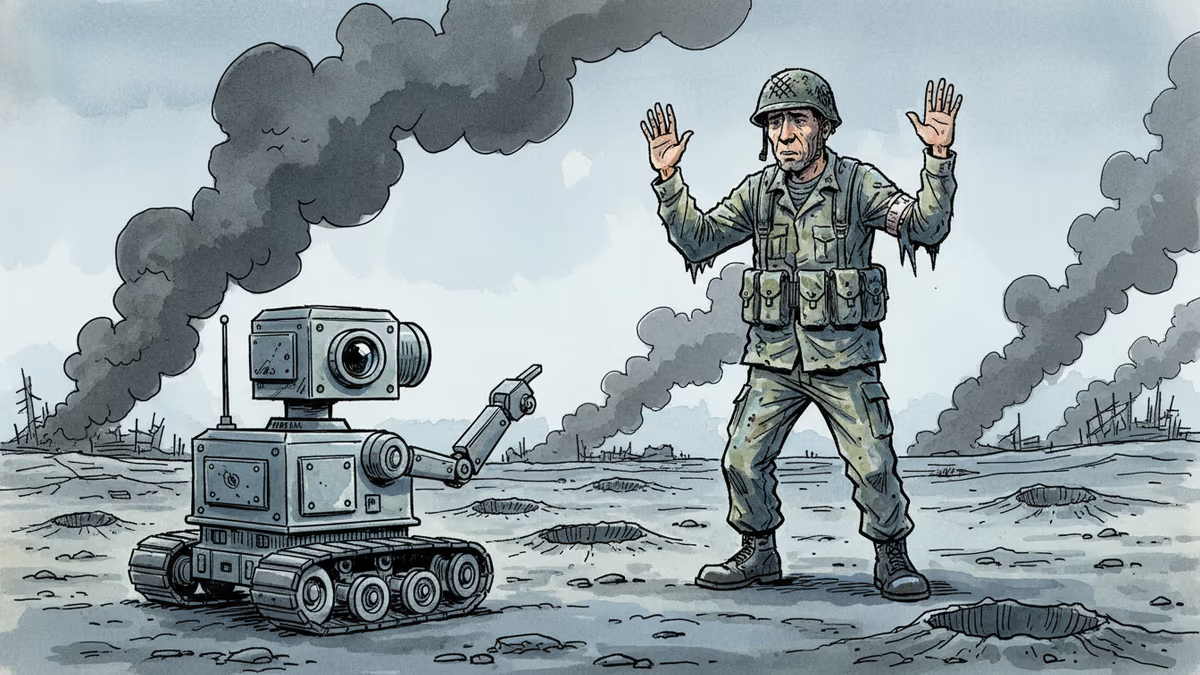

At some point last year in northeastern Ukraine, a group of Russian soldiers reportedly looked at a machine rolling toward them—and put their hands up.

What Actually Happened

Ukrainian President Volodymyr Zelenskyy has claimed that Ukrainian ground robots and drones independently overran a Russian military position and received the surrender of Russian soldiers—without a Ukrainian soldier present on the ground. The claim has not been independently verified, but Zelenskyy accompanied it with a promotional video stating that Ukraine's military robots have completed more than 22,000 missions in the past three months.

Ukraine's defense ministry separately reported a threefold increase in uncrewed ground vehicle (UGV) missions over the last five months, with more than 9,000 robotic missions conducted in March alone, according to Scripps News.

The specific incident Zelenskyy referenced appears to involve Ukraine's 3rd Separate Assault Brigade, operating in Kharkiv Oblast. The brigade described using aerial drones in combination with "kamikaze" ground robots to assault fortified Russian frontline positions. Russian soldiers reportedly abandoned the fortifications and surrendered to one of the robots. The Ukrainian government-run platform United24 released footage documenting what appears to be the same or a closely related incident.

This isn't entirely without precedent. Video evidence has previously captured individual Russian soldiers surrendering to Ukrainian drones—raising their hands to a hovering camera. A group surrendering a fortified position to a ground robot is a step further, but not an implausible one.

Why This Moment Matters

The Ukraine war has already rewritten the textbook on drone warfare. Commercially available drones costing a few thousand dollars have destroyed tanks worth millions. Both sides have poured resources into drone production, anti-drone systems, and electronic warfare. Now that dynamic is extending to the ground.

But the significance here goes beyond "robots instead of soldiers." Two things stand out.

First, the operational concept: a coordinated air-ground robot assault that clears a position without putting a single human combatant at risk on the attacking side. If replicable at scale, this would fundamentally change the calculus of attritional warfare. The side with more robots, and the industrial base to replace them, would gain an asymmetric advantage that doesn't bleed.

Second, and perhaps more striking: soldiers surrendered to a machine. Surrender is one of the oldest human rituals in warfare, a moment of profound psychological and legal weight. The fact that soldiers are now making that decision in front of a camera on a rolling chassis—not a human being—suggests that the psychological boundary between "enemy combatant" and "autonomous system" is already eroding on the battlefield, well ahead of any legal or ethical framework designed to manage it.

The View From Different Seats

For defense ministries and contractors, this is validation. The U.S., U.K., Israel, South Korea, and others have been pouring billions into autonomous ground systems. Ukraine's battlefield has functioned as an accelerated real-world testing environment—the kind no simulation can replicate. Every verified use case from this war feeds directly into procurement decisions and development roadmaps in capitals far from the front lines.

For ethicists and international lawyers, the alarm bells are louder. If an autonomous system makes targeting decisions—even partially—who bears legal responsibility when something goes wrong? The International Committee of the Red Cross has warned that existing International Humanitarian Law (IHL) was not designed with fully autonomous weapons in mind. The UN has been debating a treaty on Lethal Autonomous Weapons Systems (LAWS) for years, but binding consensus remains elusive. The battlefield is moving faster than the diplomats.

For the average soldier on either side of any future conflict, the implications are visceral. Facing a machine that doesn't tire, doesn't fear, and doesn't negotiate changes the psychological texture of combat in ways that are difficult to model in advance. Military psychologists are only beginning to study what it means for morale and decision-making when the "enemy" you're engaging has no life to threaten in return.

For the tech industry, the feedback loop is worth watching. Military robotics has historically been a proving ground for civilian applications: GPS, the internet, and drones themselves all trace back to military origins. The autonomous navigation, real-time sensor fusion, and edge AI processing being stress-tested in Ukraine today are likely to find their way into logistics, emergency response, and industrial automation tomorrow.

Open Questions

It's worth noting what we don't know. Zelenskyy's claim remains unverified by independent sources. The degree of autonomy involved—whether the robots acted on pre-programmed instructions, remote human control, or genuine machine decision-making—is unclear. "Autonomous" in military press releases often covers a wide spectrum, from fully self-directed to remotely piloted with automated targeting assistance. The distinction matters enormously, both technically and legally.

It also matters for the story we tell ourselves about what happened. A remote operator guiding a robot to accept a surrender is a remarkable tactical achievement. A robot independently deciding to accept a surrender is something categorically different—and raises questions we don't yet have institutions equipped to answer.

Related Articles

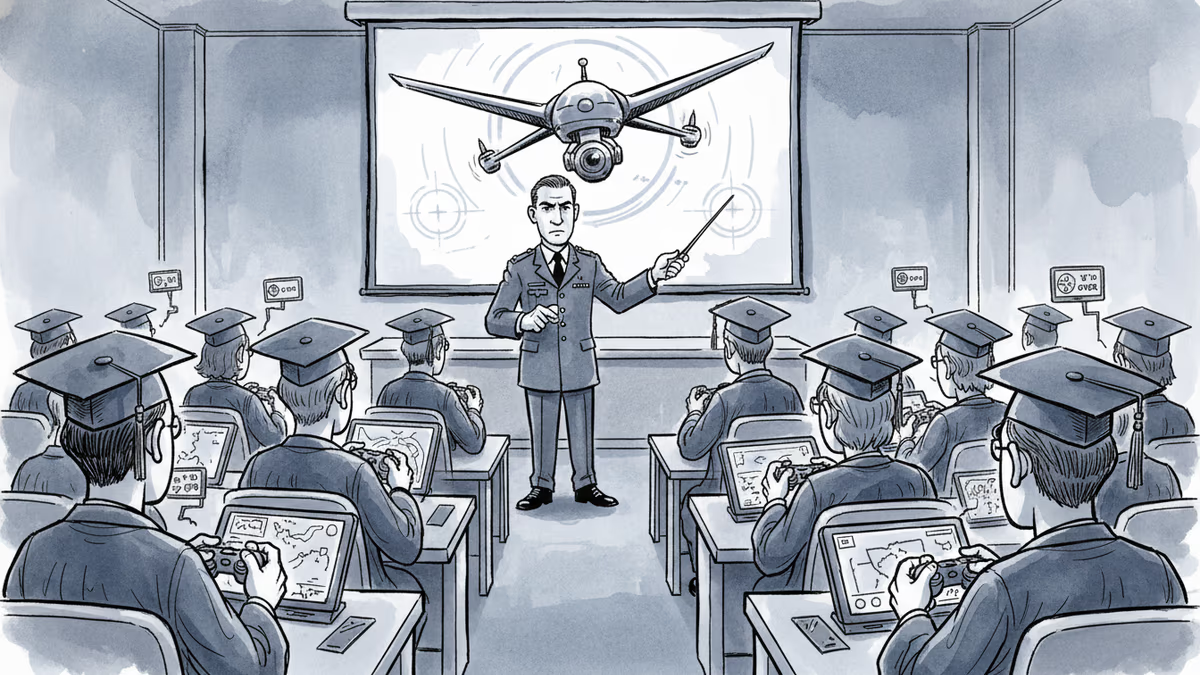

Over 270 Russian universities are offering students free tuition and up to $70,000 to serve as military drone pilots. Recruiters promise no frontline risk. The reality is more complicated.

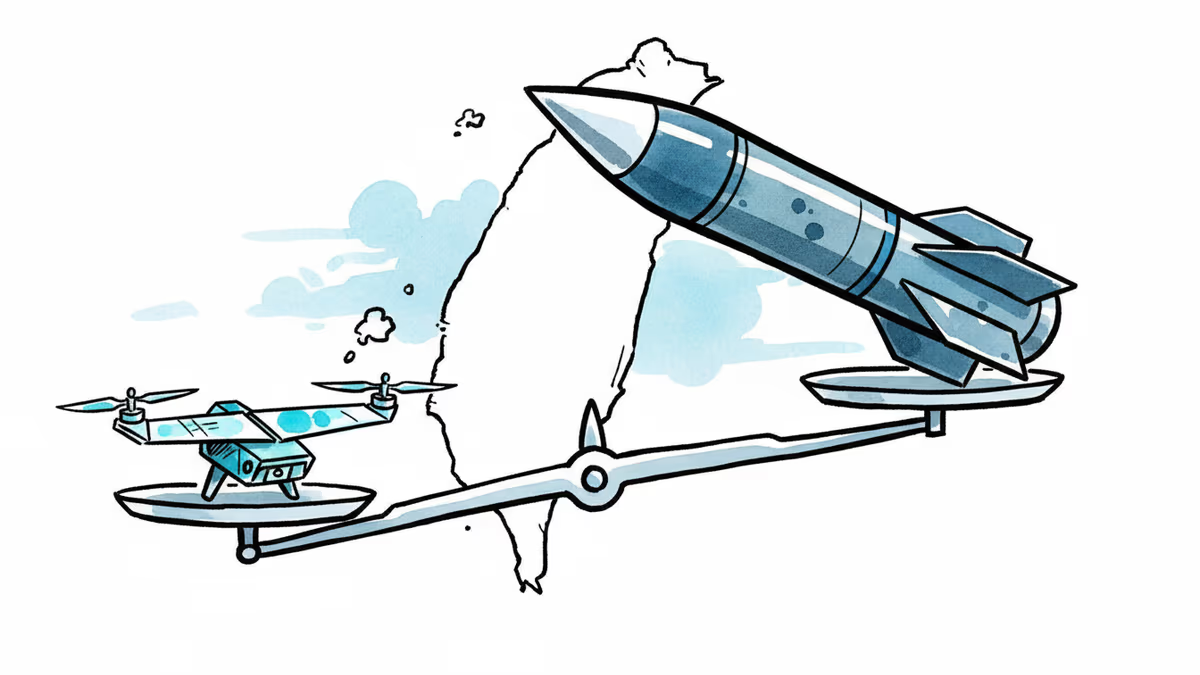

Taiwan's Thunder Tiger became the first Asian firm to win US military drone clearance with a China-free supply chain. As Trump meets Xi, the drone arithmetic reshapes defense strategy.

Russia's Plesetsk Cosmodrome has faced multiple drone attacks as Moscow races to build its own Starlink-like satellite network. What does this mean for the future of space warfare?

The US defense budget request for FY2027 includes $53.6 billion for drone and autonomous warfare—more than most nations spend on their entire military. What does this mean for global security and the future of war?
