Your Vendor's Security Cert Might Be Theater

LiteLLM ditched compliance startup Delve after credential-stealing malware hit its open source tool — and Delve itself faces allegations of generating fake audit data. What this means for third-party security trust.

Millions of developers trusted a tool that had the security certifications to prove it was safe. Last week, that tool got hit by credential-stealing malware. Then it turned out the certifications themselves might not be worth the paper they're printed on.

What Happened

LiteLLM is an open source AI gateway that lets developers route requests across models from OpenAI, Anthropic, Google, and dozens of others through a single interface. It's infrastructure-level software — the kind of tool that sits quietly underneath AI applications and handles authentication, routing, and API key management. Millions of developers use it.

Last week, the open source version of LiteLLM was compromised by credential-stealing malware. The attack vector and full scope haven't been disclosed, but the nature of the tool, which sits between developers and their AI API keys, makes any breach particularly serious. Stolen credentials in this context don't just mean one compromised account; they can cascade across every AI service a developer or company has connected.

Bad enough. But the story got more complicated.

Prior to the incident, LiteLLM had obtained two security compliance certifications through Delve, an AI compliance startup. These certifications — think SOC 2 or similar frameworks — are supposed to tell customers and partners: "This company has the controls in place to minimize security incidents." They're a cornerstone of B2B trust, especially in the enterprise software market.

Then an anonymous whistleblower alleged that Delve had been generating fake compliance data and using auditors who rubber-stamped reports without genuine scrutiny. Delve's founder denied the allegations and offered free re-tests to all customers. That denial apparently provoked the whistleblower further: over the weekend, they released what they described as receipts — alleged evidence backing up the original claims.

On Monday, LiteLLM CTO Ishaan Jaffer posted on X. The company would be moving to Vanta, a Delve competitor, for re-certification. They'd also find their own independent third-party auditor. LiteLLM was voting with its feet.

The Deeper Problem: Compliance Theater

The phrase "compliance theater" has circulated in security circles for years. It describes a dynamic where organizations pursue certifications not to improve their actual security posture, but to check a box — to satisfy procurement requirements, close enterprise deals, or signal trustworthiness to investors.

The market dynamics that created Delve are real. Startups face intense pressure to obtain security certifications quickly. SOC 2 audits can take months and cost tens of thousands of dollars. A wave of compliance automation startups — Vanta, Drata, Secureframe, and others — emerged to streamline the process. The pitch: get certified faster, cheaper, with less friction.

That's a legitimate value proposition. But it also creates pressure to optimize for the certificate rather than the underlying security controls. If a compliance vendor competes primarily on speed and price, the incentive structure can quietly shift away from rigor.

The Delve allegations — still unproven in any legal sense — represent the extreme end of that spectrum. But they raise a question that applies more broadly: when you see a security certification on a vendor's website, do you know what it actually verified?

Different Stakeholders, Different Exposures

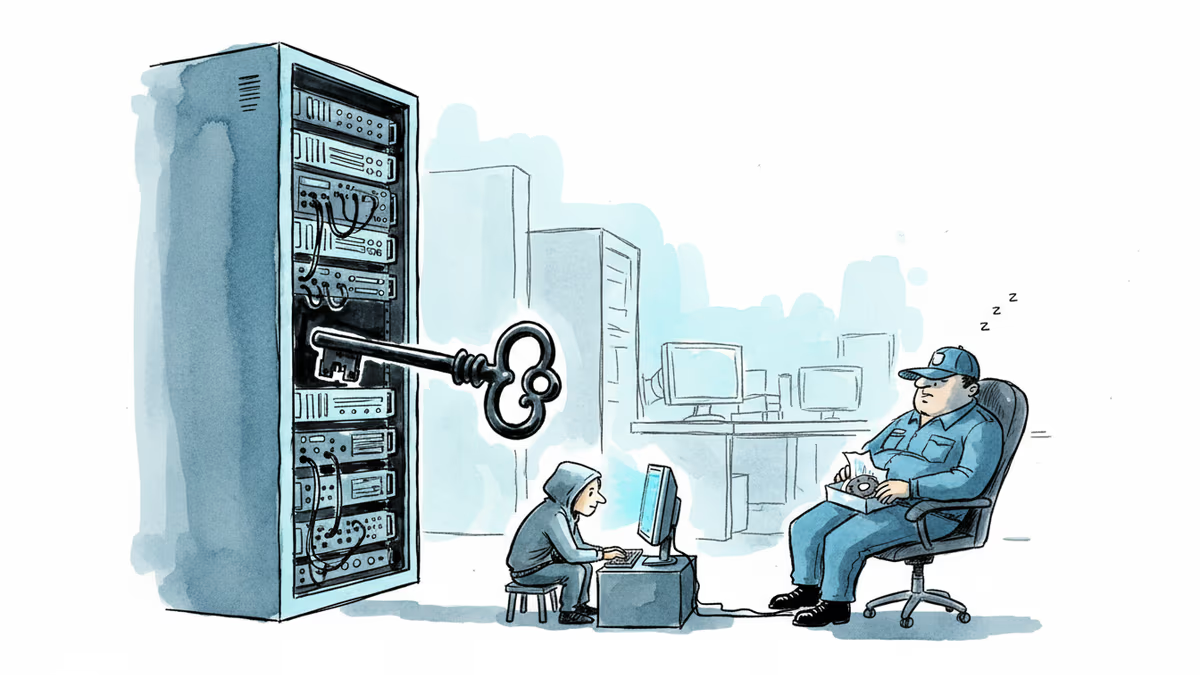

For developers and engineering teams, this week's events are a two-layer trust failure. First, a tool they relied on was compromised. Second, the certification that was supposed to signal safety may have been meaningless. In open source ecosystems, trust is built slowly and lost fast. The LiteLLM team's swift public response helps, but it doesn't eliminate the damage.

For security and procurement teams at enterprises, the implications are more structural. Vendor security certifications are a standard part of due diligence checklists. If those certifications can be obtained through compromised audit processes, the checklist approach breaks down. The uncomfortable question: how many of your current vendors' certifications have you looked behind?

For investors in compliance automation startups, the Delve situation is a reminder that the compliance-as-a-service market is not immune to the dynamics that affect any fast-growing, competitive market. Vanta may benefit from short-term customer migration. But the broader question of how to structurally ensure auditor independence in automated compliance workflows remains open across the category.

For the AI infrastructure layer specifically, the stakes are higher than in conventional SaaS. Tools like LiteLLM sit at a critical junction — they handle authentication tokens, API keys, and routing logic for AI services that may themselves be processing sensitive data. A compromise here isn't a single point of failure; it's a multiplier.
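To make the multiplier concrete, here is a minimal sketch of how one might estimate the blast radius of a gateway compromise. The `Gateway` class, the `blast_radius` helper, and the placeholder keys are all hypothetical and exist only for illustration; they do not reflect how LiteLLM actually stores credentials.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    """Hypothetical model of an AI gateway holding one API key per provider."""
    name: str
    provider_keys: dict[str, str] = field(default_factory=dict)


def blast_radius(gateway: Gateway) -> list[str]:
    """List every provider whose key would need rotating if the gateway were compromised."""
    return sorted(gateway.provider_keys)


# Placeholder values only; these are not real credentials.
gateway = Gateway(
    name="example-gateway",
    provider_keys={
        "openai": "sk-placeholder-1",
        "anthropic": "sk-placeholder-2",
        "google": "placeholder-3",
    },
)

exposed = blast_radius(gateway)
print(f"{len(exposed)} provider keys to rotate: {', '.join(exposed)}")
```

In this toy model a single compromised host exposes every provider at once, so the number of keys to rotate grows with each service routed through the gateway.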

What Comes Next

LiteLLM's move to Vanta and an independent auditor is the right signal. It acknowledges that the previous certification process was insufficient and commits to a more rigorous path. But re-certification takes time, and in the interim, the team faces the harder work of rebuilding developer confidence through transparency rather than paperwork.

The Delve situation remains legally unresolved. The founder's denial and the whistleblower's counter-evidence are both public, but neither has been adjudicated. Other Delve customers are watching. If more companies follow LiteLLM's lead, it will increase pressure on Delve to either substantiate its denials or face broader consequences.

At the industry level, this episode is likely to accelerate conversations about what security certifications should actually require — particularly for AI infrastructure vendors. The question of auditor independence, already a known weakness in the compliance automation model, will get more scrutiny. Regulators in the EU, where AI Act compliance frameworks are still being operationalized, may take note.
