The Pentagon Just Called an American AI Startup a Supply-Chain Risk

After a $200M contract collapse, the Pentagon is building its own LLMs, has signed deals with OpenAI and xAI, and has labeled Anthropic a supply-chain threat. Here is what that means for AI safety, defense tech, and the industry's ethical calculus.

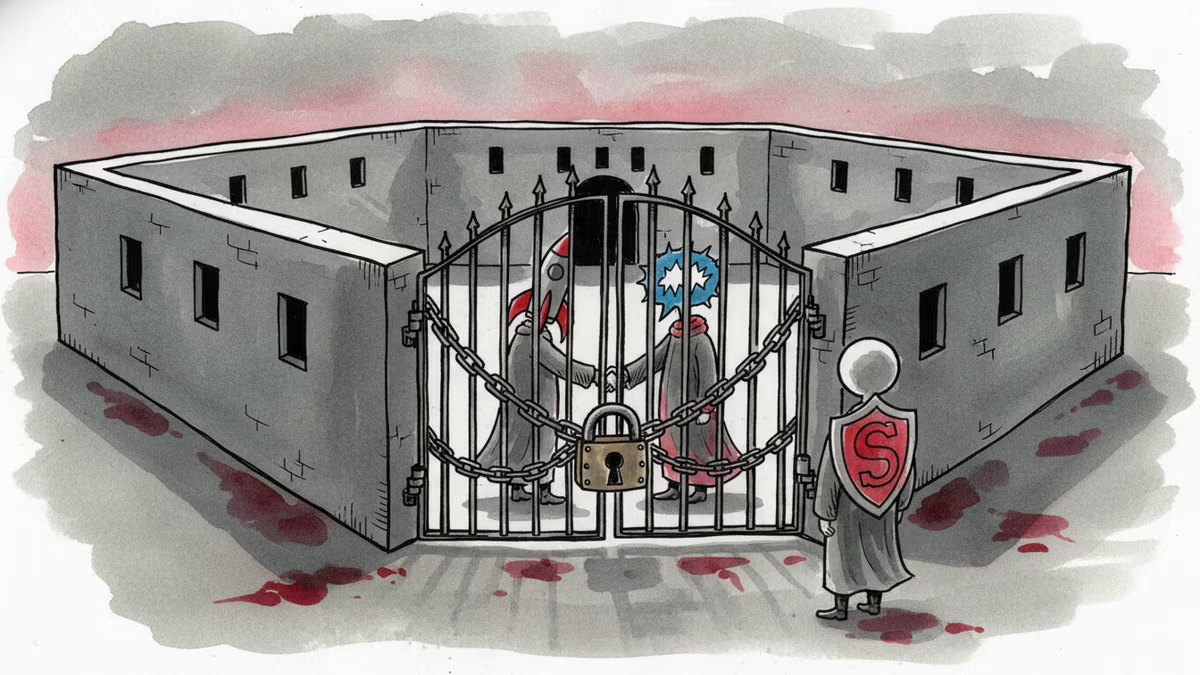

"Supply-chain risk" is the designation Washington reserves for Huawei. For state-linked Russian firms. For foreign adversaries. As of this month, it also applies to Anthropic, a San Francisco AI company founded by former OpenAI researchers who left specifically over concerns that AI was being built unsafely.

How a $200M Deal Fell Apart

The breakdown didn't happen overnight. Over recent weeks, Anthropic and the Department of Defense failed to agree on a single core issue: how much control the military would have over Anthropic's AI once deployed.

Anthropic wanted two contractual guardrails baked in. First, a prohibition on using its AI for mass surveillance of American citizens. Second, a ban on deploying it in autonomous weapons systems — ones that can fire without a human in the decision loop. The Pentagon said no. Negotiations collapsed. The $200 million contract evaporated.

What happened next moved fast. OpenAI, which quietly rewrote its own policies prohibiting military use just months ago, stepped in and signed an agreement with the DoD. Elon Musk's xAI followed, landing a deal to deploy Grok inside classified government networks. And Defense Secretary Pete Hegseth formalized Anthropic's exile by designating it a supply-chain risk, a label that bars not only Anthropic from government work but also any Pentagon contractor from working with Anthropic.

Anthropic is now challenging that designation in court.

Meanwhile, the Pentagon's chief digital and AI officer Cameron Stanley confirmed to Bloomberg that the department isn't waiting around: "The Department is actively pursuing multiple LLMs into the appropriate government-owned environments. Engineering work has begun on these LLMs, and we expect to have them available for operational use very soon."

The Uncomfortable Arithmetic

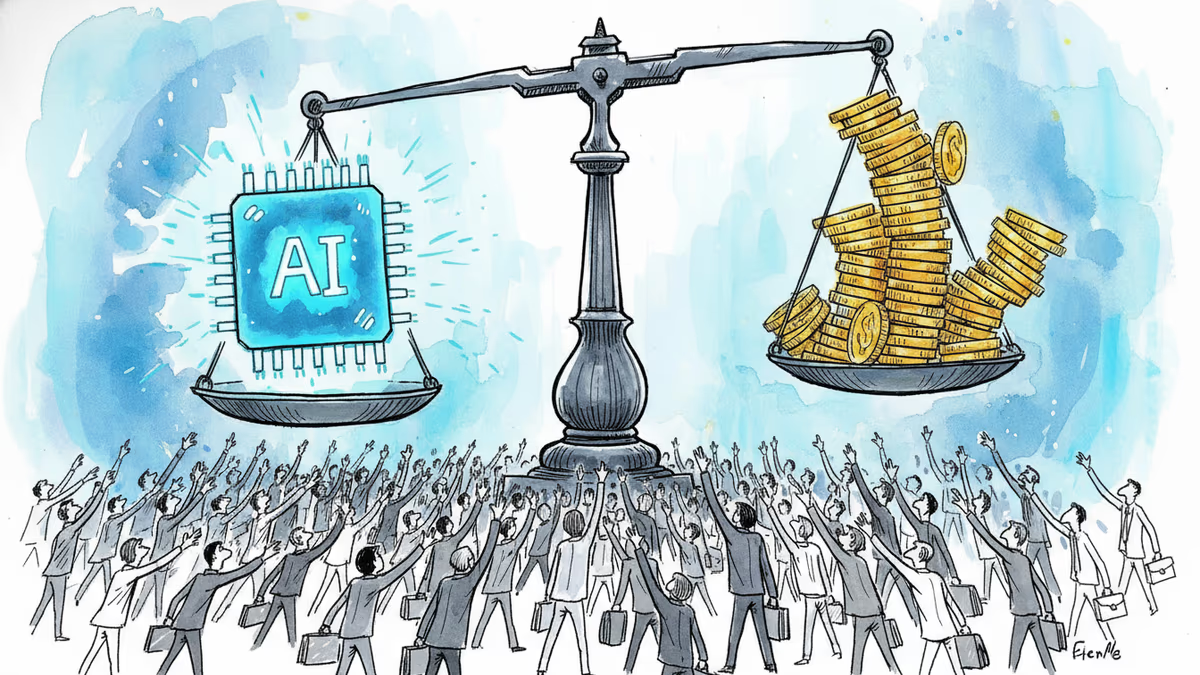

Look at the outcomes side by side. Anthropic held its ethical line and lost a $200 million contract, got labeled a national security threat, and now faces a legal battle. OpenAI adjusted its policies and won. xAI never had such policies to begin with.

If you're the next AI company watching this play out, what lesson do you draw?

This is the question that's making AI safety researchers uncomfortable — not just the immediate outcome, but the precedent it sets. The market signal here isn't subtle: safety constraints cost you government contracts. And government contracts, especially defense ones, are among the largest and most stable revenue streams in the industry.

There's a counterargument worth taking seriously. Anthropic's principled stance could become a genuine competitive differentiator with enterprise clients who are increasingly nervous about AI liability, regulatory exposure, and reputational risk. A company that demonstrably holds the line — even against the Pentagon — may be exactly what risk-averse Fortune 500 legal departments want to see.

Who's Watching, and What They're Thinking

From the Pentagon's perspective, this is straightforward operational logic. When you're running classified systems in contested environments, you cannot have a private company holding veto power over how you use your own tools. The push to build government-owned LLMs is the logical endpoint: eliminate the dependency entirely.

For the AI safety community, the concern runs deeper than one contract. The institutions and norms that govern how AI gets used in lethal systems are still being written. If the largest defense budget in the world is now actively selecting for AI vendors with fewer restrictions, that shapes what the entire industry optimizes for.

For investors, the picture is genuinely mixed. Anthropic has raised billions from Google and Amazon and commands serious enterprise traction. Being locked out of the government market hurts, but it's not fatal. The more interesting question is whether Anthropic's positioning holds up as regulations tighten globally — the EU AI Act, for instance, has provisions that align more closely with Anthropic's stated principles than with the Pentagon's current posture.

For national security analysts, the xAI-Pentagon deal may be the more consequential development. Grok inside classified systems means Elon Musk's company has access to defense infrastructure at a moment when Musk himself holds an unprecedented advisory role in the federal government. Senator Elizabeth Warren has already pressed the Pentagon on this. It's a conflict-of-interest question that hasn't been cleanly answered.
