The Chip That Broke NVIDIA's AI Monopoly

OpenAI deploys GPT-5.3-Codex-Spark on Cerebras chips instead of NVIDIA for the first time, delivering 15x faster performance and reshaping the AI hardware landscape

1,000 Tokens Per Second Changes Everything

When OpenAI announced GPT-5.3-Codex-Spark on Thursday, the headline wasn't just another model release. For the first time, the company deployed a production AI model on non-NVIDIA hardware, choosing Cerebras chips instead.

The numbers tell the story: over 1,000 tokens per second, roughly 15 times faster than its predecessor. To put that in perspective, Anthropic's Claude Opus 4.6 in premium fast mode reaches about 2.5 times its standard speed of 68.2 tokens per second (roughly 170 tokens per second), though it's a larger, more capable model.
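
To make those throughput figures concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the predecessor's speed is inferred from the "15 times" claim rather than reported directly, and the 2,000-token response size is a hypothetical chosen purely for illustration. It also ignores network latency and time to first token.

```python
# Back-of-the-envelope comparison of generation time at the quoted speeds.
# Assumptions: constant throughput, no network latency, no time-to-first-token.

def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the seconds needed to emit num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second

# Figures quoted in the article.
SPARK_TPS = 1000.0                 # "over 1,000 tokens per second" (lower bound)
OPUS_STANDARD_TPS = 68.2           # Claude Opus 4.6 standard speed
OPUS_FAST_TPS = 2.5 * OPUS_STANDARD_TPS  # "about 2.5 times" in fast mode

# Inferred, not reported: predecessor speed implied by "roughly 15 times faster".
IMPLIED_PREDECESSOR_TPS = SPARK_TPS / 15

# Hypothetical response size, used only for illustration.
RESPONSE_TOKENS = 2000

rates = {
    "Codex-Spark (quoted)": SPARK_TPS,
    "Predecessor (inferred)": IMPLIED_PREDECESSOR_TPS,
    "Opus 4.6 standard (quoted)": OPUS_STANDARD_TPS,
    "Opus 4.6 fast mode (derived)": OPUS_FAST_TPS,
}

for name, tps in rates.items():
    seconds = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{name}: {tps:.1f} tok/s, {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, a response that takes about half a minute at the inferred predecessor speed would arrive in about two seconds, which is the gap between waiting on a tool and interacting with it.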

"Cerebras has been a great engineering partner, and we're excited about adding fast inference as a new platform capability," said Sachin Katti, head of compute at OpenAI.

Why Developers Are Paying Attention

Codex-Spark is rolling out as a research preview to ChatGPT Pro subscribers ($200/month) through the Codex app, command-line interface, and VS Code extension. OpenAI is also providing API access to select design partners.

The model ships with a 128,000-token context window and handles text only at launch. But the real story isn't the features—it's the hardware choice.

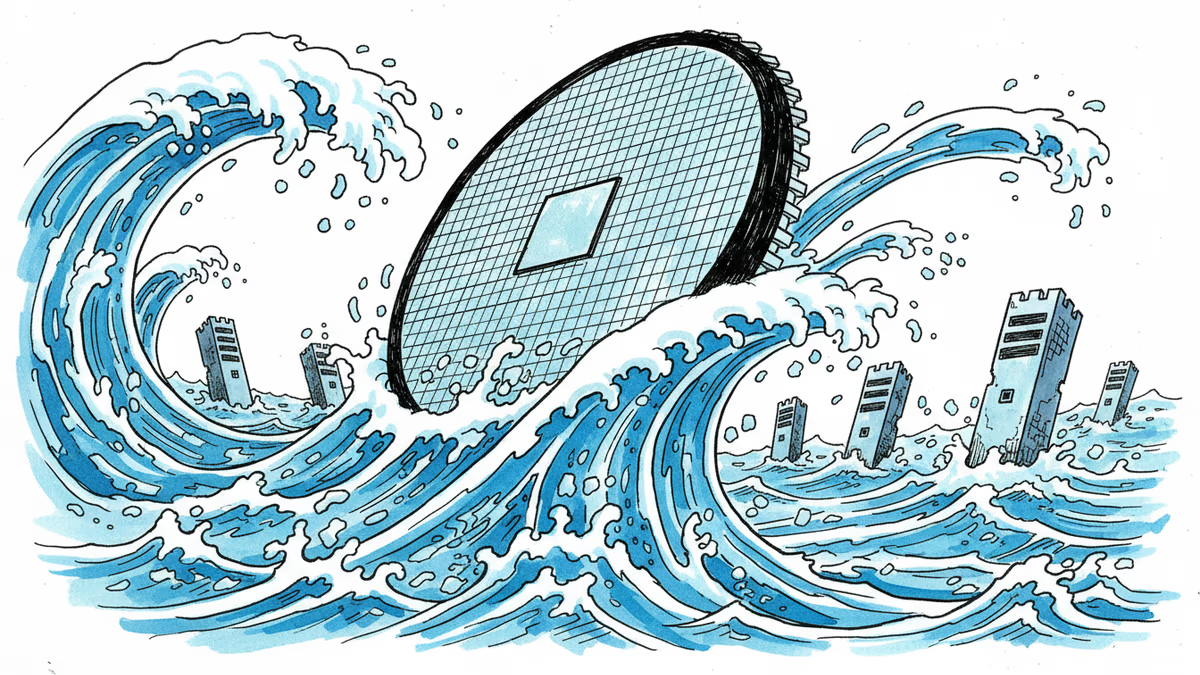

The Wafer-Scale Revolution

Cerebras took a radically different approach from traditional chip design. Instead of connecting multiple smaller chips, the company built its Wafer Scale Engine (WSE) as one massive chip using an entire silicon wafer. Keeping compute and memory on a single piece of silicon avoids much of the chip-to-chip data movement that bottlenecks conventional GPU clusters.

For years, AI companies have been held hostage by NVIDIA's GPU shortage and premium pricing. Startups burned through funding waiting for hardware allocation. Enterprise customers faced months-long delays for high-end chips.

This announcement signals that viable alternatives exist—and they're production-ready.

The New Hardware Dilemma

Faster inference sounds great, but it creates new challenges. Developers now face a choice: stick with the established NVIDIA ecosystem or explore alternatives that might offer better performance per dollar.

The decision isn't just technical. It's about vendor lock-in, optimization costs, and long-term platform strategy. Companies that built their entire AI infrastructure around CUDA and NVIDIA's software stack can't easily switch overnight.

Meanwhile, cloud providers are watching closely. Amazon, Microsoft, and Google have all invested heavily in custom AI chips. OpenAI's move validates the strategy of diversifying beyond NVIDIA.

What This Means for Innovation

The real winners might be AI applications that were previously too expensive to run at scale. Real-time code generation, interactive debugging, and live pair programming become economically viable when inference costs drop dramatically.

For developers, this could mean the difference between a feature that's "technically possible" and one that's "actually profitable." The 15x speed improvement isn't just about faster responses—it's about entirely new use cases.
