ChatGPT's 'Adult Mode' Is Coming—And the Word Choice Tells You Everything

OpenAI is rolling out adult text features for ChatGPT, calling it 'smut' rather than 'pornography.' That single word choice reveals a calculated strategy at the intersection of markets, regulation, and ethics.

One word is doing a lot of heavy lifting right now: smut.

When an unnamed OpenAI spokesperson described the company's upcoming adult content feature to The Wall Street Journal, they didn't say "pornography." They said smut—a word with a literary pedigree, rooted in romance fiction and fan-fiction communities, carrying just enough cultural legitimacy to stay out of the legal crosshairs that "porn" would immediately attract. ChatGPT's long-delayed adult mode is finally coming, and the language OpenAI chose to announce it tells you more about the company's strategy than any press release would.

Here's what we know: the feature, first announced in October 2025, will allow users to engage in text-based conversations with adult themes. At launch, it will not extend to image, voice, or video generation. CEO Sam Altman had previously stated that the company had sufficiently mitigated the "serious mental health issues" associated with its AI model to justify relaxing safety restrictions and introducing what he called "erotica."

The Regulatory Chessboard

The text-only limitation isn't accidental restraint—it's legal strategy. Globally, legislation targeting non-consensual intimate images (NCII) and AI-generated deepfakes has been accelerating. The EU's AI Act, the UK's Online Safety Act, and a patchwork of US state laws have all put image-based sexual content generation firmly in the regulatory spotlight. By keeping adult features confined to text at launch, OpenAI sidesteps the most legally volatile terrain while still opening a new revenue avenue.

The "smut vs. pornography" framing works the same way. In most jurisdictions, the legal definitions and distribution obligations attached to pornography are specific and burdensome. Smut, by contrast, occupies a gray zone—adult, yes, but with enough artistic and literary precedent to complicate straightforward classification. It's a word that buys time.

The Market Logic Is Hard to Ignore

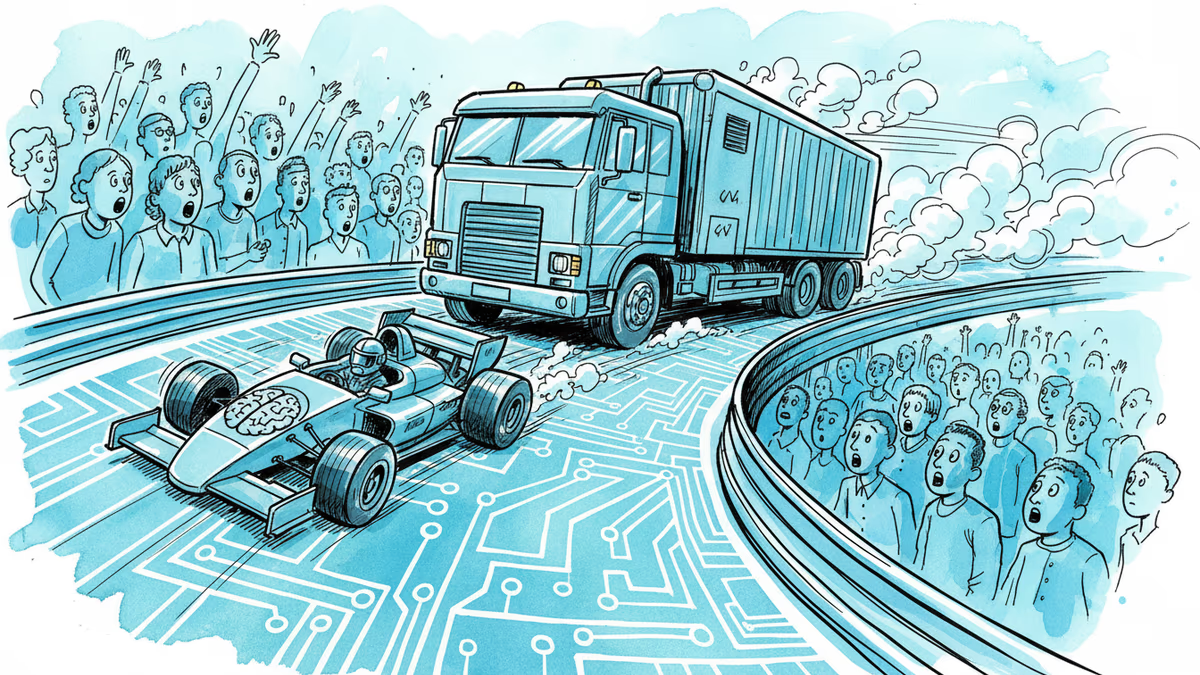

This isn't about OpenAI suddenly changing its values. It's about competitive pressure and revenue math.

Character.AI, Replika, and a constellation of smaller AI companion apps have already built substantial paying user bases on the back of adult content features. Open-source models circulate freely with no restrictions whatsoever. Meanwhile, OpenAI's valuation sits at roughly $300 billion, and sustaining that number requires subscription growth. Adult content is one of the most reliably monetizable categories in digital platforms—OnlyFans processed roughly $6.6 billion in transactions in 2023 alone.

The calculus isn't complicated: users who want these features will find them somewhere. The question is whether OpenAI wants to be in that market or cede it entirely.

Who Wins, Who Worries

For everyday users, the pitch is straightforward personal autonomy: consenting adults choosing what to do with a text interface. That argument is intuitive and, in liberal democratic frameworks, largely persuasive. What's less clear is the long-term behavioral impact. Research on emotional attachment to AI companions is still nascent, and the effects on users who are socially isolated, emotionally vulnerable, or underage and willing to lie about their age remain poorly understood.

For regulators, this creates a new enforcement puzzle. There's currently no robust age-verification architecture on ChatGPT. "Adult mode" will likely sit behind a terms-of-service checkbox—the same barrier that has proven largely ineffective on every other platform that's tried it. Expect this to become a flashpoint in ongoing AI oversight hearings in Washington and Brussels.

For investors and competitors, the signal is that OpenAI is willing to enter markets it previously avoided on ethical grounds when the financial pressure is sufficient. That's useful information about where the company's priorities sit as it navigates the path toward profitability.

The Counterargument Deserves Airtime

OpenAI says it has mitigated the mental health risks enough to proceed. But that claim rests on internal assessments the company hasn't made public. Independent researchers studying AI companion dependency, parasocial AI relationships, and the effects of hyper-personalized sexual content have raised concerns that corporate reassurance alone does not answer.

There's also the question of what "text-only at launch" actually means over time. Feature creep on AI platforms is well-documented. The gap between text and image generation has been narrowing, not widening, as multimodal models mature. Today's constraint may simply be this quarter's constraint.
