Jensen Huang Sees $1 Trillion. Should You Believe Him?

At GTC 2026, Nvidia CEO Jensen Huang doubled his chip demand forecast to $1 trillion through 2027. Here's what that number actually means — and what it doesn't.

A few months ago, at GTC DC, Jensen Huang told a room full of investors that demand for Nvidia's chips would hit $500 billion. The crowd was stunned. On Monday, he took the stage in San Jose and doubled it.

What He Actually Said

At Nvidia's annual GTC Conference on March 16, CEO Jensen Huang projected that cumulative demand for the company's Blackwell and next-generation Vera Rubin chips would reach at least $1 trillion through 2027. That's up from the $500 billion figure he cited just months ago at GTC DC.

"$500 billion is an enormous amount of revenue," Huang acknowledged mid-keynote. "Well, I'm here to tell you that right now where I stand — a few short months after GTC DC, one year after last GTC — I see through 2027, at least $1 trillion."

The Vera Rubin architecture, first announced in 2024 and officially entering production in January 2026, is Nvidia's answer to what comes after Blackwell. The company's stated numbers are striking: 3.5x faster than Blackwell on model-training tasks, 5x faster on inference, and peak performance of up to 50 petaflops. Full production ramp is expected in the second half of 2026.

Why This Number Lands Differently Right Now

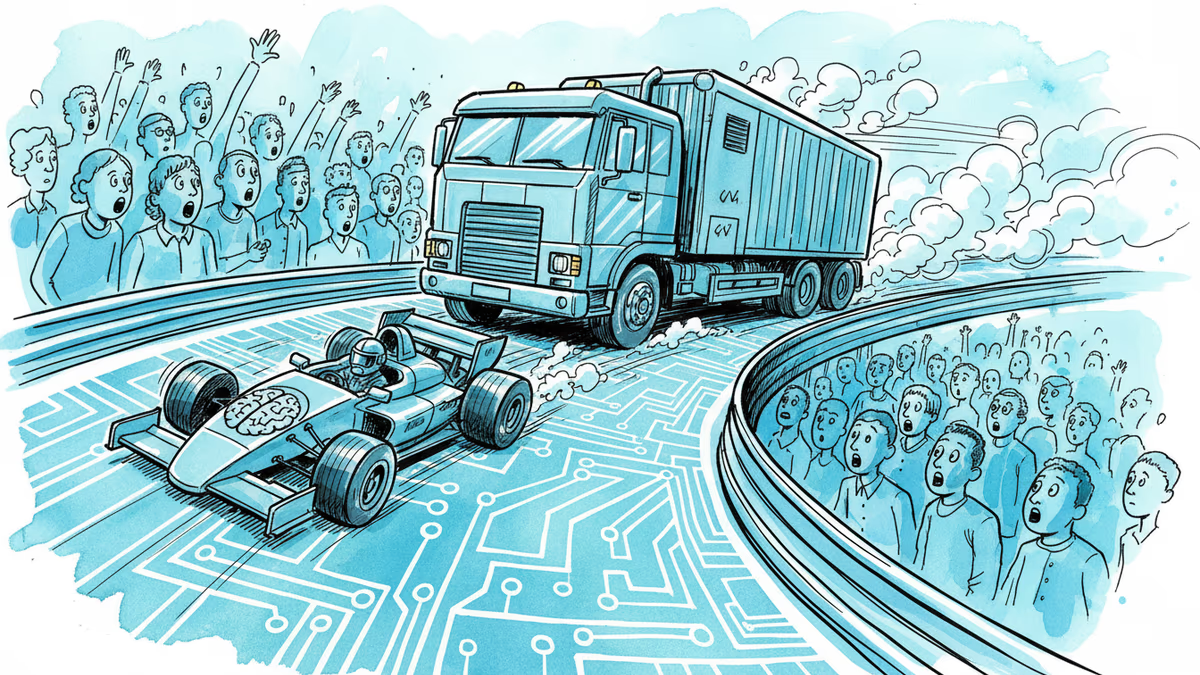

Timing matters here. In early 2025, DeepSeek's low-cost AI models rattled markets and sparked a genuine question: does the AI industry actually need this many expensive chips? Nvidia's stock dropped sharply. The narrative briefly shifted to "efficient AI over expensive hardware."

Huang's $1 trillion projection is, in part, a direct rebuttal to that narrative. His argument — made implicitly through the sheer scale of the number — is that more efficient models don't reduce chip demand. They expand the use cases for AI, which expands demand. It's a version of Jevons' paradox applied to compute: the cheaper and easier AI becomes to run, the more of it we want to run.
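To make the Jevons argument concrete, here is a minimal sketch using a textbook constant-elasticity demand curve. This is an illustrative assumption, not a model Huang or Nvidia presented: the function name `total_spend`, the 50% price cut, and the elasticity values are all hypothetical. The point it shows is that cheaper compute raises total spending only if demand is elastic enough (elasticity above 1).

```python
def total_spend(price: float, elasticity: float,
                base_price: float = 1.0, base_quantity: float = 1.0) -> float:
    """Total spending under an assumed constant-elasticity demand curve.

    Quantity demanded is modeled as Q = Q0 * (P / P0) ** (-elasticity),
    so spending is simply P * Q. All inputs are hypothetical.
    """
    quantity = base_quantity * (price / base_price) ** (-elasticity)
    return price * quantity


# Hypothetical scenario: the cost of a unit of AI work falls by half.
for elasticity in (0.5, 1.0, 1.5):
    before = total_spend(1.0, elasticity)
    after = total_spend(0.5, elasticity)
    print(f"elasticity={elasticity}: total spend changes by {after / before:.2f}x")
```

With an elasticity below 1, halving the price shrinks total spending; at exactly 1 it stays flat; above 1 it grows. Huang's case rests on AI compute sitting in that last regime, which is an empirical bet rather than a given.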

The macro backdrop supports his case. Microsoft, Google, Amazon, and Meta collectively pledged over $300 billion in AI infrastructure spending for 2025 alone. That money has to go somewhere — and right now, a significant portion of it flows through Nvidia.

The Skeptic's Corner

Before taking the $1 trillion at face value, a few things are worth noting.

First, this is a demand forecast delivered by a CEO to his own customers and investors at his own conference. "Demand" isn't revenue. Orders can be delayed, reduced, or cancelled — especially if the macroeconomic environment shifts or if AI investment enthusiasm cools.

Second, competition is quietly building. AMD's MI300 series has found real traction in certain workloads. Google's TPUs, Amazon's Trainium, and Microsoft's custom silicon are all designed to reduce dependence on Nvidia. None of them are close to displacing Nvidia at scale — but the $1 trillion market is exactly the kind of prize that accelerates those efforts.

Third, there's a geopolitical wildcard. US export controls on advanced chips to China have already carved out a chunk of potential demand. Any further tightening — or loosening — could significantly alter the actual numbers.

What It Means Beyond the Stock Price

For investors, the headline is clear: Nvidia is signaling sustained dominance in the AI hardware cycle through at least 2027. But the more interesting question is what a $1 trillion chip market means structurally.

The companies writing those checks — hyperscalers and cloud providers — will eventually need to recover those costs. That means AI services won't get cheaper as fast as many consumers hope. Every API call, every AI-generated image, every copilot suggestion carries the embedded cost of the hardware that runs it.

For developers and startups, the gap between those who can afford frontier AI infrastructure and those who can't is widening. The $1 trillion flowing into Nvidia is also $1 trillion not going into open, distributed, or alternative compute models.
