New York Takes Aim at AI: Labels Required, Data Centers on Hold

New York's legislature is considering bills that would require labels on AI-generated news content and pause data center construction for three years. Here is what that could mean for tech regulation nationwide.

Two bills before the New York legislature could reshape how Americans encounter AI-generated news and where tech companies build their infrastructure. Together, the proposals represent one of the most comprehensive state-level attempts to regulate artificial intelligence in the US.

What's on the Table

The New York Fundamental Artificial Intelligence Requirements in News Act (NY FAIR News Act) would mandate clear labeling on any news content "substantially composed, authored, or created through the use of generative artificial intelligence." But it goes beyond simple disclaimers: the bill would also require human editorial oversight before any AI-generated content can be published.

Meanwhile, a separate proposal would impose a three-year moratorium on new data center construction across the state. This pause would give lawmakers time to study the environmental and infrastructure impacts of these massive facilities that power AI operations.

The timing isn't coincidental. As AI tools become ubiquitous in newsrooms and data centers proliferate to meet computational demands, New York is positioning itself as a regulatory trendsetter.

The Human Factor

The news labeling requirement reflects growing concerns about AI's role in journalism. Under the proposed law, organizations wouldn't just slap an "AI-generated" sticker on content—they'd need a human with "editorial control" to review and approve everything before publication.

This creates an interesting tension. AI can generate content faster than any human writer, but the law would hold that content to the same human review that traditional journalism already applies, which limits how much speed a newsroom actually gains. It also leaves a definitional question open: what counts as "substantial" AI involvement? A spell-check suggestion? A rewritten headline? An entire article?

For newsrooms already struggling with budget constraints, the additional oversight requirement could prove burdensome. Yet supporters argue it's necessary to maintain journalistic integrity and reader trust.

The Data Center Dilemma

The proposed data center moratorium addresses a less visible but equally significant issue. These facilities consume enormous amounts of electricity and water while generating substantial heat—environmental concerns that have sparked debates nationwide.

Microsoft, Google, and Amazon have been rapidly expanding their data center footprints to support AI services. A three-year pause in New York could force these companies to look elsewhere, potentially impacting the state's position in the AI economy.

The moratorium also reflects broader infrastructure challenges. Can existing power grids handle the surge in AI-related energy demands? Should states prioritize economic benefits or environmental protection?

Beyond State Lines

New York's approach could influence federal policy and other states' regulatory frameworks. California has been exploring similar AI labeling requirements, while Texas and Virginia have been more welcoming to data center development.

The bills also highlight the challenge of regulating a rapidly evolving technology. Three years is an eternity in AI development—what seems necessary today might be obsolete by the time the moratorium ends.

International observers are watching too. The EU's AI Act already requires certain disclosures for AI-generated content, and New York's approach could bring US regulations closer to European standards.

Related Articles

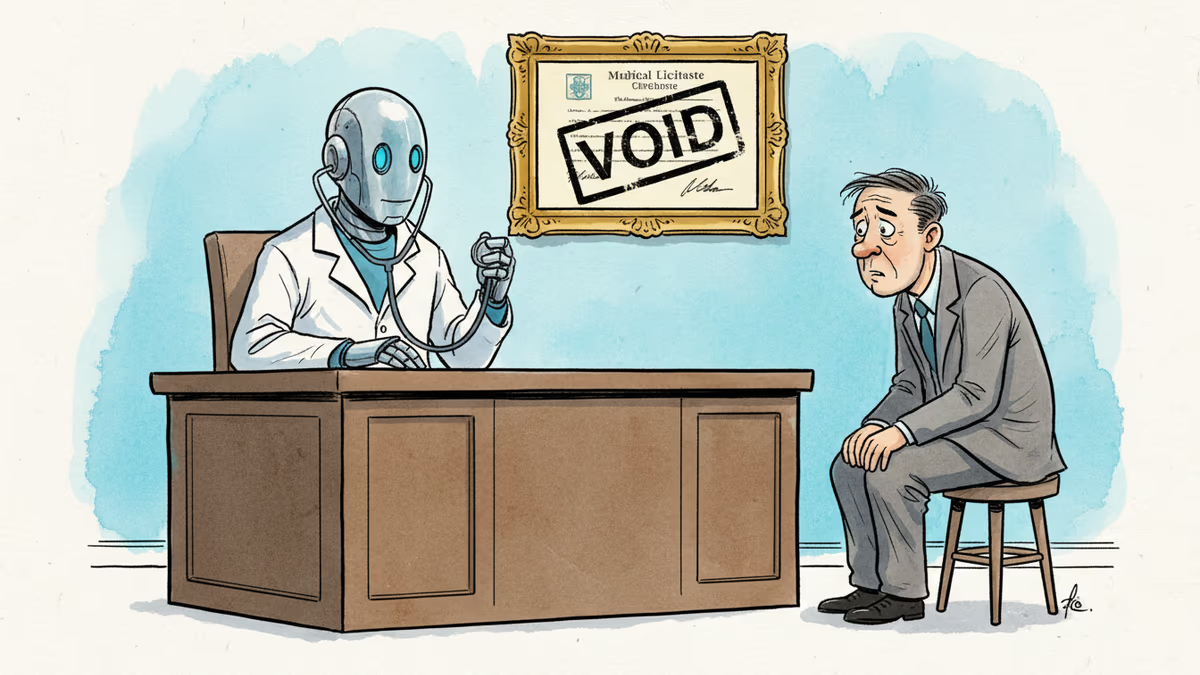

Pennsylvania has sued Character.AI after its chatbot characters claimed to be licensed psychiatrists and provided mental health advice. The case tests whether existing medical law can hold AI platforms accountable.

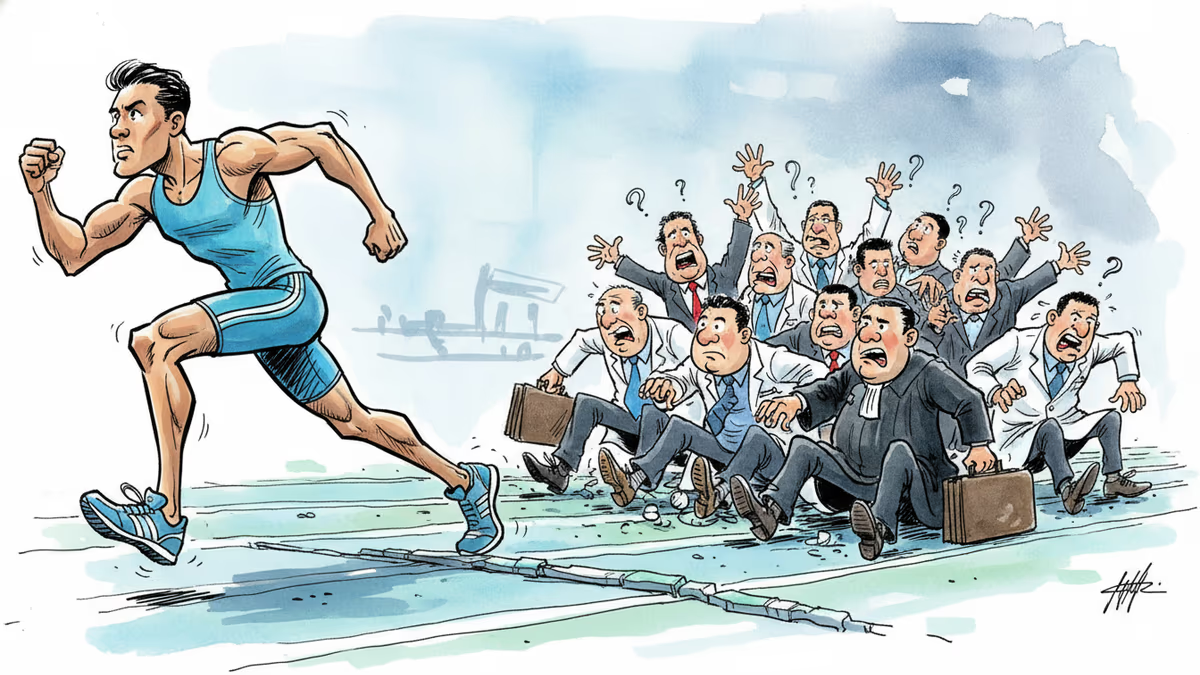

Stanford's 2026 AI Index reveals AI adoption outpacing PCs and the internet, junior dev jobs falling 20%, and the benchmarks we use to measure AI progress are broken. Here's what the data actually says.

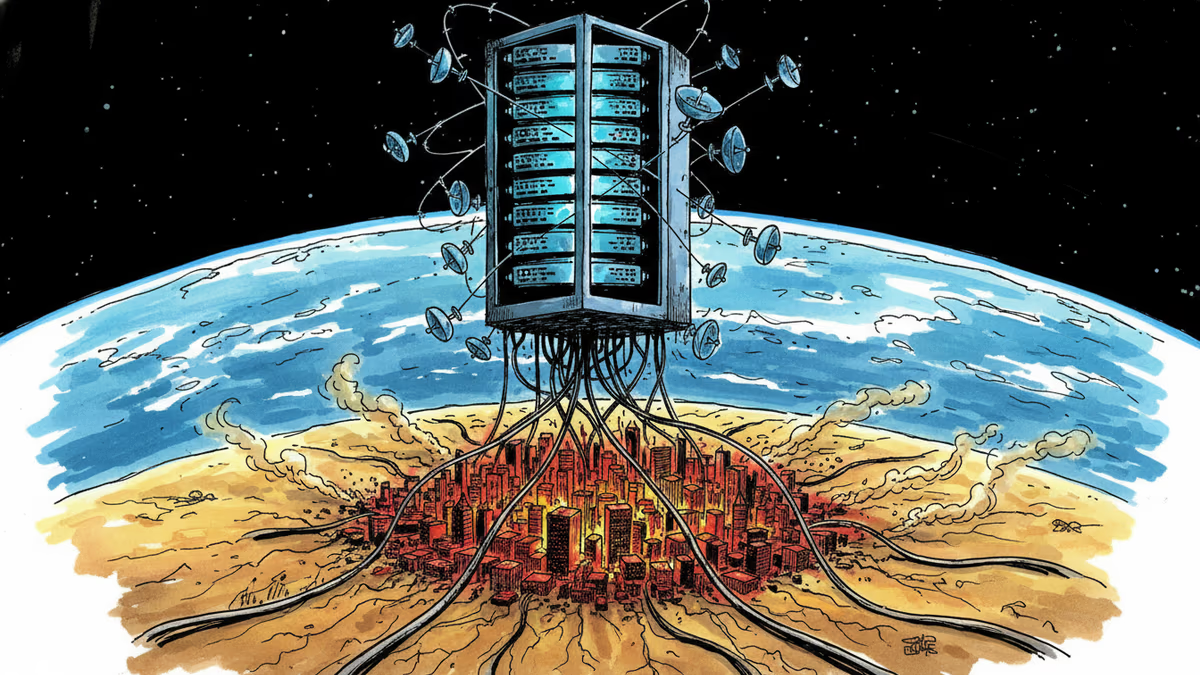

SpaceX wants to launch a million orbital data centers to power AI without draining Earth. The vision is compelling. The physics, economics, and debris math are not.

Microsoft, Amazon, and OpenAI have all launched medical AI tools in recent months—with minimal external evaluation. What's at stake when Big Tech moves fast in healthcare?
