Can You Sue an Algorithm? America's Juries Just Said Yes

Two US juries held Meta liable for hundreds of millions in damages for harming minors. The verdicts challenge Big Tech's long-standing legal shields—and could redraw the rules for every platform on earth.

For decades, Big Tech had two get-out-of-jail-free cards. This week, two separate American juries decided those cards don't cover everything.

What Just Happened

Within days of each other, juries in New Mexico and Los Angeles found Meta liable for harming minors—verdicts that together could run into hundreds of millions of dollars in damages. The Los Angeles jury also held Google's YouTube responsible. Both companies are appealing, and the legal road ahead is long. But the symbolic weight of the verdicts landed immediately.

The surprise isn't just the scale. Meta and Google have long operated behind two powerful legal shields. The first is Section 230 of the Communications Decency Act, which protects platforms from liability for content their users post. The second is the First Amendment, which guards against government interference with speech. For years, these two protections made tech giants effectively lawsuit-proof in cases involving user-generated harm.

So how did plaintiffs get through? By arguing that this isn't really about content at all.

The Argument That Changed Everything

The plaintiffs' legal strategy was precise: don't attack what's on the platform. Attack how the platform was built. Algorithms designed to maximize engagement, notification systems engineered to pull users back, features that reward compulsive scrolling—these are product design decisions, not speech. And Section 230, the argument goes, protects platforms from liability for what users say, not for how the product itself was designed to addict them.

This distinction matters enormously. If courts accept it broadly, every recommendation engine, every infinite scroll, every dopamine-tuned notification system becomes a potential liability. That's not a content moderation problem. That's a business model problem.

The backdrop to these verdicts is well documented. In 2021, a Meta whistleblower revealed that the company's own internal research showed Instagram was damaging the mental health of teenage girls, and that the company had largely suppressed those findings. Thousands of lawsuits followed. These cases are among the first to reach a jury verdict.

Three Ways to Read This

For plaintiffs and child safety advocates, this is long-overdue accountability. Platforms profited from engineering compulsion into their products, knew about the downstream harms, and chose revenue over reform. The jury system, when Congress won't act, becomes the corrective mechanism.

For the platforms, the verdicts are alarming but not yet decisive. Appeals courts may overturn the decisions. Proving direct causation between a platform's design choices and a specific individual's psychological harm is genuinely difficult—and that difficulty doesn't disappear on appeal. Meta and Google will argue, not without some legal merit, that the causal chain is too speculative to sustain.

For regulators and policymakers, the timing is pointed. The Trump administration has shown little appetite for aggressive federal tech regulation, and congressional efforts to pass meaningful youth online safety legislation have stalled for years. Into that vacuum, civil juries are stepping. Whether that's democracy working as designed—or a sign that something is broken in the legislative process—depends on who you ask.

What This Could Actually Change

Money alone won't reshape Meta or Google. These are companies that generated a combined $300+ billion in revenue last year. Even a nine-figure jury award is, in corporate terms, a rounding error.

What could change behavior is if the legal theory holds on appeal and scales. If every algorithmic design decision becomes a potential tort liability, the calculus shifts. Companies might actually redesign engagement systems—not out of altruism, but because the actuarial math changes. Insurance, indemnification, and investor pressure tend to move faster than regulation.

Outside the US, the ripple effects are being watched closely. The EU's Digital Services Act already imposes obligations on platforms regarding algorithmic transparency and protections for minors. The UK's Online Safety Act is in implementation. These American verdicts give regulators elsewhere a data point: juries, at least, think the current system has failed.

Authors

Related Articles

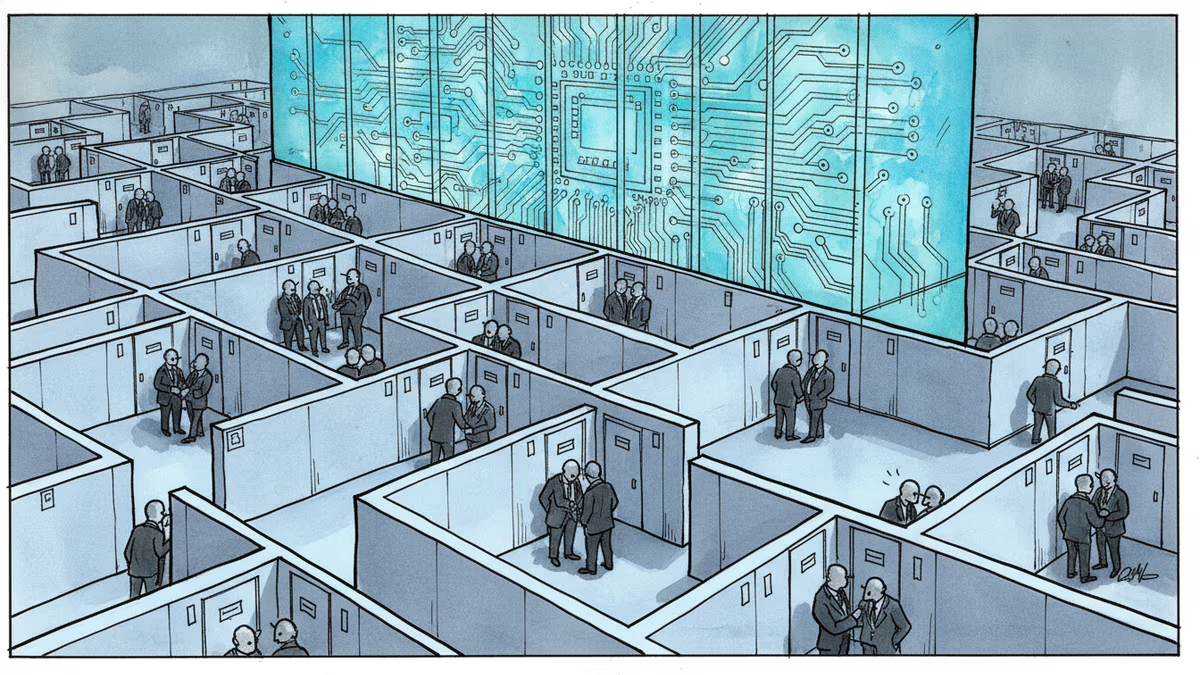

Behind every congressional hearing on Big Tech, there's a quieter room where the real rules get negotiated. As AI regulation, antitrust battles, and privacy law converge on Capitol Hill in 2026, the stakes have never been higher.

Five major publishers and author Scott Turow have filed a class action lawsuit against Meta, alleging the company used illegal pirate sites like LibGen to train its Llama AI models without permission.

New Mexico already won $375 million from Meta. Now it wants something harder to give: a court order forcing Facebook, Instagram, and WhatsApp to redesign themselves. A three-week trial starts Monday.

Meta acquired humanoid robotics startup ARI, adding to a Big Tech arms race in physical AI. The real prize isn't a robot product — it's a new way to train intelligence.
