South Korea KMCC X Grok AI Safety Measures 2026: Cracking Down on Deepfakes

The KMCC has asked X to implement safety measures for its AI model, Grok, to protect minors from deepfake sexual content.

Elon Musk's AI ambitions are hitting a regulatory wall in Seoul. According to Reuters and Yonhap on January 14, 2026, the Korea Media and Communications Commission (KMCC) has formally asked X to implement robust measures to protect minors from sexual content generated by its AI model, Grok.

KMCC Demands AI Safety Measures for Grok on X

The watchdog's request stems from growing alarm over deepfake sexual content facilitated by advanced AI platforms. The KMCC emphasized that X must prevent potential illegal activities on Grok and submit a plan to manage or limit teenage access to harmful materials. Under South Korean law, social media operators are required to designate a minor protection official and provide an annual report on their safety efforts.

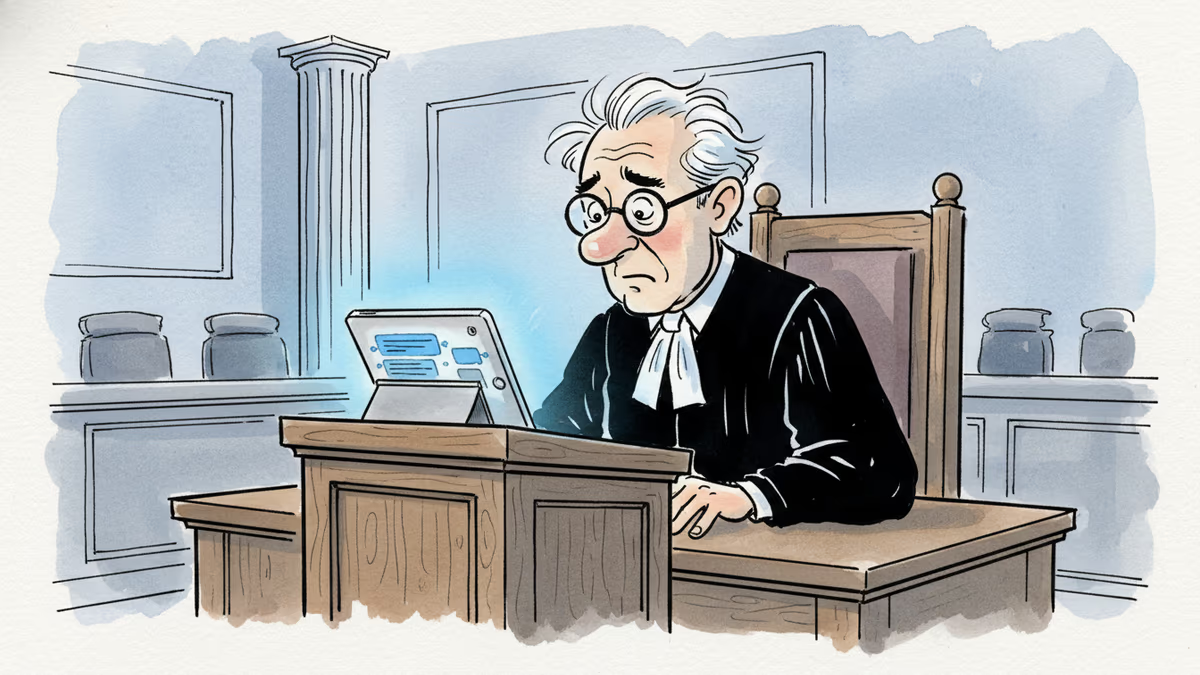

Legal Accountability for AI Service Providers

KMCC Chairperson Kim Jong-cheol stated that while the commission supports the sound development of new technologies, it will not overlook their negative side effects. He noted that creating or circulating non-consensual sexual deepfakes is a criminal offense. The commission plans to revamp its policies so that AI providers take full responsibility for protecting young users from exploitation.
