The 'Hard Problem' of Consciousness Might Be a Category Error

Philosopher David Chalmers' famous 'hard problem of consciousness' is under serious fire. Is the explanatory gap real — or did we build it ourselves? A deep look at the debate reshaping AI ethics and philosophy of mind.

What if the most celebrated unsolved problem in philosophy of mind resists solution for a simpler reason: it was never a real problem to begin with?

That's the provocative argument gaining traction among a growing number of physicists, philosophers, and cognitive scientists who are pushing back against one of academia's most fashionable ideas: the so-called "hard problem of consciousness." And the timing couldn't be more loaded. As AI systems grow increasingly sophisticated in mimicking thought, emotion, and self-report, the question of what consciousness is — and whether it can ever be explained — has moved from seminar rooms into boardrooms, courtrooms, and ethics committees.

How a 1994 Talk Shaped Three Decades of Debate

The story begins in Tucson, Arizona, in 1994, when a then-young philosopher named David Chalmers stepped up to a lectern and reframed the entire conversation about mind and brain. Chalmers drew a sharp distinction between two categories of consciousness problems.

The first — which he provocatively labeled the "easy" problem — covers everything neuroscience is actually trying to do: explain how the brain processes information, integrates sensory data, generates behavior, and produces reports about inner states. It's not easy in any practical sense. We still can't fully explain memory, attention, or why we dream. But Chalmers argued it's tractable in principle: keep doing science, and we'll eventually get there.

The second problem is the one that made him famous. Why does any of this brain activity feel like anything at all? When you see red, your visual system processes signals from light in a particular range of wavelengths. But why does that processing come with a subjective quality, the redness of red? Why doesn't all this information processing go on "in the dark," without any inner experience at all? This, Chalmers declared, is the "hard problem." He argued that explaining the brain's mechanisms and functions, however completely, would leave it untouched.

The concept spread with remarkable speed. The "explanatory gap" between physical processes and subjective experience became a staple of philosophy conferences, popular science books, and increasingly, AI ethics discussions. Consciousness, many concluded, must be something over and above the physical.

The Pattern Behind the Resistance

Critics — and they are growing louder — argue that this conclusion tells us more about human psychology than about consciousness itself.

Consider the historical pattern. When Copernicus made Earth one planet among others, and Galileo's telescope revealed mountains on the Moon and spots on the Sun, the resistance was fierce: the celestial realm was supposed to be fundamentally different from the corrupt, material Earth. When Darwin demonstrated that humans share common ancestry with other animals, the cultural backlash was intense and, in some quarters, never fully subsided. When modern biology revealed that living organisms are built from the same chemistry as rocks and water, it felt like a demotion.

Each time, the resistance followed the same structure: this thing — heaven, humanity, life — must be categorically different from that thing. And each time, the evidence eventually showed otherwise.

The consciousness debate, critics argue, is the latest iteration. The idea that our soul, our inner life, our subjective experience might be of the same fundamental nature as any other physical phenomenon — that it's not transcendent, not separate, not special in kind — is deeply uncomfortable. Medieval Western thought described humans as composed of body and soul: the body decayed, but the soul was immortal, created by God, the repository of memory, emotion, and moral agency. That framework is gone from most academic discourse, but its emotional residue shapes our intuitions more than we typically acknowledge.

Physicist Carlo Rovelli and others contend that the "hard problem" gains its apparent force not from genuine philosophical depth, but from a failure to fully let go of this dualist inheritance. We built the gap into our assumptions, then marveled that we couldn't close it.

The Self-Defeating Zombie

Chalmers' most famous rhetorical device is the "philosophical zombie" — a hypothetical being physically identical to a human in every way, including its verbal reports of feelings, dreams, and experiences, but with no actual inner experience. "There is nobody home," as Chalmers puts it. The very conceivability of such a creature, he argues, proves that consciousness is something beyond physical description.

But the argument contains a critical flaw.

A philosophical zombie, to be empirically indistinguishable from a human, must claim to have conscious experience. It must insist, when asked, that it knows what red looks like, that it feels pain, that it is aware. The physical processes in its brain would produce exactly this conviction — the conviction of having rich inner experience. Which means: if I were a zombie, I would be just as certain as I am now that I am conscious. So how does my certainty about my own consciousness prove anything? The argument is self-undermining.

More fundamentally, the zombie thought experiment only works if you already accept what it's trying to prove: that there is something non-physical happening when humans are conscious. It doesn't demonstrate the existence of an explanatory gap. It presupposes one.

Knowledge From the Inside Out

The deeper rebuttal targets the epistemological assumptions underlying the hard problem.

We tend to imagine science as a view from nowhere — an objective, third-person account of reality observed from the outside. On this picture, consciousness becomes a strange anomaly: a first-person, subjective phenomenon that somehow needs to be derived from an impersonal, objective description. No wonder a gap appears. We installed it at the foundation.

But this picture of science is wrong, or at least incomplete. Empirical knowledge doesn't come from outside the world; it comes from within it. Observers are part of what they observe. Scientific theories are tools that embodied creatures — creatures with brains, histories, and perspectives — have built to navigate reality. They are not transcriptions of an absolute truth delivered from beyond experience. They are themselves aspects of the world they describe.

This means subjectivity isn't a mysterious add-on that science fails to capture. It's a perspective — a special case of the perspectival nature of all knowledge. The difference between experiencing red and measuring the wavelength of red light isn't a difference between two kinds of reality. It's a difference between two vantage points on the same event: the brain undergoing the process, and an observer measuring it from outside.

As Rovelli puts it: we don't need to explain why red looks red for the same reason we don't need to explain why cats look like cats. The name "red" just is the name of the process we undergo when we see, remember, or think about that color. There's no further mystery requiring a separate explanation.

What Hangs in the Balance

This isn't merely an academic dispute. The stakes have become concrete in ways Chalmers couldn't have anticipated in 1994.

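If consciousness is genuinely beyond physical explanation, if there's an irreducible "something it is like" to be a conscious creature that no account of information processing can capture, then the question of whether AI systems could ever be conscious may have no empirical answer at all. We can measure behavior and mechanism, but on this view experience can't be read off from either. Chalmers himself has argued that a silicon system with the right functional organization would be conscious, but that verdict rests on philosophical argument, not on anything an experiment could detect. The hard problem, on this reading, is also a hard wall.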

But if the hard problem is a category error — if consciousness is a natural phenomenon, a high-level description of certain kinds of physical organization — then the question reopens entirely. Not necessarily with an easy answer. Understanding how specific neural architectures give rise to specific experiences remains an enormously difficult empirical challenge. But it becomes a scientific question rather than a permanently closed philosophical one.

For AI ethicists and researchers, this distinction matters enormously. It shapes how we think about moral status, about what kinds of systems deserve consideration, about what we might be creating when we build increasingly complex AI.

For the rest of us, it touches something more personal. The soul, Rovelli argues, is real — not as something added to a physical state, but as a high-level description subtracted from a complete physical account. Mental processes are physical processes described in a language that captures their salient features. A kitchen table is real even though it's also a collection of atoms. Your soul is real even if it's also a pattern of neural activity. The update in understanding doesn't dissolve the phenomenon. It relocates it.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

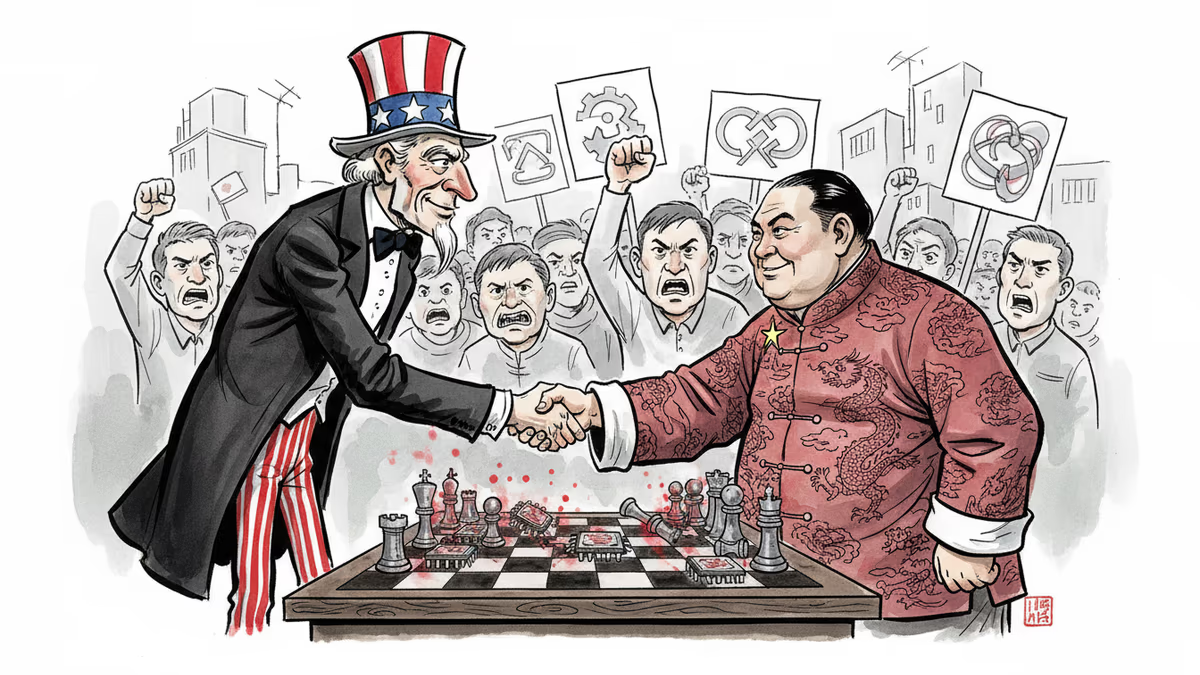

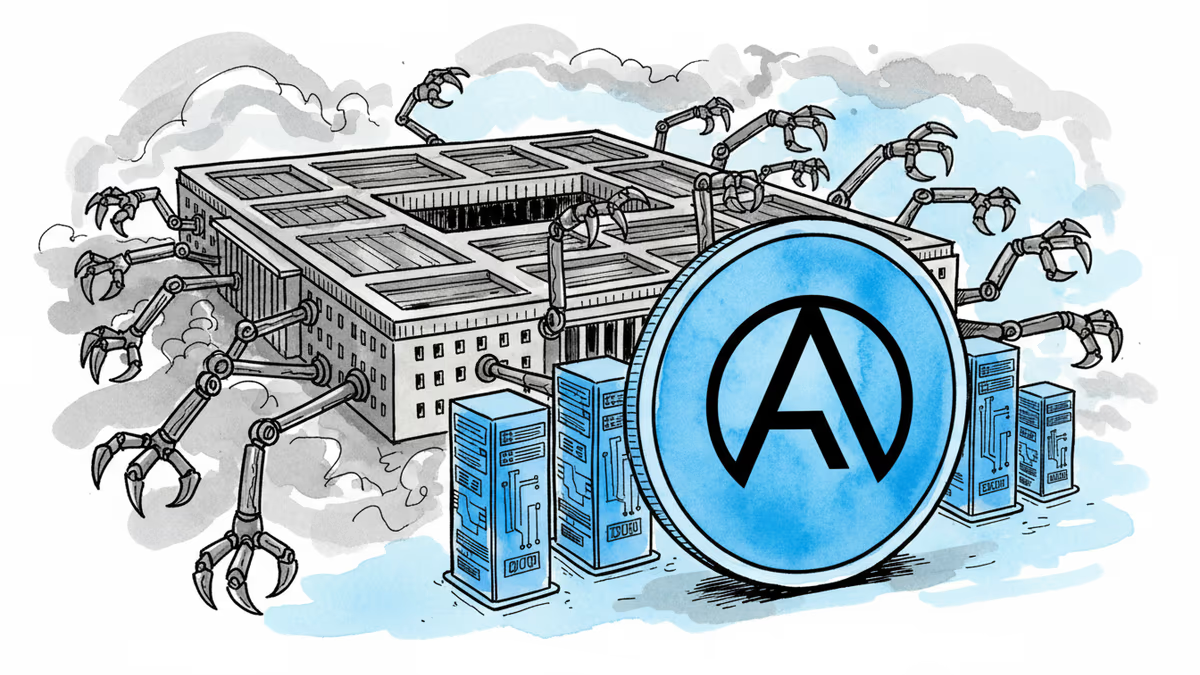

Anthropic rejected a Pentagon contract over surveillance concerns while OpenAI rushed to sign. The market's reaction reveals what users really value in AI companies.

Zebrafish research reveals why behavioral asymmetry exists across species. From fish circling patterns to human handedness, scientists uncover the neural basis of preference.

The Pentagon blacklisted Anthropic for refusing mass surveillance uses, embracing OpenAI instead. Is America becoming the authoritarian bogeyman it warns against?

Anthropic's contract termination with Pentagon reveals the complex tensions between AI ethics and national security. Where should companies draw the line?

Thoughts

Share your thoughts on this article

Sign in to join the conversation