AI Zero-Day Vulnerability Detection 2026: Claude 4.5 and the New Security Frontier

AI models like Claude 4.5 are now detecting zero-day vulnerabilities that humans missed. Here is how AI zero-day vulnerability detection is reshaping cybersecurity in 2026.

Can AI find bugs that don't exist in any known database? It's already happening. In November 2025, the cybersecurity startup RunSybil discovered a critical flaw in a customer's GraphQL deployment through its AI tool, Sybil. What makes this remarkable is that finding the issue required deep reasoning across multiple systems, using knowledge that simply didn't exist on the public internet at the time.

Advancing AI Zero-Day Vulnerability Detection with Claude 4.5

We've reached an inflection point where frontier models are becoming exceptionally skilled at finding flaws. According to Dawn Song, a computer scientist at UC Berkeley, the combination of simulated reasoning and agentic AI (which can install tools and browse the web) has drastically amped up cyber capabilities. Benchmark results show the detection rate rising from 20% to 30% in about three months.

| Model | Date | Vulnerability Detection Rate |
|---|---|---|
| Claude 4 Sonnet | July 2025 | 20% |
| Claude 4.5 | October 2025 | 30% |

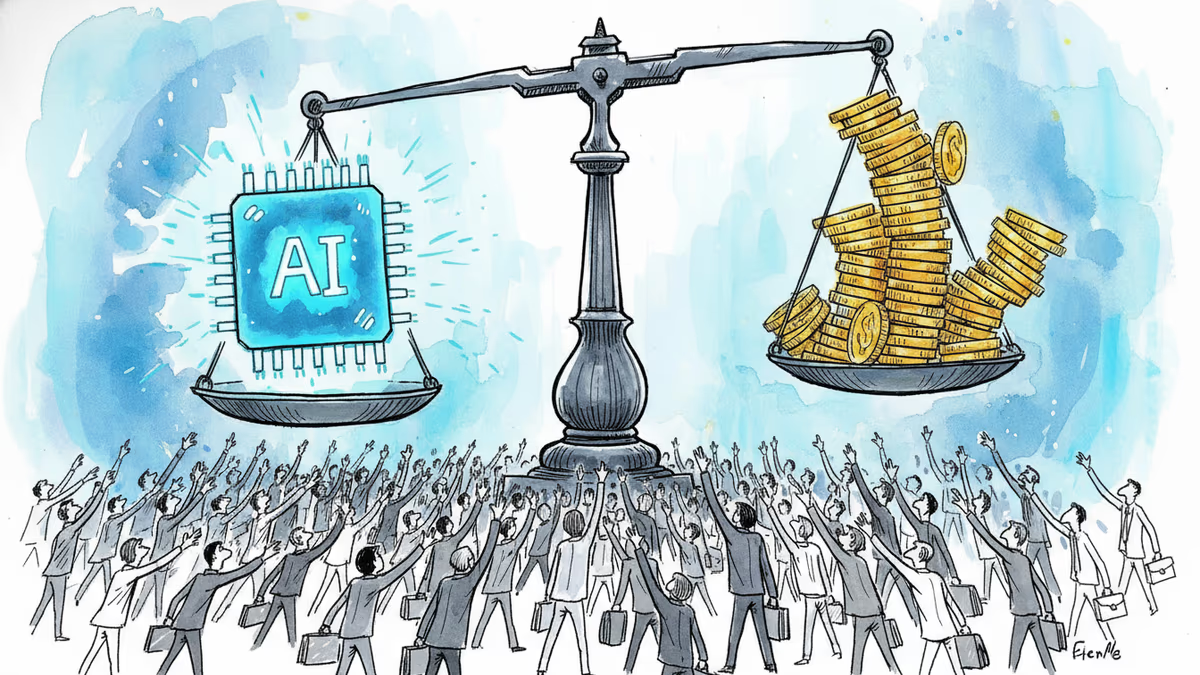

The Race Between Offensive and Defensive AI

In the CyberGym benchmark, which includes 1,507 known vulnerabilities, Anthropic's Claude 4.5 identified 30% of the bugs. While this is a win for security researchers, it's also a warning: hackers can use the same low-cost intelligence to generate malicious code and carry out malicious actions. Song suggests that frontier AI companies should share models with researchers early to find bugs before general release.
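To put the percentages in absolute terms, here is a minimal arithmetic sketch using only the figures quoted above. The reported rates are rounded to whole percents, so the resulting count is approximate. The comparison with the 20% figure assumes it was measured on a comparable benchmark set, which the article does not state explicitly. The function names are illustrative.

```python
# Figures quoted in the article. Rates are rounded, so counts are approximate.
CYBERGYM_TOTAL = 1507  # known vulnerabilities in the CyberGym benchmark


def approx_found(rate_percent: int, total: int = CYBERGYM_TOTAL) -> int:
    """Approximate number of vulnerabilities found at a whole-percent rate."""
    return round(total * rate_percent / 100)


def relative_gain(old_percent: int, new_percent: int) -> float:
    """Relative improvement of the new rate over the old rate."""
    return (new_percent - old_percent) / old_percent


# Claude 4.5 at 30% of 1,507 known vulnerabilities.
print(f"Approximate bugs identified at 30%: {approx_found(30)}")

# Moving from 20% to 30% is 10 percentage points, i.e. a 50% relative gain
# (assuming both rates refer to a comparable benchmark set).
print(f"Relative gain from 20% to 30%: {relative_gain(20, 30):.0%}")
```

In other words, a 30% rate corresponds to roughly 450 of the 1,507 known vulnerabilities, and the jump from 20% to 30% is a 50% relative improvement, not a 10% one.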
