Everyone Wants AI. Except the People.

Companies are racing to deploy AI everywhere, but consumers keep saying no. What happens when the gap between corporate enthusiasm and public trust keeps widening?

Companies can't stop talking about AI. Consumers can't stop saying no. And the gap between those two realities keeps getting wider.

The Disconnect Is Real—and Growing

Study after study lands on the same finding: people are skeptical of AI, worry about its consequences, and don't think the benefits outweigh the costs. This isn't a fringe reaction. It's a consistent signal across demographics, industries, and geographies. Meanwhile, boardrooms and earnings calls are saturated with AI announcements, AI roadmaps, and AI investment pledges worth hundreds of billions of dollars.

Something doesn't add up.

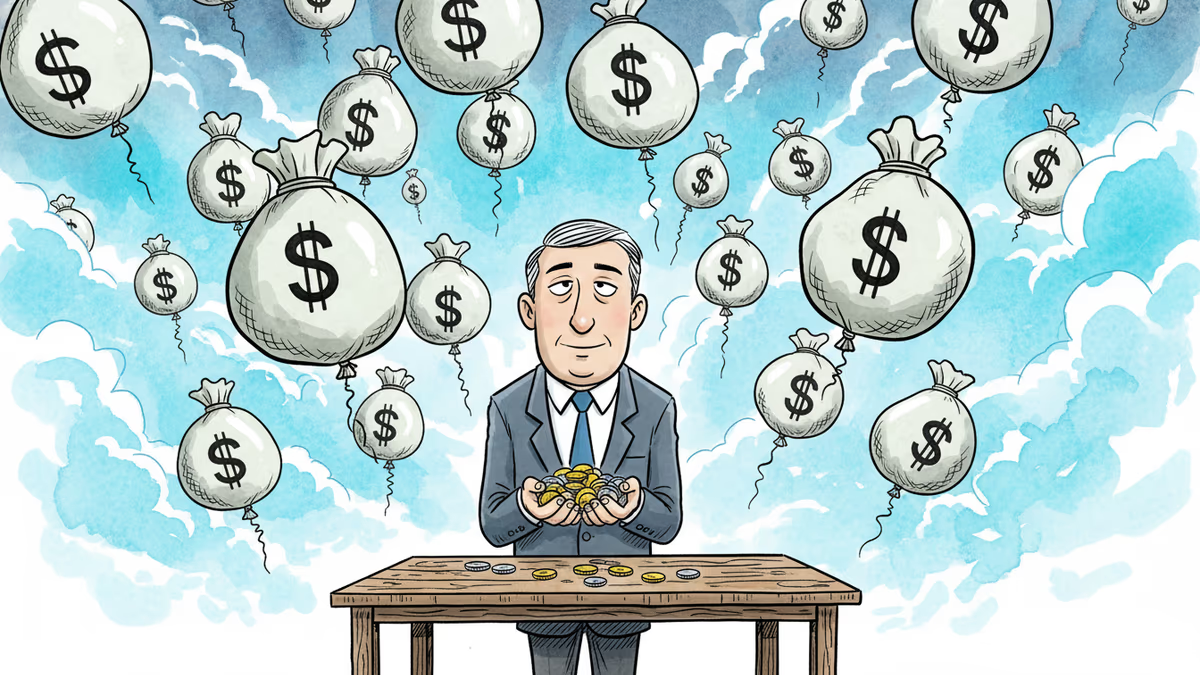

The companies deploying AI aren't ignoring consumer sentiment out of malice. The economics are hard to resist. Automating customer service can cut operational costs by 30–40%. AI-assisted coding tools can compress development timelines significantly. When your competitor is doing it and your investors expect it, waiting for public approval feels like a luxury. So the technology gets embedded quietly—inside search results, recommendation feeds, customer support queues—without anyone explicitly asking users if they want it.

Why People Are Pushing Back

The resistance isn't really about technology. It's about trust—and trust has a specific anatomy here.

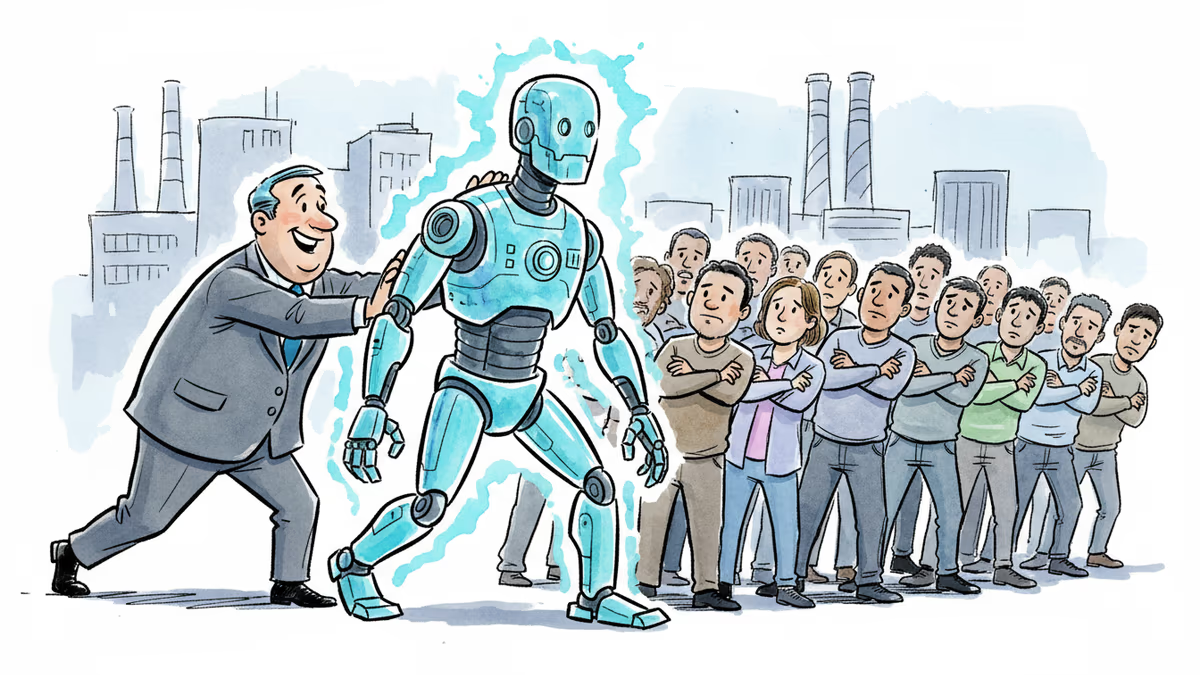

First, there's the job anxiety layer. Automation has always displaced workers, but AI feels different in scope and speed. It's not just factory floors; it's radiologists, paralegals, writers, and coders. When people look at AI, many see a technology designed to replace them, not assist them.

Then there's the data layer. Every AI interaction is a data transaction. People increasingly understand this, even if they can't articulate the technical details. The question "what are you doing with my information?" rarely gets a satisfying answer.

Finally, there's the accountability gap. When an AI system makes a wrong decision—denies your insurance claim, flags your resume, misidentifies you—who do you call? The answer is usually: nobody. That powerlessness is corrosive to trust.

The Business Case Is Running Ahead of the Social Contract

Google, Microsoft, Amazon, Meta—they've all made AI central to their product strategies. The implicit bet is that consumer resistance is a temporary friction, not a structural ceiling. Get people using the product, the argument goes, and skepticism fades.

Sometimes that's true. People resisted online banking, GPS tracking in phones, and algorithmic news feeds—and then adopted all of them. But those technologies offered obvious, immediate personal benefits. The value proposition was clear.

With AI, the calculus is murkier. The efficiency gains often accrue to the company, not the user. You get a slightly faster chatbot; the company eliminates dozens of customer service jobs. That asymmetry is something consumers are picking up on, even if they can't always name it precisely.

Different Stakeholders, Different Realities

For regulators, this gap is a policy problem. The EU's AI Act is one attempt to impose guardrails—transparency requirements, high-risk use case restrictions, human oversight mandates. US regulators are moving more slowly, caught between innovation pressure and consumer protection mandates. The tension is real and unresolved.

For investors, consumer skepticism is a risk factor that hasn't been fully priced in yet. If public resistance hardens into regulatory action or active product avoidance, the AI infrastructure buildout (hundreds of billions in data centers and chips) becomes much harder to justify.

For workers, the stakes are existential in ways that quarterly earnings reports don't capture. The question isn't just "will AI take my job" but "who decided that was acceptable, and when did I get a vote?"

For consumers, the frustrating reality is that opting out is increasingly difficult. AI is being woven into services people depend on. Choosing not to use AI-powered tools may soon mean choosing not to use the internet.
