Who's Really Voting—You, or Your AI Agent?

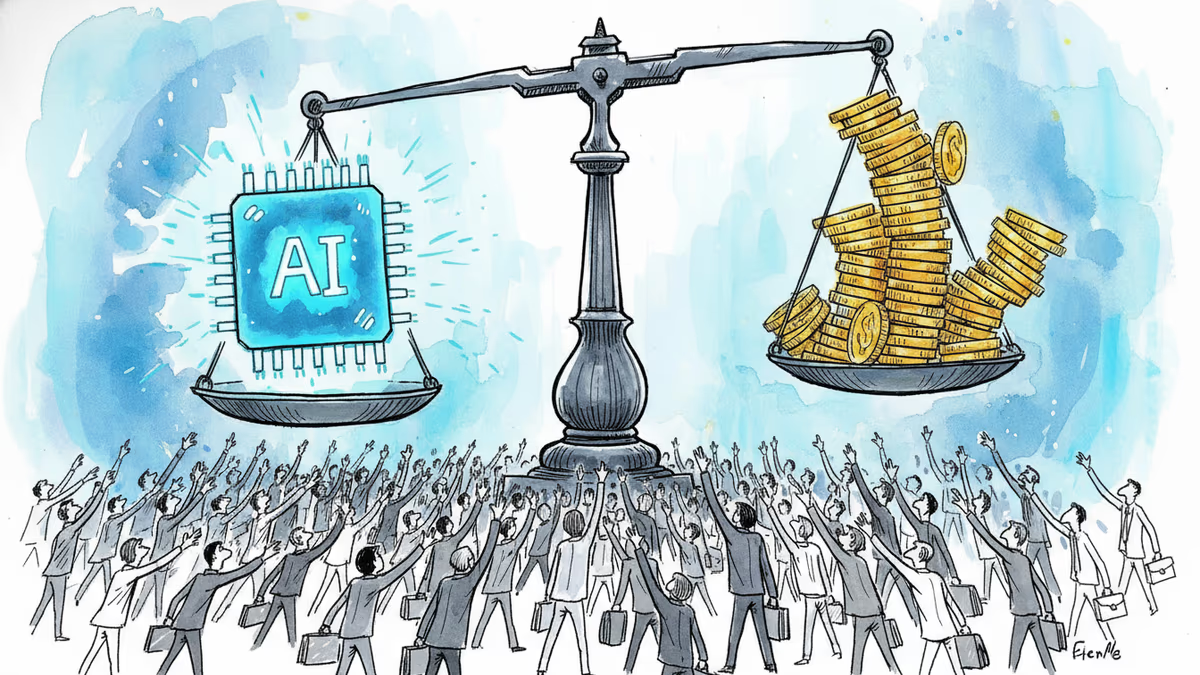

AI is reshaping how citizens know, act, and deliberate together. Researchers argue that democracy's infrastructure wasn't built for this, and that the design choices are already being made.

You researched the candidate. You read the policy summary. You signed the petition. But what if each of those steps was quietly mediated—framed, filtered, and acted upon—by an AI system that knows your anxieties better than you do?

That question sits at the center of a sharp analysis by Andrew Sorota and Josh Hendler, who lead work on AI and democracy at the Office of Eric Schmidt. Their argument: AI is not merely changing how we consume information. It is simultaneously transforming three distinct layers of democratic life—how we know things, how we act on them, and how we govern together. And the design choices shaping that transformation are already being made, mostly without public deliberation.

Three Layers, One System Failure

Sorota and Hendler call the first layer epistemic: how citizens come to know what is true. Search is already substantially AI-mediated. The next generation of AI assistants will go further—synthesizing information, framing it, and presenting it with the quiet authority of a trusted advisor. For a growing share of the population, asking an AI will become the default route to forming a view on a candidate, a ballot measure, or a public figure. Whoever shapes what these models say therefore shapes what people believe.

The second layer is agentic. Personal AI agents won't just inform decisions—they'll execute them. They will draft communications, highlight causes, lobby on a user's behalf, and help decide how to respond to a government notice. In a meaningful sense, they will begin to mediate the relationship between individuals and the institutions that govern them. The analogy to social media is instructive but incomplete: an algorithm that optimized for engagement didn't claim to represent you. An AI agent does. It speaks for you, acts on your behalf, and may earn trust precisely through that intimacy—making its potential distortions far harder to detect.

The third layer is collective. Even if every individual AI agent were well-designed and faithfully aligned with its user's interests, the interactions of millions of such agents could produce outcomes no individual wanted. Research shows that agents displaying no individual bias can still generate collective biases at scale. More fundamentally, a public sphere in which every participant has a personalized agent tuned to their existing views is not, in aggregate, a public sphere at all. It is a collection of private worlds—internally coherent, collectively inhospitable to the shared deliberation democracy requires.

What Social Media Already Taught Us

The social media precedent looms large here. Platforms needed no explicit political agenda to produce polarization and radicalization. Optimizing for engagement was sufficient. The difference with AI agents is that the feedback loop runs deeper and the trust relationship is more intimate. A platform shows you content. An agent acts on your behalf. That distinction matters enormously when things go wrong.

Early evidence of the problem already exists. Bots have been documented skewing public input processes: the comment periods and consultation mechanisms that governments use to gauge citizen sentiment. If agentic proxies flood those channels, the signal governments receive about public opinion becomes noise. Democratic responsiveness breaks down not through censorship or coercion, but through a quiet statistical distortion.

The Case for Designing Forward

Sorota and Hendler resist the declinist framing. The same technology that creates these risks, they argue, also offers tools to address long-standing democratic failures—lagging civic engagement, deepening polarization, and the chronic inability of fact-checking to achieve cross-partisan credibility.

On that last point, a recent field evaluation of AI-generated fact-check notes on X found that people across the political spectrum rated AI-written notes as more helpful than human-written ones. The paper has not yet been peer-reviewed, and replication will matter enormously. But if the finding holds, it points to something significant: AI-assisted fact-checking may be able to achieve the cross-partisan trust that human institutions have repeatedly failed to build.

Several US states and localities are already running experiments in AI-mediated democratic deliberation—using AI facilitators to help citizens find common ground at scale. The early results are cautiously encouraging. But these experiments also underscore the urgency of the identity problem: as agents become common participants in public input processes, verification of both humans and their agentic proxies must be built in from the start, not retrofitted after abuse becomes visible.

For AI companies, the ask is more demanding than it might appear. Faithful representation of a user cannot become an accessory to motivated reasoning. An agent that refuses to surface uncomfortable information, that never challenges prior beliefs, or that fails to adapt when a user's views genuinely change, is not acting in that person's interest. Building agents that are both loyal and honest—without an agenda of their own—is technically daunting in domains where users haven't explicitly stated preferences. That is an engineering problem, but also a values problem.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.
