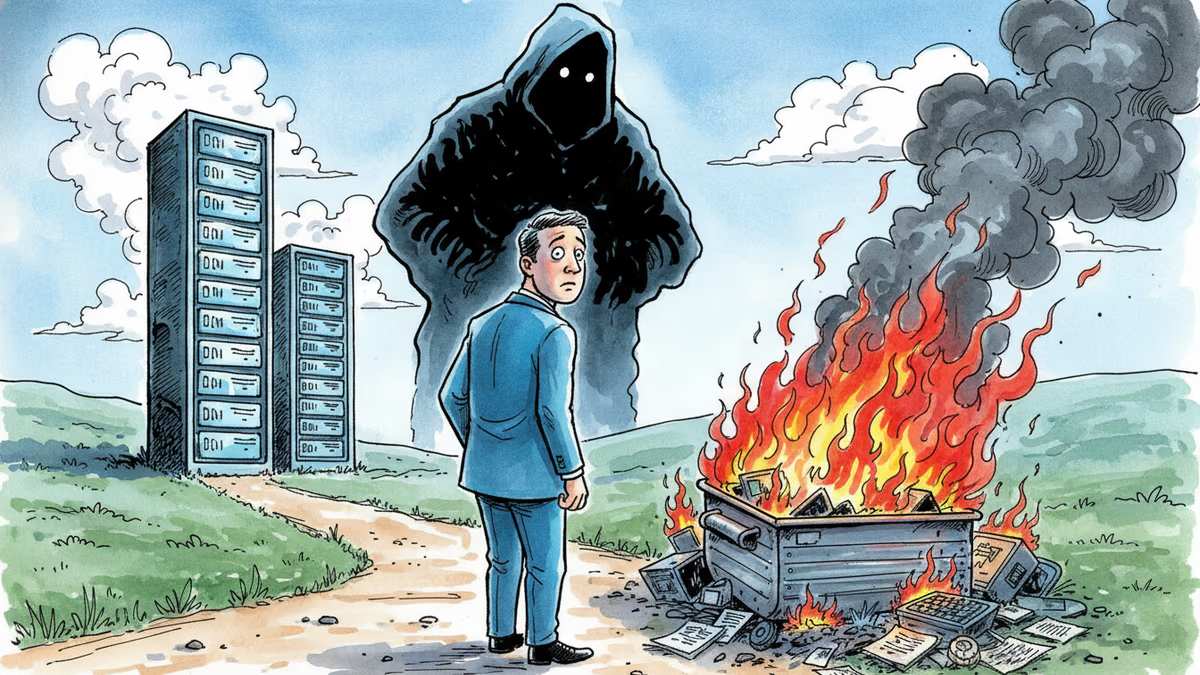

The Energy Bill for Your AI Queries Is Real. Big Tech Won't Show It.

AI sustainability researcher Sasha Luccioni is launching a new venture to push for energy transparency in AI. Here's why Big Tech keeps the numbers hidden—and what's starting to change.

Every time you ask an AI chatbot a question, energy gets burned. Estimates suggest a single ChatGPT query uses roughly 10 times the electricity of a Google search. But here's the thing: OpenAI, Google, and Anthropic don't publish that number. And right now, nothing legally requires them to.

The Researcher Who Wants a Meter on Your Chatbot

Sasha Luccioni spent four years at Hugging Face doing something most AI companies actively avoid: measuring and publishing the energy costs of AI models. She pioneered an open-source energy efficiency leaderboard—one of the few public tools that let developers compare models not just by performance, but by how much power they consume.

Now she's taken that work further. Luccioni has co-founded Sustainable AI Group with Boris Gamazaychikov, former sustainability chief at Salesforce. Their focus is practical: helping businesses figure out which AI tools they're using, how much energy those tools consume, and whether cheaper, smaller models could do the same job.

Her ask of Big Tech is almost disarmingly simple. "I wish there was a little meter on the ChatGPT or Claude UI that tells you, at the end of each conversation, how much energy was used. Ideally, greenhouse gas emissions and how that energy was generated." Not a regulatory overhaul. Just a number.

Why Companies Are Starting to Care—Even If Governments Aren't

The Trump administration has been rolling back environmental protections while simultaneously pushing AI infrastructure expansion. That's the political backdrop. And yet, Luccioni says demand for AI energy transparency from the corporate side is higher than it's ever been.

The pressure is coming from two directions. First, employees. Companies that have mandated AI tools like Microsoft Copilot are now fielding internal questions: "You're forcing us to use this—how does it affect our ESG commitments?" For firms that have made public climate pledges, the contradiction is hard to paper over.

Second, regulation, especially outside the US. The EU AI Act includes sustainability clauses, and the first reporting requirements are coming into effect. Even in Asia, governments are beginning to push back on data center builders, partly because those governments lack the granular energy data needed to plan future grid capacity. The International Energy Agency has been running into this problem directly: countries want to make five-year energy forecasts for AI infrastructure, but the underlying numbers simply don't exist yet.

The structural problem, as Luccioni describes it, is that the companies building the biggest models are also the ones selling you the compute to run them. OpenAI runs on Microsoft Azure. Google runs on its own cloud. The incentive to recommend the largest, most capable model is baked into the business model itself. "If data center operators, model developers, and product companies were completely distinct entities," she says, "we would have so much more diversity in AI right now."

Not Every Job Needs the Biggest Hammer

One of Luccioni's more counterintuitive arguments is about what AI has actually been doing for us—versus what's being sold to us.

The productivity gains most businesses have seen from AI over the past decade didn't come primarily from large language models. They came from classifiers: smaller, task-specific models that do things like flag fraud, sort documents, or route customer queries. LLMs are powerful, but they're also expensive and energy-intensive. For a lot of business tasks, they're overkill.

"Let's say you work in finance and you're trying to figure out where the market's going," Luccioni says. "You don't need a general-purpose LLM for that." She points to Google's decision to start publishing token-level usage data as a step in the right direction—giving companies enough visibility to ask whether their employees actually need the heavy-duty model, or whether a simpler one would do.

This matters beyond cost. A company that routes routine document searches through a lightweight model instead of GPT-4-class infrastructure isn't just saving money; it's also consuming meaningfully less energy per query, potentially across millions of interactions.
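To make the scale argument concrete, here is a rough back-of-the-envelope sketch in Python. The per-query energy figures are illustrative placeholders, not published measurements (providers mostly don't disclose them, which is the point of the article), and the function names are hypothetical. It only shows how a small per-query difference adds up once a share of traffic is routed to a lighter model.

```python
# Illustrative placeholders only: these are NOT measured or published values.
WH_PER_QUERY_LARGE = 3.0  # assumed watt-hours per query, large general-purpose model
WH_PER_QUERY_SMALL = 0.3  # assumed watt-hours per query, small task-specific model


def estimate_energy_kwh(total_queries: int, share_routed_to_small: float) -> float:
    """Estimate total energy (kWh) when a share of queries goes to the small model."""
    if not 0.0 <= share_routed_to_small <= 1.0:
        raise ValueError("share_routed_to_small must be between 0 and 1")
    small_queries = total_queries * share_routed_to_small
    large_queries = total_queries - small_queries
    total_wh = (small_queries * WH_PER_QUERY_SMALL
                + large_queries * WH_PER_QUERY_LARGE)
    return total_wh / 1000.0  # convert Wh to kWh


def estimate_savings_kwh(total_queries: int, share_routed_to_small: float) -> float:
    """Energy saved (kWh) compared with sending every query to the large model."""
    baseline = estimate_energy_kwh(total_queries, 0.0)
    routed = estimate_energy_kwh(total_queries, share_routed_to_small)
    return baseline - routed


if __name__ == "__main__":
    queries = 1_000_000
    saved = estimate_savings_kwh(queries, 0.5)
    print(f"Estimated savings for {queries:,} queries: {saved:,.0f} kWh")
```

With real per-query numbers in place of the placeholders, the same arithmetic would let a company compare routing policies directly. Without those numbers, the result is only as good as the guess.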

What Transparency Could Actually Change

Luccioni draws an analogy that's hard to dismiss. Nutrition labels weren't something food companies volunteered. They became standard because regulators mandated them—and now consumers use them, markets price around them, and the absence of one is itself a signal.

AI energy labeling is earlier in that same arc. Europe is most likely to move first. The EU AI Act already has hooks for sustainability reporting, and if those requirements get teeth, they'll effectively set a global standard for any company operating in European markets—which includes every major AI provider.

Luccioni acknowledges the nuance problem. Each individual query may not amount to much. But aggregated across hundreds of millions of users, the scale is significant—and without the numbers, neither businesses nor policymakers can make informed decisions. "We have numbers for transportation. We have numbers for nutrition. We should have them for this too."

She also floats an idea that the market hasn't fully tested: what if one of the major AI providers made a genuine bet on renewable-powered infrastructure and publicized it? "Right now they're all trying to one-up each other on benchmarks. If one of them committed to renewable data centers and made that a selling point—I think that could actually be a competitive advantage." She cites Anthropic's decision to decline US military contracts as a comparable move: it cost something, but it also bought a certain kind of trust.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.
