Why OpenAI Is Making Its Play for Scientists Now

OpenAI launches a dedicated science team three years after ChatGPT's debut. Here is what's driving this strategic shift and what it means for the future of scientific research.

Three years after ChatGPT changed everything, OpenAI has found its next target: scientists.

The company that upended work, education, and daily life is now making an explicit play for the research community. Last October, OpenAI announced the launch of OpenAI for Science, a dedicated team that explores how large language models can support scientists and tailors the company's tools to their needs.

The Strategic Timing

Why now? Kevin Weil, the OpenAI vice president leading the new team, frames it as a natural evolution. "Our technology has already transformed everyday activities," he explained in an exclusive interview. "Now it's time to help push the boundaries of human knowledge itself."

But the timing reveals something deeper. While OpenAI dominated consumer AI, competitors have been quietly building scientific credibility. Google's DeepMind revolutionized protein structure prediction with AlphaFold. Microsoft has been courting researchers through Azure partnerships. The scientific research market, worth billions in grant funding and institutional contracts, remains largely unconquered territory.

For OpenAI, scientists represent more than just another user base. They're validators. A breakthrough discovery powered by GPT models would cement the company's position as the leader in transformative AI, not just conversational chatbots.

The Promise and the Problem

AI-assisted research offers tantalizing possibilities. Imagine analyzing thousands of papers in minutes, generating novel hypotheses, or designing experiments that humans might never consider. For cash-strapped universities and overworked researchers, AI tools could democratize access to research capabilities once reserved for well-funded labs.

Early adopters are already seeing results. Researchers use large language models to parse complex datasets, generate literature reviews, and even draft grant proposals. The technology promises to accelerate the pace of discovery across fields from drug development to climate science.

But scientists are notoriously skeptical—and for good reason. Research built on AI-generated insights raises fundamental questions about reproducibility and verification. How do you peer-review a hypothesis generated by a black-box algorithm? What happens when AI "hallucinations" creep into scientific literature?

The Bigger Stakes

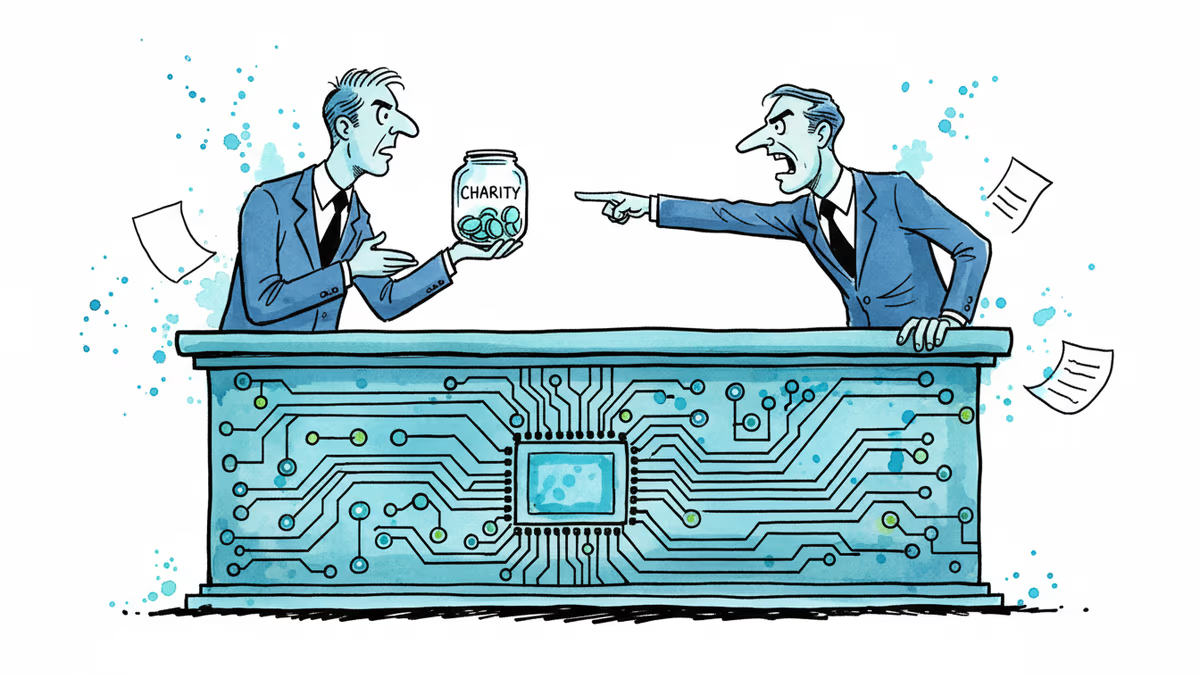

Beyond individual research projects, OpenAI's science push touches on larger questions about who controls knowledge production. If a handful of tech companies provide the tools that shape scientific inquiry, they effectively influence which questions get asked and how answers are found.

This isn't just theoretical. OpenAI's models are trained on existing research, potentially embedding current biases and blind spots into future discoveries. The company's commercial interests—and its need to protect proprietary algorithms—could conflict with science's foundational principles of openness and transparency.

Regulators are taking notice. The EU is already investigating whether AI companies should be required to disclose training data sources. In the US, the National Science Foundation is developing guidelines for AI use in federally funded research.

The Research Community's Dilemma

Scientists find themselves caught between opportunity and caution. Those who embrace AI tools early might gain competitive advantages in publishing and funding. But they also risk becoming dependent on systems they don't fully understand or control.

The academic reward system compounds this tension. Publish-or-perish pressure incentivizes speed, making AI assistance attractive. But the scientific method demands rigor and skepticism—qualities that don't always align with the "move fast and break things" ethos of Silicon Valley.

Some research institutions are developing their own guidelines. MIT now requires disclosure of AI assistance in research papers. Stanford is training faculty on responsible AI use. But these efforts remain fragmented and voluntary.
