Claude Code Ralph Wiggum Plugin: The Rise of Autonomous AI Night Shifts in 2026

Explore how the Claude Code Ralph Wiggum plugin is revolutionizing AI development in 2026. Learn about the 'Stop Hook' mechanism and how it enables autonomous coding night shifts.

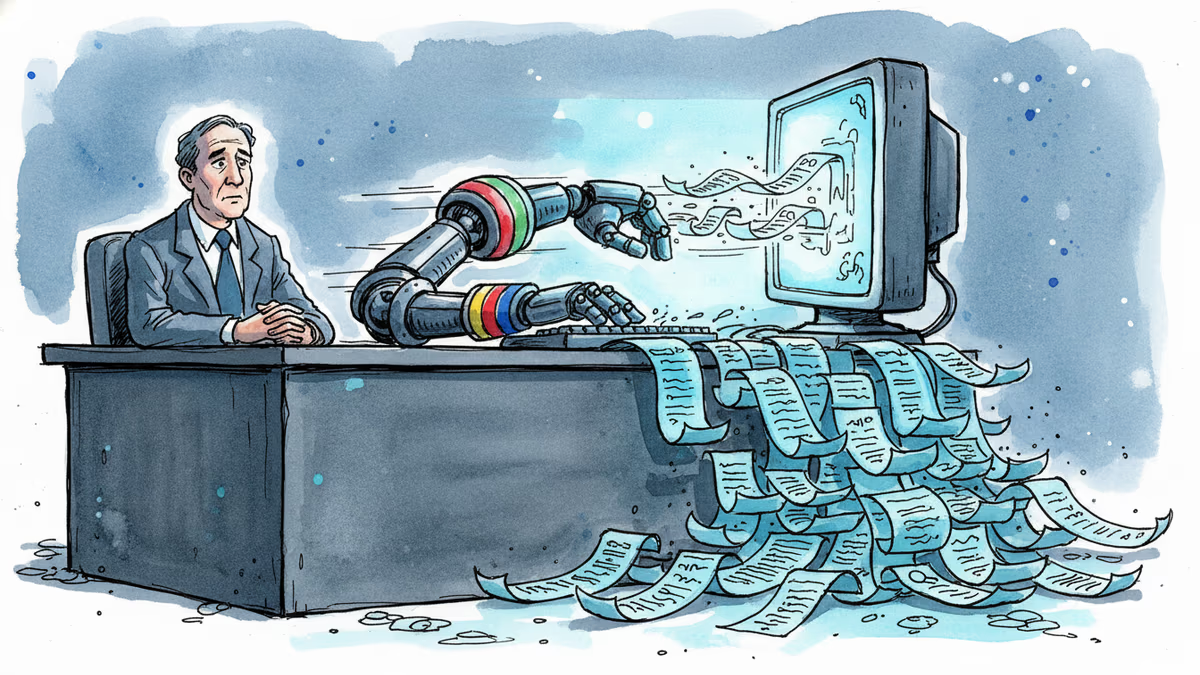

While you were sleeping, an AI agent just finished a $50,000 contract for less than $300 in API costs. This isn't a futurist's dream—it's the reality of the Ralph Wiggum plugin for Claude Code. It's shifting the developer experience from constant hand-holding to managing autonomous 'night shifts' that don't quit until the job is done.

The Engineering Behind Claude Code Ralph Wiggum Plugin

The tool's DNA comes from a surprisingly simple concept: brute force persistence. Originally a 5-line bash script created by Geoffrey Huntley in mid-2025, the 'Ralph' methodology forces the AI to confront its own failures. Instead of stopping at an error, the system pipes the stack traces and hallucinations back into the input stream, creating what Huntley calls a 'contextual pressure cooker'.
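The feedback loop described above can be sketched in a few lines. This is a minimal illustration, not Huntley's original bash script: `ralph_loop`, the `Agent` signature, and the `make_flaky_agent` stand-in are all hypothetical names introduced here, and the iteration cap is an added assumption so the sketch always terminates.

```python
from typing import Callable, Tuple

# Hypothetical signature: an agent takes a prompt and returns (succeeded, output).
Agent = Callable[[str], Tuple[bool, str]]


def ralph_loop(task: str, agent: Agent, max_iterations: int = 10) -> Tuple[bool, int]:
    """Re-run `agent` on `task`, feeding each failure's output into the next prompt.

    Returns (succeeded, number_of_attempts_made).
    """
    prompt = task
    for iteration in range(1, max_iterations + 1):
        succeeded, output = agent(prompt)
        if succeeded:
            return True, iteration
        # Pipe the failure (stack trace, wrong output, ...) back into the input.
        prompt = f"{task}\n\nAttempt {iteration} failed with:\n{output}"
    return False, max_iterations


def make_flaky_agent(failures_before_success: int) -> Agent:
    """Stand-in agent for demonstration: fails a fixed number of times, then succeeds."""
    calls = {"count": 0}

    def agent(prompt: str) -> Tuple[bool, str]:
        calls["count"] += 1
        if calls["count"] <= failures_before_success:
            return False, f"Traceback: simulated error #{calls['count']}"
        return True, "done"

    return agent


if __name__ == "__main__":
    print(ralph_loop("Fix the failing build", make_flaky_agent(2)))
```

In a real setup the `agent` callable would wrap a call to the coding assistant; here it is simulated so the loop's behavior can be checked in isolation.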

By late 2025, Anthropic formalized this into an official plugin. The technical core is the Stop Hook. When Claude attempts to exit, the hook intercepts the attempt and checks for a predefined 'Completion Promise', such as passing all unit tests. If the promise isn't met, the hook injects the failure data and forces another iteration.
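The decision the Stop Hook makes can be sketched as a small pure function. This is an illustration under stated assumptions, not the plugin's actual code: `stop_hook_decision`, the `PromiseCheck` callable, the `"allow"`/`"block"` values, and the iteration cap are hypothetical and do not reflect a confirmed schema.

```python
import json
from typing import Callable, Dict, Tuple

# Hypothetical check: returns (promise_met, failure_details),
# e.g. the result of running a test suite.
PromiseCheck = Callable[[], Tuple[bool, str]]


def stop_hook_decision(check: PromiseCheck, iteration: int, max_iterations: int) -> Dict[str, str]:
    """Decide whether an exit attempt should be allowed or blocked."""
    promise_met, details = check()
    if promise_met:
        return {"decision": "allow", "reason": "completion promise met"}
    if iteration >= max_iterations:
        # Assumed safety valve so a job that can never pass does not loop forever.
        return {"decision": "allow", "reason": "iteration limit reached"}
    # Block the exit and hand the failure data back for the next iteration.
    return {"decision": "block", "reason": f"Completion promise not met:\n{details}"}


if __name__ == "__main__":
    def failing_tests() -> Tuple[bool, str]:
        return False, "2 tests failed: test_login, test_checkout"

    print(json.dumps(stop_hook_decision(failing_tests, iteration=1, max_iterations=20)))
```

Keeping the decision separate from whatever runs the check makes the rule easy to test: the hook blocks only while the promise is unmet and the budget is not exhausted.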

Real-World Impact and Efficiency Gains

The productivity gains are reaching legendary status among the dev community. One user on X reported an autonomous 14-hour session that successfully upgraded a stale codebase from React v16 to v19 without any human input. During a Y Combinator hackathon, the tool generated 6 full repositories overnight, essentially providing the output of a small engineering team for the price of a few cups of coffee.
