Claude Code's Auto Mode Wants to Be Your AI Safety Net

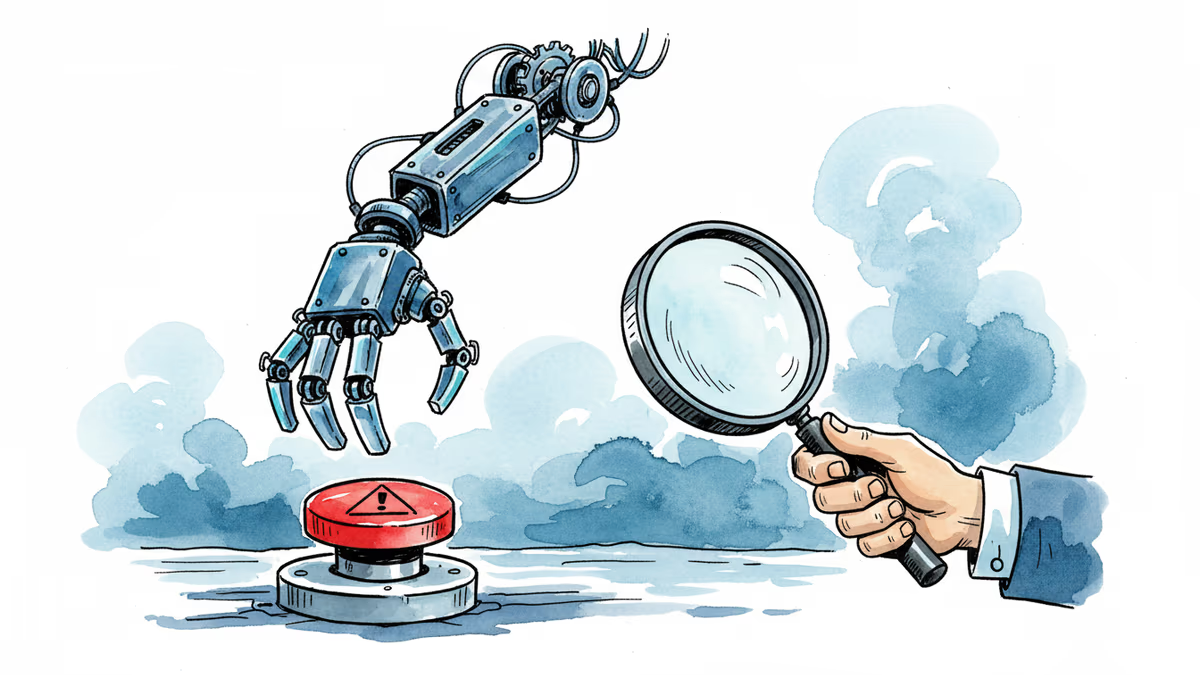

Anthropic's new Auto Mode for Claude Code lets AI flag and block risky actions before they run. It's a clever fix for agentic AI's biggest problem — but who decides what "risky" means?

The Problem With Giving AI the Keys

Every developer who's used an agentic AI tool has felt it — that moment of hesitation before hitting enter. Will it just edit the file, or will it delete the whole directory?Anthropic is betting that hesitation is costing developers too much time, and its answer is Auto Mode for Claude Code.

Launched this week, Auto Mode sits between two uncomfortable extremes: approving every single AI action manually (tedious) or handing the model broad permissions and hoping for the best (dangerous). The feature promises to flag and block potentially risky actions before they execute — things like deleting files, exfiltrating sensitive data, or running malicious instructions hidden inside code — and gives the agent a chance to find a safer path instead.

On paper, it's elegant. In practice, it raises a question that goes well beyond developer tooling.

What Auto Mode Actually Does

Claude Code is already one of the more capable agentic coding assistants on the market. It can write, edit, and execute code independently, connect to external services, and carry out multi-step tasks with minimal hand-holding. That's exactly what makes it useful for the growing wave of vibe coders — developers (and non-developers) who direct AI at a high level and let it handle the details.

But autonomy cuts both ways. The same capability that lets Claude Code spin up a deployment script can, under the wrong conditions, execute a command that wipes a working directory or leaks credentials. Auto Mode is designed to intercept those moments. According to Anthropic, the system evaluates actions before they run, identifies those that cross a risk threshold, blocks them, and surfaces the issue to the user.

The company frames this as a middle path — safer than full autonomy, less friction than constant approval prompts.

Who Trusts This, and Who Doesn't

The developer community's reaction breaks along predictable lines. Productivity-first engineers see Auto Mode as a genuine quality-of-life improvement. Complex, multi-step tasks can run with fewer interruptions, and there's a backstop against the most catastrophic mistakes. For teams already using Claude Code in production workflows, this reduces one of the biggest operational risks.

Security-focused developers are asking harder questions. Who defines what counts as "risky"?Anthropic sets those parameters, not the user. A deployment script that looks dangerous in isolation might be perfectly routine in context. Conversely, a subtle data exfiltration buried in a seemingly innocuous function call might not trip the filter at all. The opacity of the risk-detection logic is, itself, a risk.

There's also the question of adversarial inputs. Claude Code operates in environments where it might process untrusted content — think code repositories, user-generated inputs, third-party APIs. Prompt injection attacks, where malicious instructions are hidden inside data the model processes, are a known attack vector for agentic AI. Auto Mode is partly designed to catch these. Whether it catches them reliably, and under what conditions it fails, isn't yet publicly documented.

The Bigger Shift: Delegating Judgment

Auto Mode isn't just a feature — it's a design philosophy. And that philosophy is spreading fast.

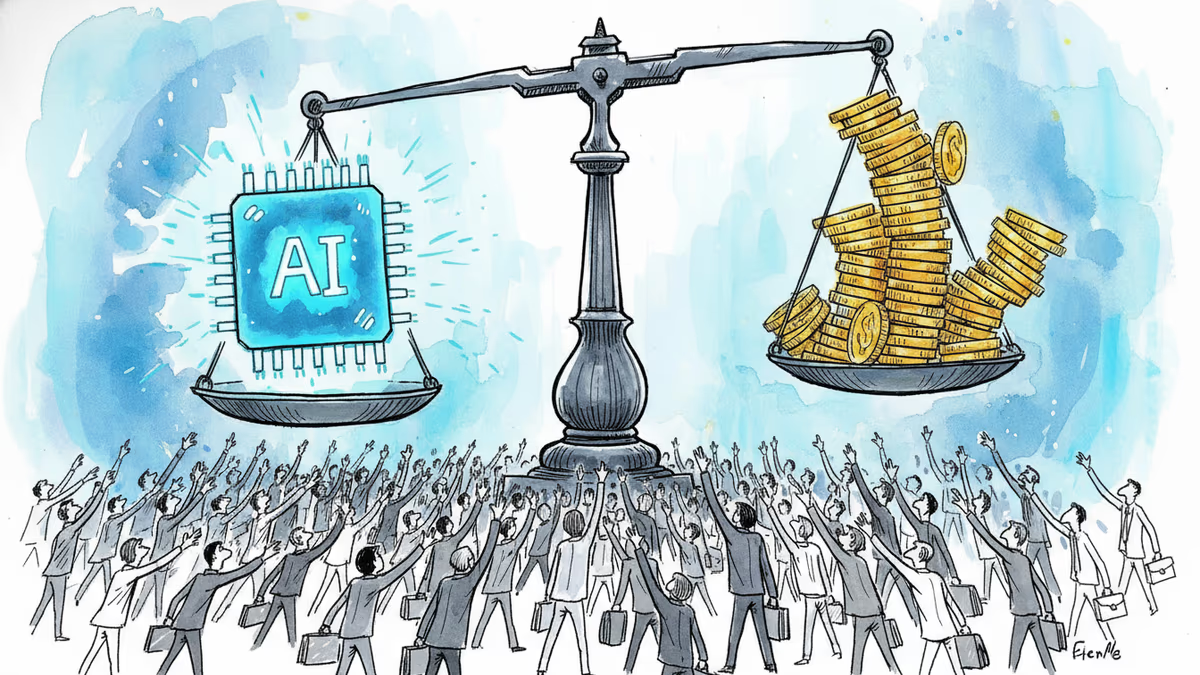

Google, Microsoft, and OpenAI are all building similar guardrail systems into their agentic products. The underlying assumption is the same: as AI agents take on more consequential actions, the agents themselves need to carry some of the safety logic. You can't rely on users to catch every dangerous command in a fast-moving workflow.

But this creates a structural shift in how accountability works. When a human developer makes a bad call, the chain of responsibility is clear. When an AI agent makes a bad call that its own safety filter didn't catch, the picture gets murkier. Is the liability with Anthropic? The enterprise IT team that deployed Claude Code? The individual developer who enabled Auto Mode?

Regulators in the EU, already grappling with the AI Act's provisions on high-risk AI systems, will eventually have to answer that question. So will corporate legal teams evaluating whether to let agentic AI tools run in sensitive environments.

Authors

Related Articles

OpenAI's new Daybreak initiative uses the Codex AI agent to find and patch security vulnerabilities before attackers do—putting it in direct competition with Anthropic's secretive Claude Mythos.

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

Thoughts

Share your thoughts on this article

Sign in to join the conversation