America's Top Cybersecurity Chief Lost His Job Over ChatGPT

The head of CISA was replaced just one month after reports surfaced that he had uploaded sensitive documents to ChatGPT, exposing a critical blind spot in government AI use.

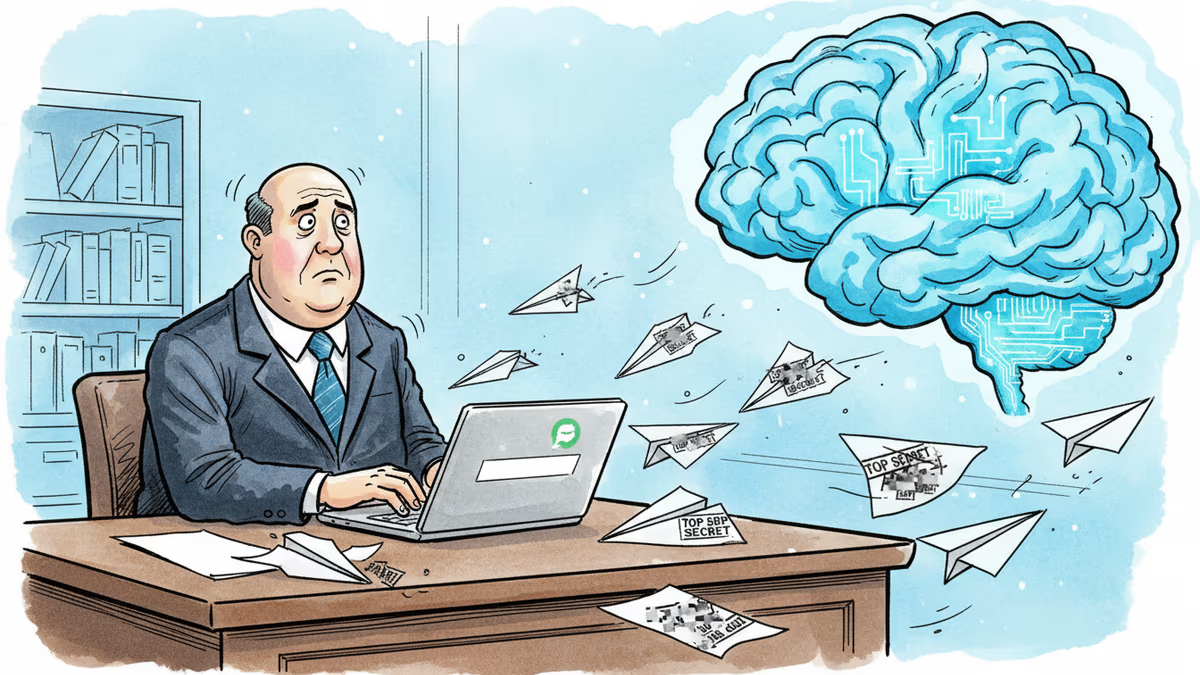

The person responsible for America's cybersecurity just lost his post over a cybersecurity mistake. Madhu Gottumukkala, acting director of the Cybersecurity and Infrastructure Security Agency (CISA), was replaced one month after reports emerged that he had uploaded sensitive government documents to ChatGPT.

The Ultimate Irony

Gottumukkala took charge of CISA in May 2025—barely nine months ago. His job? Protecting America's digital infrastructure from cyber threats. His downfall? Using the very AI tools that security experts warn about.

Nick Andersen, CISA's executive assistant director for cybersecurity, will now serve as acting director. Meanwhile, Gottumukkala gets reassigned to "director of strategic implementation" at the Department of Homeland Security—a title that sounds like corporate exile.

The details remain murky. We don't know what documents he uploaded, whether he had special permission, or whether the information was classified. But the swift leadership change speaks volumes.

The Shadow AI Problem

This incident exposes a dirty secret in government: officials are using commercial AI tools despite official restrictions. Federal agencies have policies against using services like ChatGPT for sensitive work, but enforcement is spotty and temptation is high.

Why? Because AI works. It writes better emails, summarizes lengthy reports, and speeds up routine tasks. A mid-level bureaucrat facing a deadline might think, "What's the harm in getting help with this draft?"

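The harm, as Gottumukkala discovered, can be career-ending. When you feed documents to ChatGPT, you are sharing them with OpenAI: they leave government systems and sit on the company's servers. Depending on the account type and settings, that data might be retained, used for training, or exposed to bad actors if the account or the service is compromised.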

The Productivity vs. Security Dilemma

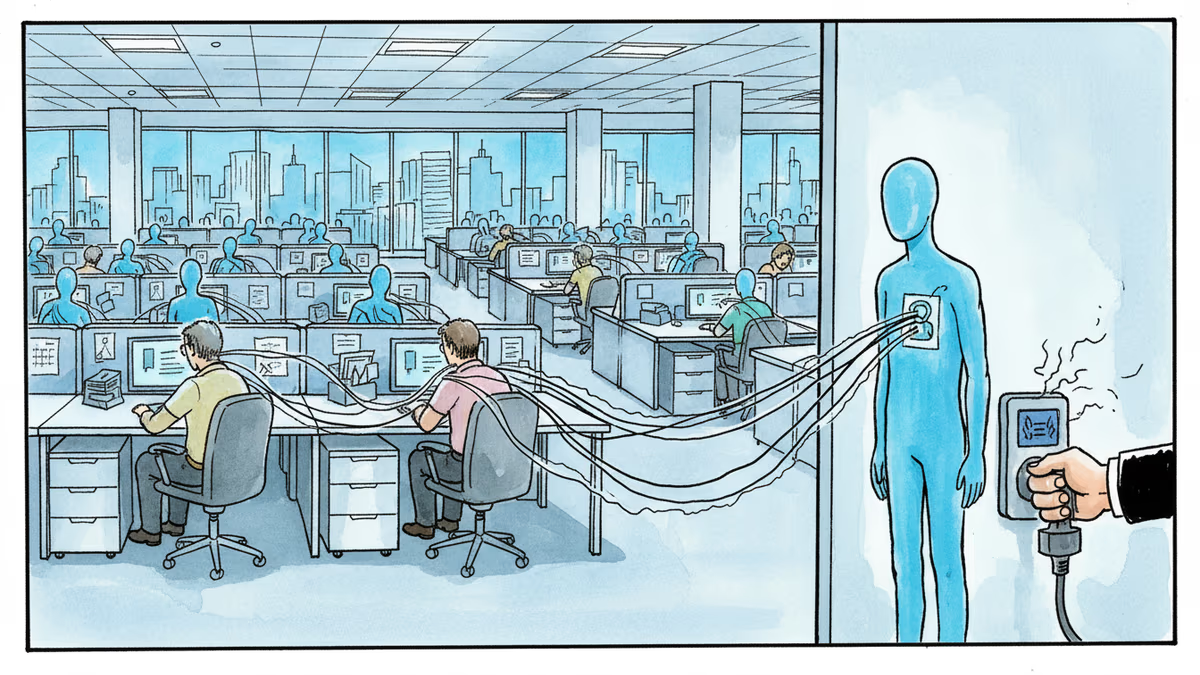

Government workers face an impossible choice: stay productive or stay secure. Official AI tools are nonexistent, slow to deploy, or inferior to commercial alternatives. So they go rogue.

This creates a new category of insider threat—not malicious actors, but well-meaning employees who prioritize getting work done over following security protocols. It's the digital equivalent of propping open a fire door because the keycard reader is broken.

Private companies face similar challenges, but they can move faster to implement secure AI solutions. Government bureaucracy moves at glacial speed, leaving workers to improvise.

Authors

Related Articles

UK Visa Portal, a private immigration service mistaken for an official government site, has been exposing passport scans and selfies of over 100,000 applicants. The breach remains unpatched.

Okta CEO Todd McKinnon on why AI agents need identity management, the SaaSpocalypse threat, and why the kill switch might be the most important button in enterprise tech.

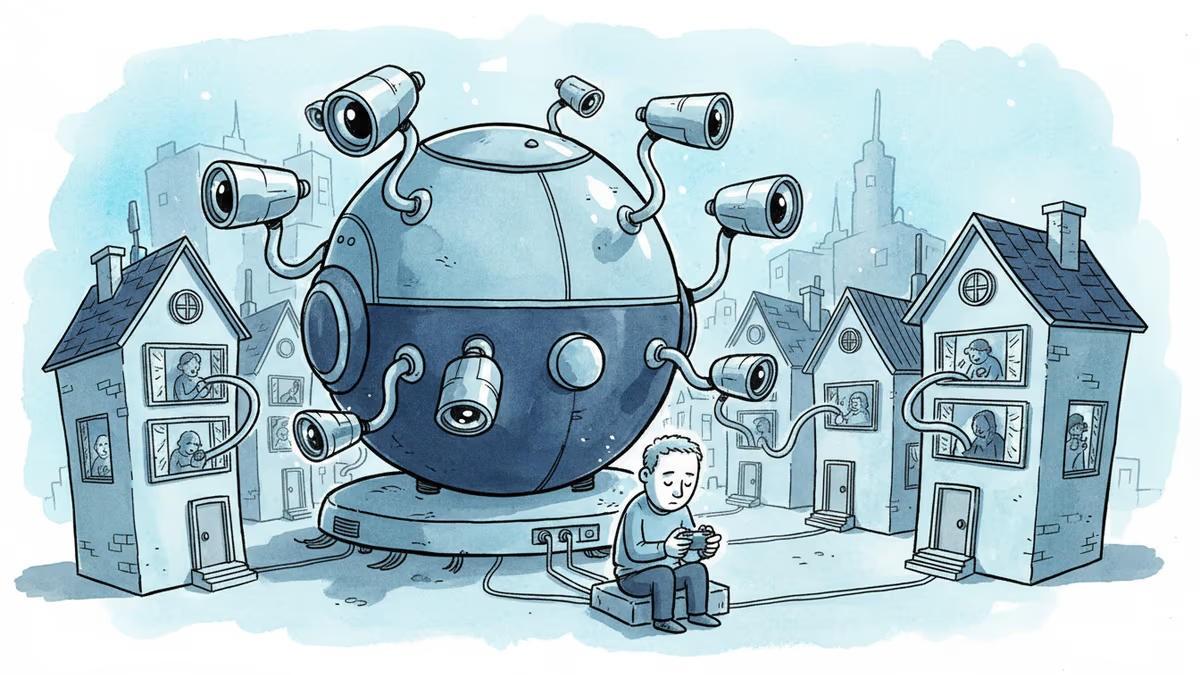

Iran and Israel are hacking civilian security cameras for military reconnaissance. How consumer surveillance devices became weapons of war.

A security researcher discovered he could access 7,000 DJI robot vacuums and peek into strangers' homes. This Valentine's Day revelation exposes the hidden privacy risks of our smart home obsession.

Thoughts

Share your thoughts on this article

Sign in to join the conversation