Wikipedia’s AI Red Flags Turn into a Guide: The Claude Humanizer Plugin

Siqi Chen has released 'Humanizer,' an open-source plugin for Anthropic's Claude Code that draws on Wikipedia's catalog of AI-writing tells to make Claude's output sound more human and harder to detect.

Is your AI writing too robotic? Tech entrepreneur Siqi Chen has just released an open-source tool that uses Wikipedia's own 'cheat sheet' to hide chatbot fingerprints. Called Humanizer, it is a plugin for Anthropic's Claude Code assistant that instructs the AI to avoid the predictable patterns usually associated with large language models.

How Humanizer Uses Wikipedia’s Red Flags

The plugin works by feeding Claude a specific list of 24 language and formatting patterns. These aren't random guesses; they are the markers Wikipedia editors use to flag AI-generated content. According to Chen, telling an LLM exactly which behaviors to avoid is a highly effective way to make its writing sound more authentic. As of Monday, the plugin had gained more than 1,600 stars on GitHub.
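The mechanism Chen describes amounts to prompt construction: a list of known patterns is turned into explicit instructions about what not to do. The Python below is a minimal sketch of that idea, not Humanizer's actual code. The function name `build_avoid_prompt` and the three sample patterns are hypothetical; the samples paraphrase the kind of tells Wikipedia's guide describes and do not reproduce the plugin's list of 24.

```python
# Hypothetical sketch: turn a list of "AI tell" patterns into avoid-instructions.
# These sample patterns are illustrative only, not the plugin's actual list of 24.
EXAMPLE_PATTERNS = [
    'Inflated significance, e.g. "stands as a testament to"',
    'Overused vocabulary such as "delve" and "tapestry"',
    "Reflexive groupings of three adjectives or examples",
]


def build_avoid_prompt(patterns: list[str]) -> str:
    """Join patterns into a numbered list of behaviors the model should avoid."""
    lines = ["When rewriting the text, avoid the following patterns:"]
    for i, pattern in enumerate(patterns, start=1):
        lines.append(f"{i}. {pattern}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_avoid_prompt(EXAMPLE_PATTERNS))
```

Calling `build_avoid_prompt(EXAMPLE_PATTERNS)` produces a numbered instruction block that could be placed ahead of the text to be rewritten. Listing behaviors to avoid, rather than describing a target style, mirrors the approach Chen credits for the plugin's effectiveness.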

The Origins: WikiProject AI Cleanup

The source material for this 'humanizing' prompt comes from WikiProject AI Cleanup, a volunteer group founded by French editor Ilyas Lebleu in late 2023. Its editors have tagged more than 500 articles for review, and in August 2025 they formalized their findings into a list of AI 'giveaways.' What was intended as a shield for the encyclopedia's integrity has now become a sword for those looking to bypass detection.

Authors

Related Articles

A small but growing group of developers has gone all-in on AI coding agents like Claude Code and OpenClaw. History suggests the rest of us won't be far behind.

OpenAI's new Daybreak initiative uses the Codex AI agent to find and patch security vulnerabilities before attackers do—putting it in direct competition with Anthropic's secretive Claude Mythos.

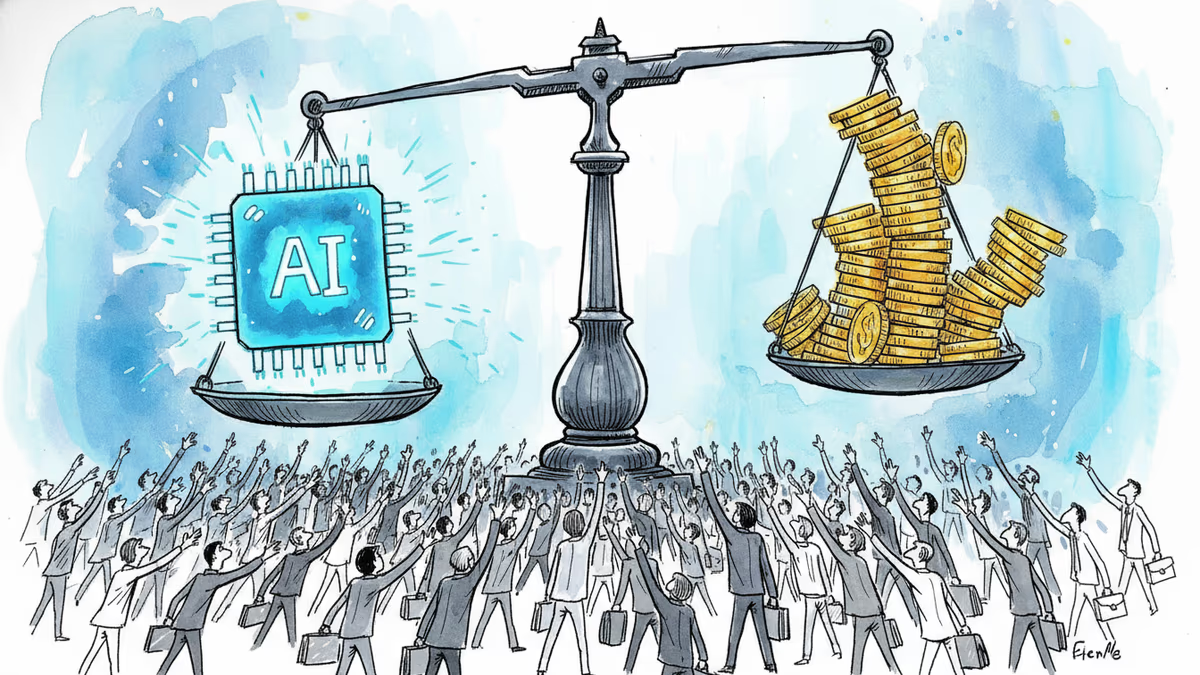

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

Thoughts

Share your thoughts on this article

Sign in to join the conversation