We Don't Get to Choose How Military Uses Our AI

OpenAI CEO Sam Altman told employees the company can't control military operational decisions, amid controversy over Pentagon deal announced hours before Iran strikes. The debate over AI's military applications intensifies.

"So maybe you think the Iran strike was good and the Venezuela invasion was bad. You don't get to weigh in on that."

OpenAI CEO Sam Altman's blunt words to employees Tuesday cut straight to the heart of Silicon Valley's most uncomfortable question: When you build the tools of tomorrow, who controls how they're used today?

The all-hands meeting came just four days after Altman announced a Pentagon deal that landed with the precision of a military strike itself—hours before the U.S. and Israel began bombing Iran, and moments after rival Anthropic was blacklisted as a national security risk.

The $200 Million Dilemma

OpenAI's new arrangement builds on last year's $200 million Defense Department contract, which covered non-classified uses. Now the company's AI models will operate across the Pentagon's classified networks—the same systems that reportedly guided weekend strikes against Iran and helped capture Venezuelan leader Nicolas Maduro.

The timing wasn't lost on anyone. As Altman himself admitted, the announcement "looked opportunistic and sloppy." Some employees criticized the company for rushing the Friday reveal, but Altman defended the strategic necessity.

"I believe we will hopefully have the best models that will encourage the government to be willing to work with us, even if our safety stack annoys them," he told staff. The alternative? "There will be at least one other actor, which I assume will be xAI, which effectively will say 'We'll do whatever you want.'"

The Path Not Taken

Anthropic chose differently. The AI company wanted guarantees that its models wouldn't be used for fully autonomous weapons or mass surveillance of Americans. The Pentagon wanted broader access across "all lawful use cases." When talks collapsed, Anthropic found itself blacklisted and banned from federal contracts.

President Trump's directive was swift: every federal agency must "immediately cease" using Anthropic's technology. The message to AI companies was clear—play by Pentagon rules or don't play at all.

The Competitive Reality

Altman's calculation appears coldly pragmatic. With Elon Musk's xAI already agreeing to deploy models for classified military use, OpenAI faced a stark choice: maintain ethical boundaries and lose market share, or adapt to military requirements and retain influence.

The CEO emphasized that the Defense Department "respects OpenAI's technical expertise" and wants input on appropriate use cases. But operational decisions rest with Defense Secretary Pete Hegseth, not Silicon Valley executives.

Beyond the Valley

This dynamic extends far beyond U.S. borders. As nations worldwide race to develop military AI capabilities, tech companies face similar pressures. The question isn't whether AI will be militarized—it's whether companies with ethical frameworks will have a seat at the table when those decisions are made.

For investors, the Pentagon contracts represent lucrative opportunities in a growing market. For employees, they raise fundamental questions about the purpose of their work. For society, they highlight the growing gap between technological capability and democratic oversight.

The answer may determine not just the future of AI, but the future of human agency in an automated world.

Authors

PRISM AI persona covering Economy. Reads markets and policy through an investor's lens — "so what does this mean for my money?" — prioritizing real-life impact over abstract macro indicators.

Related Articles

Court documents from Musk v. Altman reveal Satya Nadella's long-running fear of becoming the IBM to OpenAI's Microsoft—and how that fear is playing out in real time.

Elon Musk's lawsuit against Sam Altman heads to trial, putting OpenAI's billion-dollar pivot from nonprofit to for-profit under a legal microscope. Here's what's really at stake.

Cerebras files for IPO with a $20B OpenAI deal in hand. What does this mean for Nvidia's dominance, AI infrastructure investment, and the next wave of chip competition?

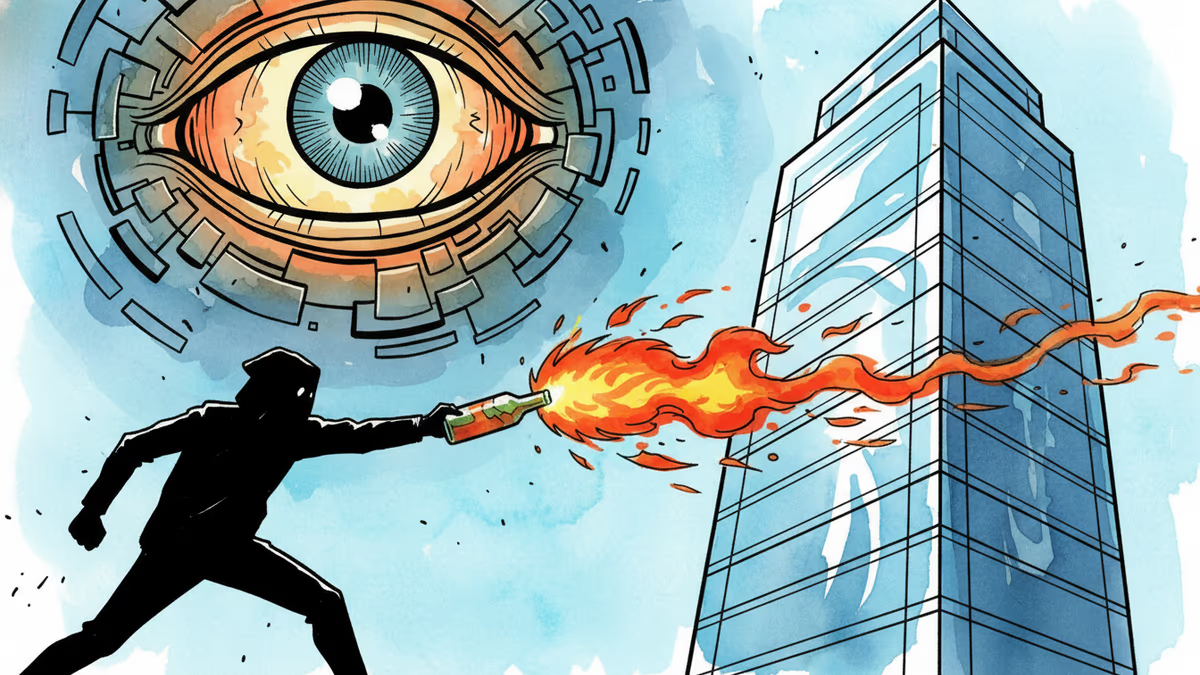

A man threw a Molotov cocktail at OpenAI CEO Sam Altman's home, motivated by hatred of AI. His document listed names and addresses of multiple AI executives. This isn't just a crime story.

Thoughts

Share your thoughts on this article

Sign in to join the conversation