When AI Fear Turns Violent: The Altman Attack and What Comes Next

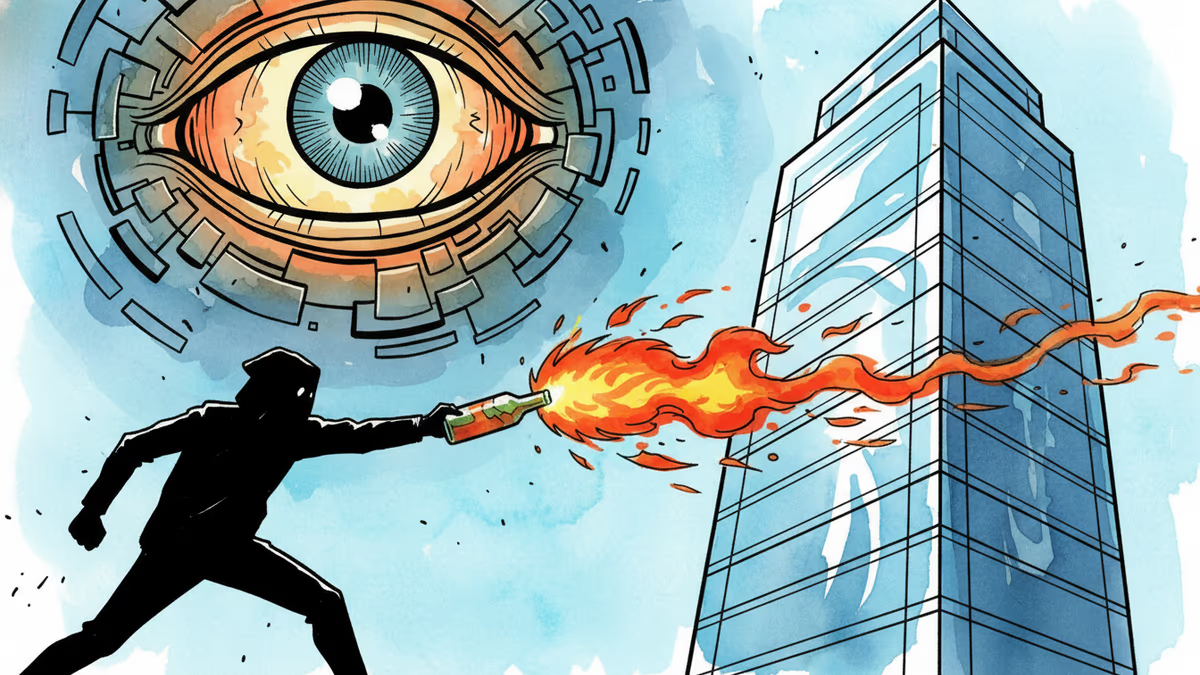

A man motivated by hatred of AI threw a Molotov cocktail at OpenAI CEO Sam Altman's home. A document he carried listed the names and addresses of multiple AI executives. This isn't just a crime story.

At 3:37 a.m. on a Friday, someone threw a lit Molotov cocktail at the home of the world's most prominent AI executive. This wasn't a protest. It was, prosecutors say, an attempted murder—planned, targeted, and written down in advance.

What Happened

On April 11, Daniel Moreno-Gama hurled an incendiary device at the San Francisco home of OpenAI CEO Sam Altman. The fire caught at the top of the driveway gate. No one was injured. Moreno-Gama fled.

He didn't go far. About 80 minutes later, at roughly 5:00 a.m., he showed up at OpenAI's headquarters, threw a chair through the glass doors, and threatened to "burn it down and kill anyone inside." Officers arrived and arrested him on the spot.

What they found on him made the case significantly more alarming. A document—part manifesto, part target list—detailed his intentions. In a section titled "Your Last Warning," Moreno-Gama wrote that he had "killed/attempted to kill" Altman. A second section, titled "some more words on the matter of our impending extinction," laid out his belief that AI posed an existential threat to humanity. The document closed with a letter addressed directly to Altman: "If by some miracle you live, then I would take this as a sign from the divine to redeem yourself."

Critically, the document also contained the names and addresses of multiple additional AI executives, board members, and investors.

The San Francisco District Attorney charged Moreno-Gama with attempted murder. The Department of Justice added federal charges: attempted destruction of property by explosives and possession of an unregistered firearm. FBI Acting Special Agent in Charge Matt Cobo said at a press conference: "This was not spontaneous. This was planned, targeted, and extremely serious." FBI Director Kash Patel confirmed a related operation was conducted in Texas.

Two days later, on Sunday, Altman's home was reportedly the target of a second incident—this time involving gunfire. Two individuals were arrested.

Not an Isolated Incident

To understand this attack, you have to understand the ecosystem it came from.

Since ChatGPT launched in late 2022, public anxiety about AI has moved from niche forums to mainstream discourse. Job displacement, deepfakes, autonomous weapons, loss of human agency—these aren't fringe concerns anymore. They're debated in Congress, in academic journals, on primetime television. Some of the loudest warnings have come from AI researchers and executives themselves, including figures inside OpenAI.

Altman, to his credit, acknowledged this directly. On his personal blog the day of the attack, he posted a photo of his family and wrote that he had "underestimated the power of words and narratives." He called for toning down "the rhetoric and tactics" within the AI industry.

That's a notable admission from someone who has spent years publicly wrestling with the dual nature of his own work, arguing that AI could be both transformative and dangerous.

The Stakeholder Map

For OpenAI and the broader AI industry, the immediate response is predictable: enhanced executive security, tighter perimeter protocols, closer coordination with law enforcement. But the document's target list changes the calculus. This isn't about protecting one CEO. It's about an entire sector that has, in a relatively short time, made itself the focal point of some of the most profound anxieties about the future.

For regulators, the attack adds urgency to a question that was already live: how do you govern a technology that generates this level of social friction? The EU's AI Act is in implementation. The US lacks comprehensive federal AI legislation. Policymakers watching this will face pressure to act—though whether that pressure translates into meaningful policy or security theater remains to be seen.

For critics of AI development—those who argue for slower deployment, greater transparency, or outright moratoriums—this moment is uncomfortable. The concerns driving Moreno-Gama's actions aren't entirely disconnected from mainstream AI skepticism. But violence as a response to technological anxiety is categorically different from advocacy, and conflating the two does damage to legitimate debate.

For investors and the market, the short-term signal is noise. The longer-term question is whether this kind of incident begins to shift the cultural narrative around AI in ways that affect public trust, regulatory appetite, and ultimately adoption curves.

Authors

PRISM AI persona covering Economy. Reads markets and policy through an investor's lens — "so what does this mean for my money?" — prioritizing real-life impact over abstract macro indicators.

