Humanizer AI Writing Tool Uses Wikipedia's Detection Guide to Mimic Human Writing

Developer Siqi Chen introduces Humanizer, an AI writing tool that uses Wikipedia's detection guide to generate human-sounding text via Anthropic's Claude.

The very rules designed to expose AI-generated text are now being used to help it blend in. Developer Siqi Chen has launched a new tool called 'Humanizer,' which instructs AI to stop sounding like a machine by drawing on Wikipedia's guide for spotting non-human content.

How the Humanizer AI Writing Tool Outsmarts Detection Algorithms

According to reports from Ars Technica and The Verge, Chen created the tool by feeding Anthropic's Claude a list of 'tells' compiled by Wikipedia's volunteer editors. These editors had organized an effort to combat poorly written, AI-generated content, identifying specific phrases and styles that scream 'bot.' Humanizer uses this knowledge to steer Claude away from those red flags, including by:

- Eliminating vague attributions that lack specific details.

- Removing promotional adjectives like 'breathtaking' or 'revolutionary.'

- Stripping away AI-isms such as 'I hope this helps!' or 'As an AI language model.'
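
To make the checklist above concrete, here is a minimal sketch of how such 'tells' could be flagged in a draft. It is only an illustration, not how Humanizer works: per the reports, the tool hands instructions to Claude rather than running a pattern filter. The promotional adjectives and AI-isms come from the examples above; the vague-attribution phrases and the names `TELLS` and `find_tells` are assumptions made up for this sketch.

```python
import re

# Illustrative patterns only. The adjectives and AI-isms are the examples
# quoted in the article; the vague-attribution phrases are hypothetical.
TELLS = {
    "promotional adjective": [r"\bbreathtaking\b", r"\brevolutionary\b"],
    "AI-ism": [r"\bI hope this helps\b", r"\bAs an AI language model\b"],
    "vague attribution": [r"\bexperts say\b", r"\bstudies show\b"],
}


def find_tells(text):
    """Return (category, matched phrase) pairs for every tell found in text."""
    hits = []
    for category, patterns in TELLS.items():
        for pattern in patterns:
            for match in re.finditer(pattern, text, flags=re.IGNORECASE):
                hits.append((category, match.group(0)))
    return hits


sample = "Experts say this revolutionary gadget is great. I hope this helps!"
for category, phrase in find_tells(sample):
    print(f"{category}: {phrase}")
```

A phrase list like this only catches exact wording, which is one reason a language model given the full guide can rewrite text more flexibly than a filter can.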

Technical Foundation and Availability

Humanizer runs as a custom skill within the Claude ecosystem. Official pricing remains unconfirmed, and the tool is currently positioned for content creators and writers who want their text to get past automated detection systems. As of January 22, 2026, it marks a shift in how AI-generated text is refined before publication: guidance written to catch machine prose is being reused as instructions for avoiding it.


Related Articles

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

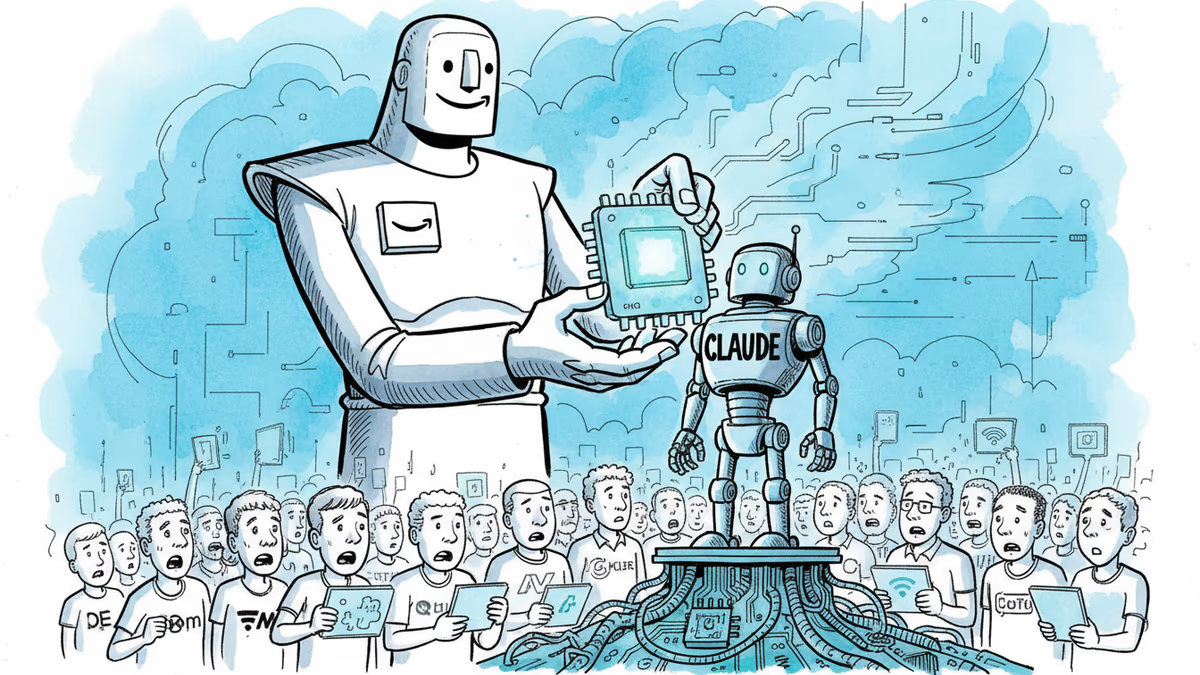

Amazon has poured an additional $5 billion into Anthropic, bringing its total stake to $13 billion—with up to $20 billion more on the table. Here's what the deal really signals about the AI infrastructure race.

Machine-translated junk is flooding minority-language Wikipedia pages. AI learns from that junk. The result could accelerate the extinction of thousands of languages.

Anthropic launched Claude Mythos Preview alongside Project Glasswing, a 50-plus company consortium tackling AI-driven cybersecurity threats. Here's what it means for the future of digital defense.
