The Pentagon Wants AI to Learn Its Secrets

The Pentagon is exploring training AI models from companies like OpenAI and xAI on classified military data. As tensions with Iran escalate, the plan raises urgent questions about security, accountability, and the future of AI in warfare.

An AI model is already helping rank targets in Iran. The Pentagon now wants to go further — it wants to teach the AI everything it knows.

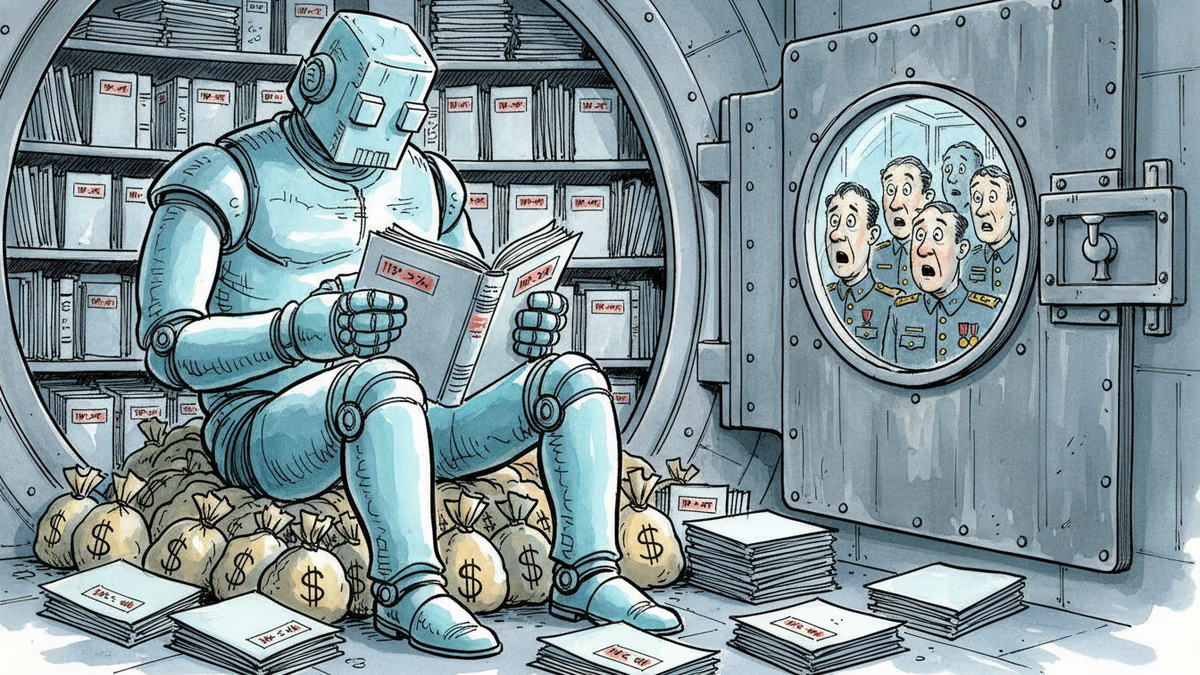

According to reporting by MIT Technology Review, the U.S. Department of Defense is in active discussions to build secure environments where generative AI companies — including OpenAI and Elon Musk's xAI — could train military-specific versions of their models directly on classified data. Not just query it. Train on it.

The distinction matters more than it might seem.

What's Actually Being Proposed

Today's setup already pushes boundaries. Anthropic's Claude is used in classified settings, including for analyzing potential targets in Iran. Data-analytics company Palantir has won major contracts building infrastructure that lets military officials query AI models about classified topics without the data flowing back to the AI companies' servers. Anthropic has also released Claude Gov, a version fine-tuned for government work across multiple languages and secure environments.

But all of that is essentially a read-only relationship. The AI answers questions about classified information; it doesn't absorb it.

What the Pentagon is now considering is fundamentally different. Under the proposed framework, a copy of an AI model would be placed inside an accredited secure data center alongside classified data — surveillance reports, battlefield assessments, signals intelligence — and trained on it. That information wouldn't just pass through the model. It would become part of the model.

The Defense Department would retain ownership of the data. AI company personnel with appropriate security clearances might access it in limited circumstances. And before going live with classified data, the Pentagon says it intends to first benchmark models trained on unclassified material, like commercially available satellite imagery, to establish a baseline for accuracy.

Why Now

The timing is deliberate. Defense Secretary Pete Hegseth issued a memo in January directing the Pentagon to become an "AI-first warfighting force." The department has since moved quickly: AI is now being used to draft contracts, generate reports, and — most consequentially — rank airstrike targets and recommend which to hit first.

The demand for more capable models is accelerating alongside real-world conflict. With Iran tensions rising, the military's appetite for AI that understands its specific operational context — not just general-purpose AI — is growing. General models trained on the open internet can only take you so far. A model that has internalized decades of classified signals intelligence is a different instrument entirely.

Aalok Mehta, who directs the Wadhwani AI Center at CSIS and previously led AI policy at Google and OpenAI, frames the appeal clearly: classified training could enable AI to do things currently done by human analysts — spotting subtle patterns in imagery, connecting new intelligence to historical precedent — at a scale and speed no human team can match.

The Problem No One Has Solved

But here's the risk that makes this genuinely hard: AI models don't forget on command.

When a human analyst loses their security clearance, you revoke their access. When an AI model trains on a piece of sensitive intelligence, such as the identity of a covert operative, that information becomes encoded in the model's weights. There's no clean way to surgically remove it afterward. Researchers in the field of "machine unlearning" are working on exactly this problem, and it remains unsolved.

Mehta identifies the most acute danger not as external leakage — "if you set this up right, you will have very little risk of that data being surfaced on the general internet or back to OpenAI" — but as internal cross-contamination. Different military departments operate at different classification levels and have different information access rights. If they share the same AI model, those walls effectively dissolve.

"You can imagine a model that has access to some sort of sensitive human intelligence — like the name of an operative — leaking that information to a part of the Defense Department that isn't supposed to have access to that information," Mehta says. The operative's safety could be at risk, and there's no perfect technical fix if the model is shared across units.

Three Perspectives Worth Holding Simultaneously

The AI companies are in an uncomfortable position. Classified military contracts represent enormous revenue — and strategic leverage in Washington. But OpenAI and xAI are not Lockheed Martin. They employ researchers and engineers who joined to build products for the public, not classified weapons systems. Google walked away from Project Maven in 2018 after employee protests. OpenAI has since moved in the opposite direction. Whether that shift reflects genuine strategic conviction or financial pragmatism is a question worth asking.

Adversaries are watching. China's military-civil fusion strategy already integrates civilian AI development into defense applications. If the U.S. standardizes classified AI training, it doesn't just upgrade American capabilities — it signals a new phase of AI-enabled military competition. The question isn't whether this arms race is happening; it's how fast the gap widens, and in which direction.

Civil society and oversight bodies are largely on the outside. There are no public frameworks governing what happens when a classified AI model is decommissioned, how errors in AI-assisted targeting decisions are reviewed, or what accountability looks like when an AI recommendation contributes to a strike that kills civilians. The technology is moving faster than the governance structures designed to contain it.
