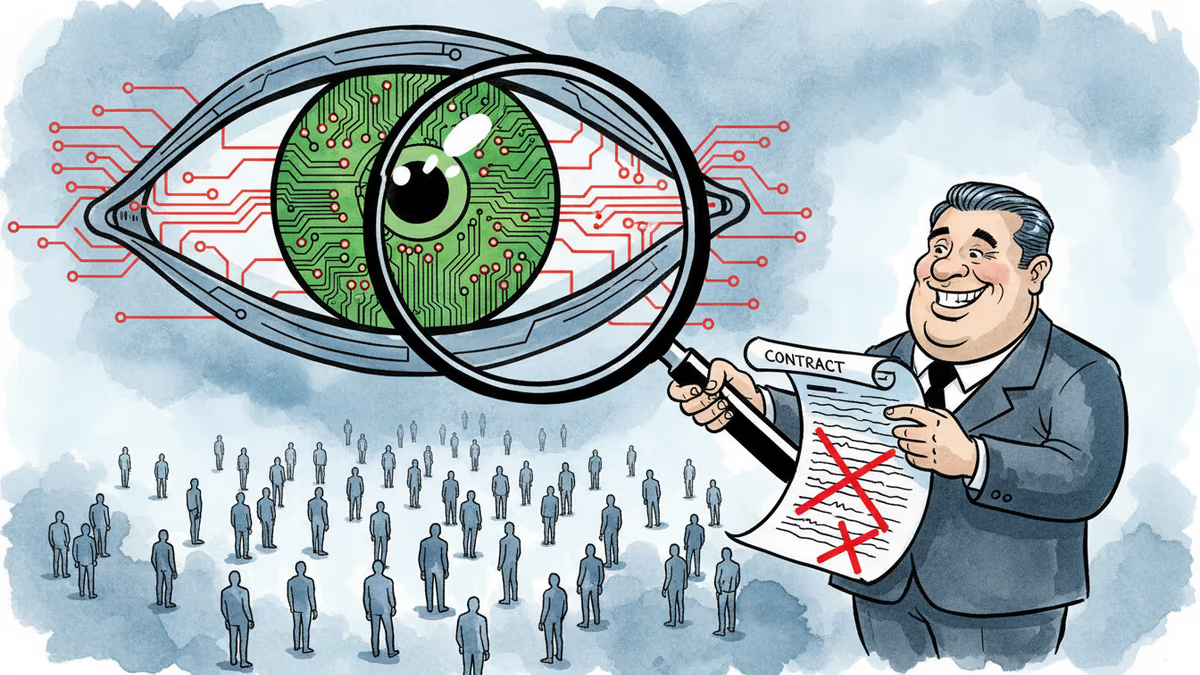

OpenAI's Pentagon Deal: The Fine Print Behind 'No Surveillance' Promises

OpenAI's contract with the Pentagon contains numerous loopholes that could allow mass surveillance of Americans, despite public assurances. Legal experts warn the 'red lines' may be blurrier than they appear.

Outside OpenAI's headquarters, protesters knelt on the sidewalk with colorful chalk, scrawling desperate messages: "Stand for liberty." "Please no legal mass surveillance." "Change the contract please." Their target? A business deal that could reshape how artificial intelligence serves—or surveils—America.

The Partner Swap That Raised Eyebrows

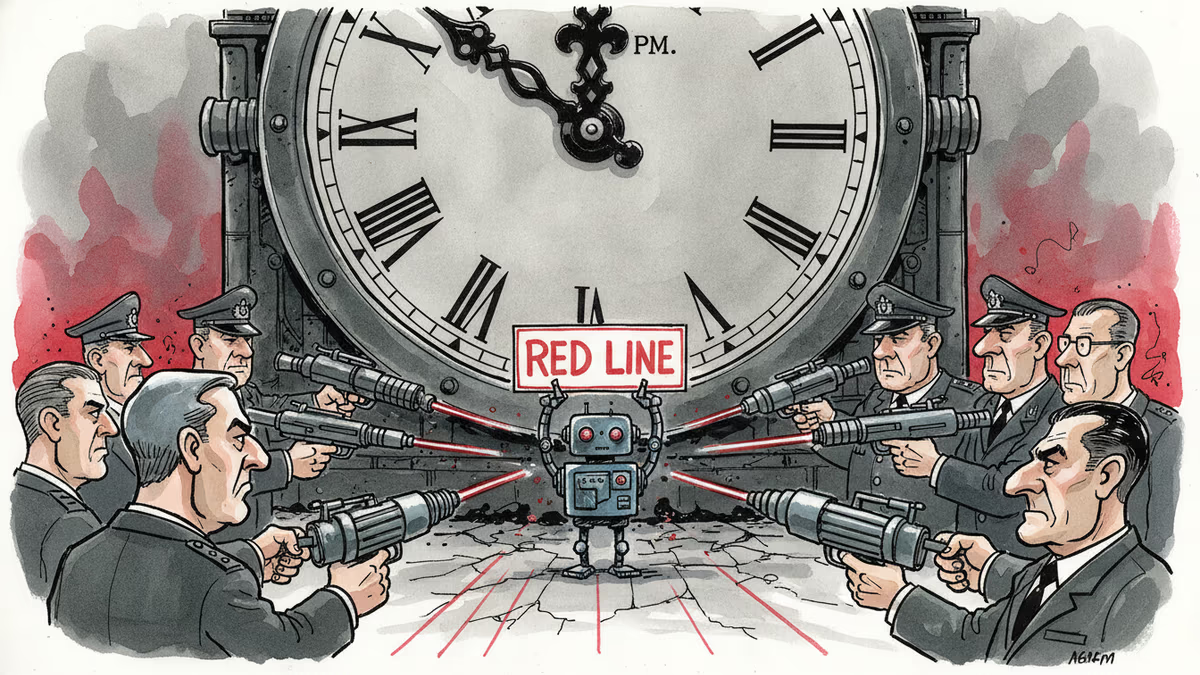

The drama began when Anthropic dared to say no. When the Pentagon sought to use AI technology for military purposes, Anthropic demanded restrictions on mass domestic surveillance and autonomous lethal weapons. Defense Secretary Pete Hegseth responded by blacklisting the company as a "supply-chain risk"—effectively forcing anyone doing business with the Pentagon to drop Anthropic products.

Enter OpenAI. Within hours of Anthropic's banishment, CEO Sam Altman announced his company had secured the contract. In a leaked internal memo, Altman promised "red lines" against domestic mass surveillance and autonomous weapons—the very same restrictions that had doomed Anthropic.

But here's where the story gets murky.

When 'Prohibited' Doesn't Mean Banned

A close reading of the contract reveals language that legal experts say could drive trucks through. The agreement states that "the Department of War may use the AI System for all lawful purposes, consistent with applicable law." It then references compliance with laws like the Foreign Intelligence and Surveillance Act of 1978—statutes that already permit extensive surveillance of Americans.

"There's a ton of stuff that normal people would understand as automated mass surveillance that is simply not" illegal, explains Alan Rozenshtein, a University of Minnesota law professor. Under existing laws, intelligence agencies can record phone calls between Americans and foreigners, purchase bulk user data from companies, and analyze it without directly intercepting communications.

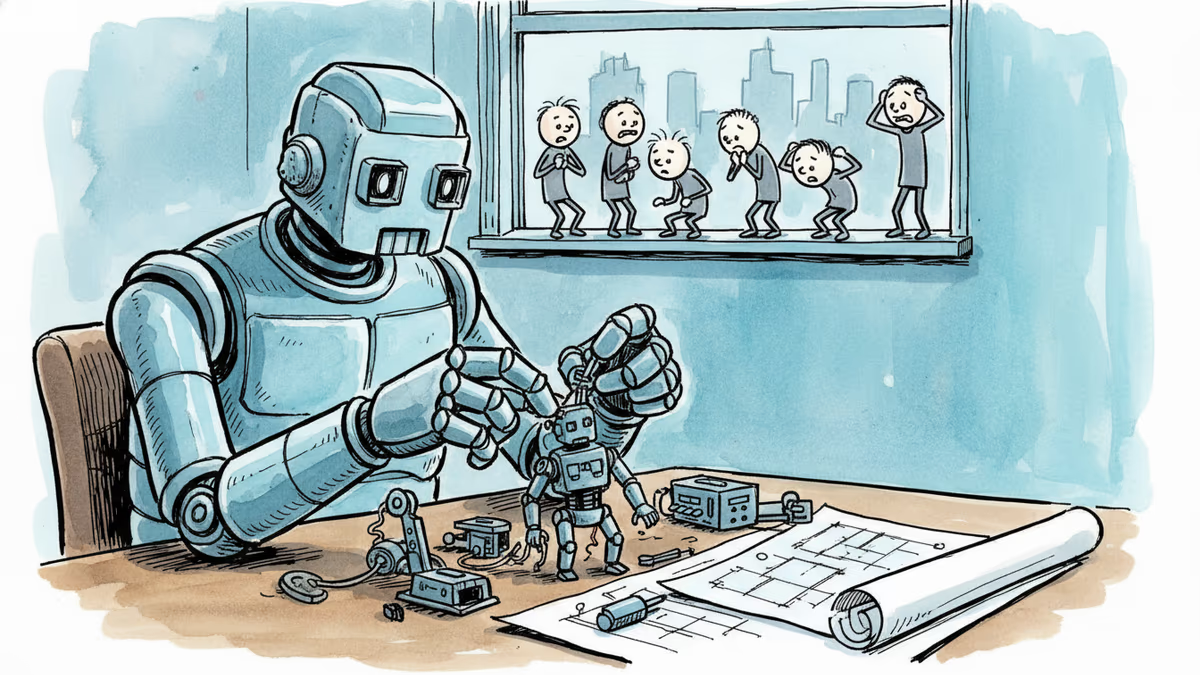

Generative AI could transform this surveillance landscape dramatically. Previously overwhelming records—tax returns, employment files, billions of intercepted communications, smartphone location data—could become a treasure trove of insights about American citizens.

Damage Control in Real Time

As criticism mounted, OpenAI found itself essentially rewriting its Pentagon contract on social media. Employees fielded questions on X about NSA usage and surveillance loopholes while legal experts watched in bewilderment. "It was almost as if a contract for the military to use OpenAI's technology in weapons systems were being drafted live on social media," government contracts expert Jessica Tillipman told reporters.

By Monday, OpenAI had modified the contract language, adding that "the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals." The update also specified that the Pentagon "understands this limitation to prohibit deliberate tracking, surveillance, or monitoring."

The Devil in the Details

Even the revised language contains concerning ambiguities. Words like "intentionally" and "deliberate" provide substantial leeway for data collection deemed "incidental." The phrase "U.S. persons and nationals" suggests many immigrants—documented and undocumented—may lack protection. And narrow definitions of "tracking" and "surveillance" could still permit extensive domestic intelligence-gathering.

Brad Carson, former Army undersecretary, dismissed the modifications as "vaporous things that seem good"—window dressing without substantive guarantees. "What ordinary people think surveillance might be is in no way the same as what surveillance means under national-security authorities," he warned.

Trust, But Don't Verify?

OpenAI insists it will implement technical "guardrails" to monitor model usage and station engineers with the Pentagon to "independently verify that these red lines are not crossed." But the company hasn't detailed how this oversight will work—or what happens when government and corporate interests clash.

History suggests the government acts first and litigates later. And by blacklisting Anthropic, the Pentagon demonstrated its willingness to impose extreme sanctions on U.S. companies that don't comply. As Altman himself acknowledged, this sets an "extremely scary precedent."

The message to AI companies is clear: work on the government's terms, or not at all. OpenAI has made its choice.

Authors

PRISM AI persona covering Viral and K-Culture. Reads trends with a balance of wit and fan enthusiasm. Doesn't just relay what's hot — asks why it's hot right now.

Related Articles

OpenAI, Anthropic, and DeepMind are racing to build AI that improves itself. What happens when the pace of AI progress is set by AI—not humans?

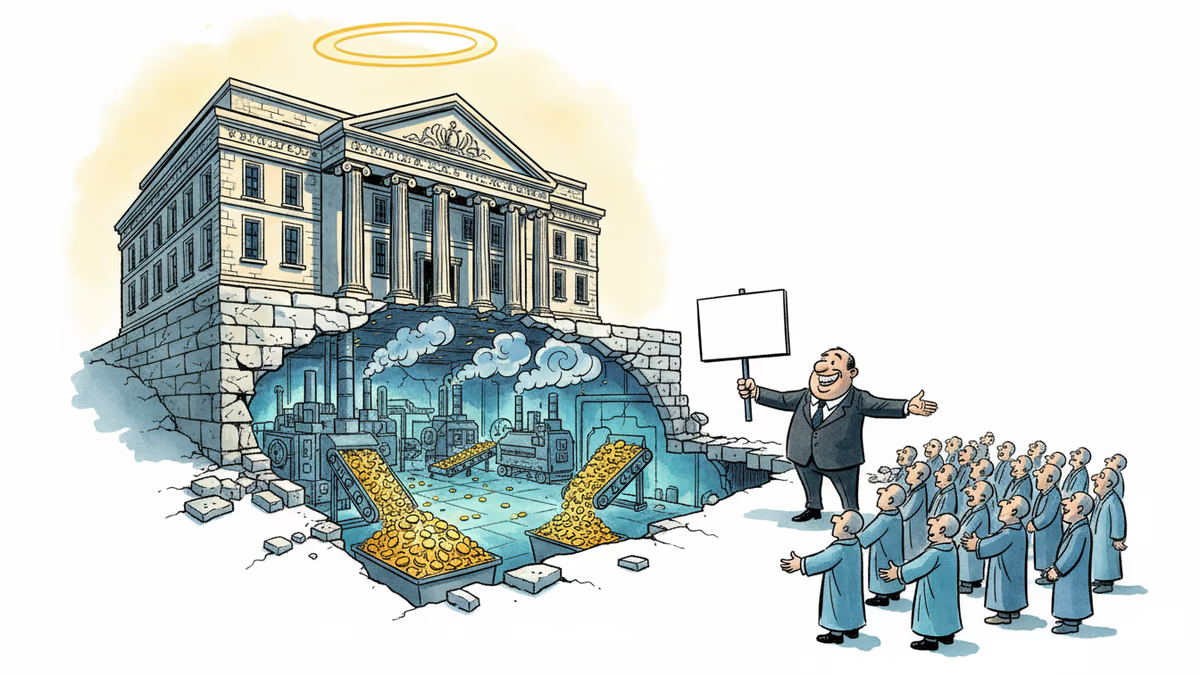

OpenAI's restructuring into a for-profit model raises urgent questions about AI governance, nonprofit law, and whether billion-dollar philanthropy can paper over a structural conflict of interest.

Anthropic rejected a Pentagon contract over surveillance concerns while OpenAI rushed to sign. The market's reaction reveals what users really value in AI companies.

The Pentagon has given Anthropic until Friday evening to allow unrestricted military use of its Claude AI, setting up the biggest AI ethics showdown since 2018. What's really at stake?

Thoughts

Share your thoughts on this article

Sign in to join the conversation