AI Agent Reliability in 2026: Can Mathematical Proofs Fix LLM Hallucinations?

Explore the debate on AI agent reliability in 2026. From Vishal Sikka's mathematical skepticism to Harmonic's formal verification, we analyze whether LLM hallucinations can be fixed.

The industry promised that 2025 would be the year of the AI agent, but it mostly ended up being the year of talk. As we move into 2026, a fundamental question remains: Can we ever truly trust these digital workers, or is the dream of full automation a mathematical impossibility?

The Mathematical Limits of AI Agent Reliability

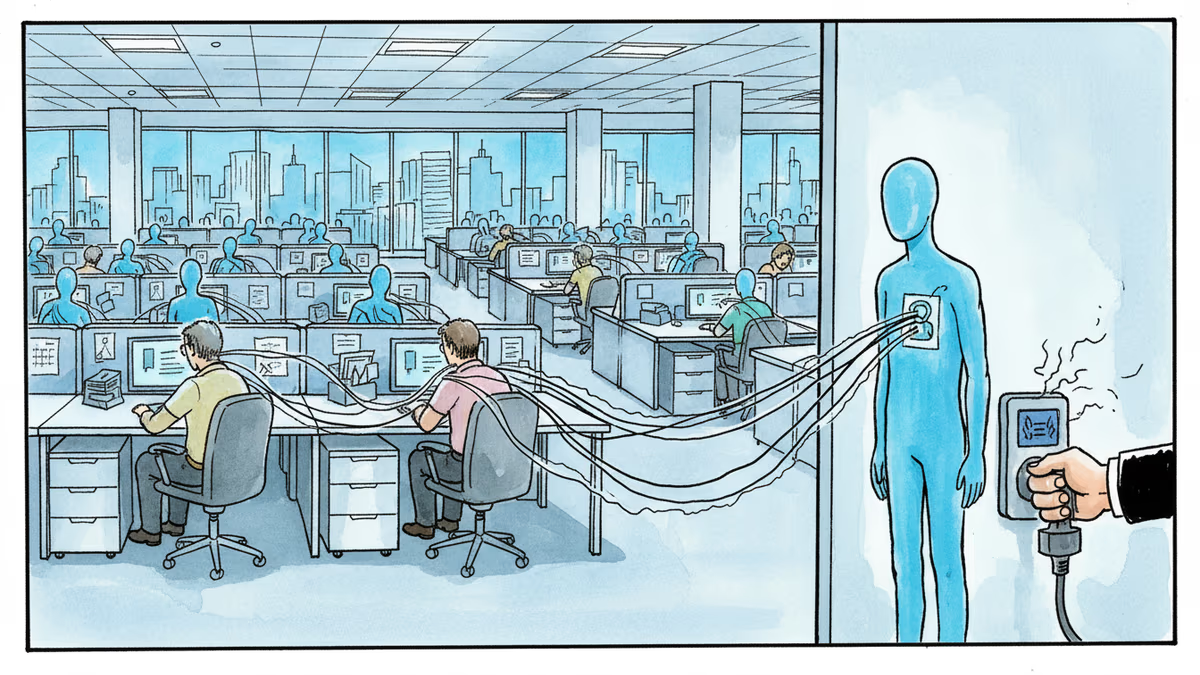

A provocative paper titled 'Hallucination Stations' recently challenged a core assumption of the AI agent narrative. Authored by former SAP CTO Vishal Sikka, the paper argues that Transformer-based LLMs are mathematically incapable of carrying out agentic tasks beyond a certain level of complexity. Even OpenAI acknowledged in late 2025 that model accuracy will likely never reach 100%, which leaves critical tasks such as running power plants out of AI's reach for now.
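To see why accuracy below 100% matters so much for agents, consider a rough back-of-the-envelope sketch. This is not the argument made in 'Hallucination Stations'; it is a simplified illustration that assumes every step of an agentic task succeeds independently with the same probability. The function names and the 99% figure are hypothetical and chosen only for illustration.

```python
import math


def task_success_probability(step_accuracy: float, num_steps: int) -> float:
    """Chance that every step succeeds, ASSUMING steps are independent
    and share the same per-step accuracy (a simplification)."""
    return step_accuracy ** num_steps


def max_steps_for_target(step_accuracy: float, target: float) -> int:
    """Largest number of steps for which overall success stays >= target,
    under the same independence assumption."""
    if not (0.0 < step_accuracy < 1.0 and 0.0 < target < 1.0):
        raise ValueError("step_accuracy and target must be strictly between 0 and 1")
    # Solve step_accuracy ** n >= target for n.
    return math.floor(math.log(target) / math.log(step_accuracy))


if __name__ == "__main__":
    # Hypothetical per-step accuracy of 99%.
    for steps in (1, 10, 100):
        print(steps, round(task_success_probability(0.99, steps), 3))
    print(max_steps_for_target(0.99, 0.5))
```

Under these assumptions, an agent that is right 99% of the time at each step completes a 100-step task only about 37% of the time, and its odds drop below even after 68 steps. Real agents can retry and self-correct, so the numbers are illustrative rather than predictive.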

Formal Verification: The Path Forward for AI Agent Trust

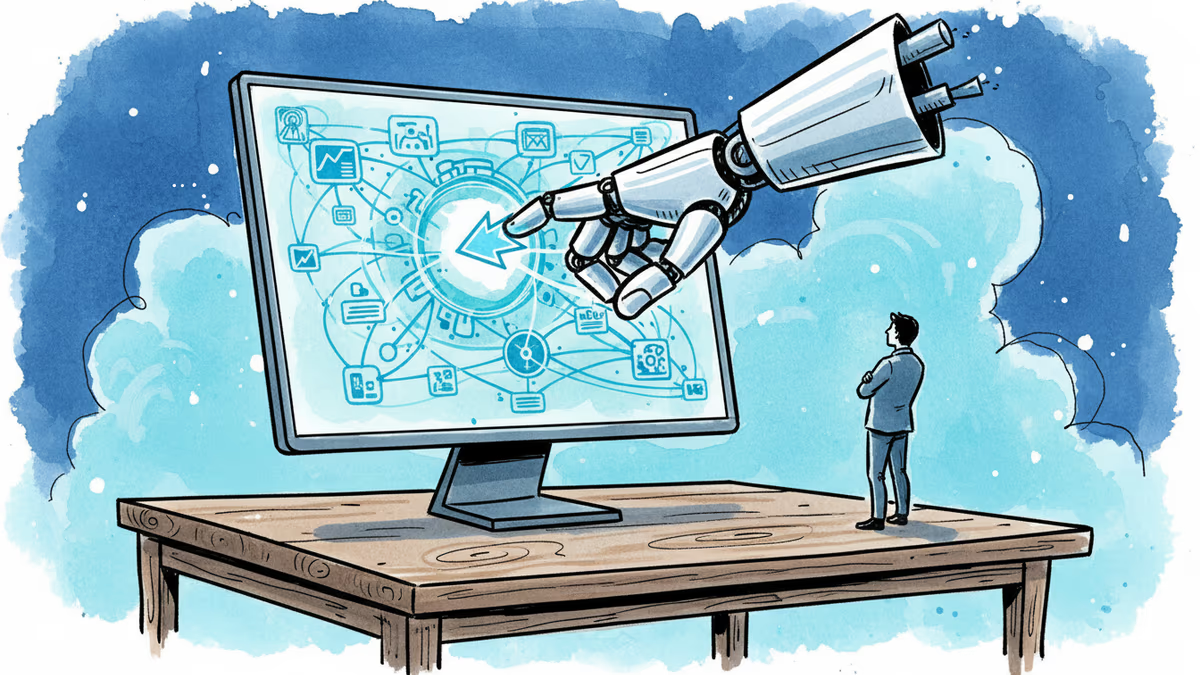

While skeptics point to math as a barrier, others use it as a solution. Harmonic, a startup co-founded by Robinhood CEO Vlad Tenev, is pioneering what it calls 'mathematical superintelligence.' Its product, Aristotle, encodes AI outputs in the Lean programming language so that their correctness can be verified. This approach doesn't eliminate hallucinations, but it builds a mathematical guardrail that ensures only verified results reach the user.
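As a minimal sketch of what checking a claim in Lean looks like, the Lean 4 snippet below states two small claims and lets the proof checker accept or reject them. It is a generic illustration, not Aristotle's actual output or pipeline; the theorem names are hypothetical.

```lean
-- A concrete claim a model might produce. Lean accepts it because
-- both sides evaluate to the same value.
theorem two_add_two : 2 + 2 = 4 := rfl

-- A general claim, checked for all natural numbers at once
-- using a lemma from Lean's core library.
theorem add_comm_claim (a b : Nat) : a + b = b + a := Nat.add_comm a b

-- A hallucinated claim does not compile, so it never gets through:
-- theorem bad_claim : 2 + 2 = 5 := rfl   -- rejected by Lean
```

The point of the design is that the model is free to be wrong: a false statement simply fails to type-check, so only claims with a machine-checked proof are passed along.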
