AI Can Read Your X-Ray. But Is It Actually Helping You?

65% of US hospitals use AI-powered predictive tools, but almost no one is measuring whether patients actually get better. Accuracy and effectiveness aren't the same thing.

The Tool Works. But Does the Patient?

65% of US hospitals already use AI-powered predictive tools. Of those, only two-thirds have evaluated the tools for accuracy, and even fewer have checked them for bias, according to a study published in January 2025 by researchers at the University of Minnesota. The technology spread fast. The scrutiny didn't.

This week, Jenna Wiens, a computer scientist at the University of Michigan, and Anna Goldenberg of the University of Toronto published a paper in Nature Medicine that puts a name to the gap. Their argument is deceptively simple: a tool being accurate and a tool actually improving patient health are two entirely different claims. Right now, we're measuring the first and mostly ignoring the second.

Doctors Love It. Patients… We're Not Sure.

The fastest-spreading AI tool in hospitals right now is the ambient AI scribe. It listens to doctor-patient conversations, then automatically transcribes and summarizes them. Physicians get their notes done without lifting a finger. They can look patients in the eye instead of staring at a screen.

A staffer at a major New York medical center told Wiens that doctors are, anecdotally, "overjoyed" by the technology. Early studies back this up: clinician burnout is down, satisfaction is up. These are real benefits. No one is dismissing them.

But Wiens stops the celebration there. "Researchers have evaluated provider and patient satisfaction," she says, "but not really how these tools are affecting clinical decision-making. We just don't know."

The Quiet Risk Nobody's Talking About

Consider a concrete scenario. AI speeds up the reading of a chest X-ray. Does that lead to better treatment? It depends entirely on how much the doctor trusts the AI's read — and that varies by hospital, by department, by how many years a physician has been practicing. A tool that works brilliantly in one clinical workflow might quietly underperform in another.

There's a subtler concern too. Research on AI use in education suggests that these tools can change how people cognitively process information. Could an AI scribe gradually alter how a doctor internalizes a patient's story? Could medical students trained alongside AI scribes develop different — perhaps weaker — habits of clinical reasoning? Wiens thinks these questions need urgent exploration. "We like things that save us time," she says, "but we have to think about the unintended consequences."

This isn't a fringe worry. It sits at the heart of a broader tension in health-tech: the gap between what a product demo shows and what happens at 3 a.m. in a busy ER when a junior resident leans on an AI recommendation a little too heavily.

Who's Actually Checking?

The companies building these tools have obvious incentives to demonstrate accuracy. Independent verification of real-world patient outcomes is a different matter — slower, more expensive, and nobody's immediate priority when adoption is booming.

Wiens is clear that she isn't calling for a slowdown. "I do believe in the potential of AI to really improve clinical care," she says. What she wants is for hospitals — not just the vendors selling the tools — to rigorously evaluate how well they work in their specific settings. There's a possibility, she notes, that some tools could leave patients worse off. More likely, she thinks, is that many AI tools are simply less beneficial than health-care providers assume.

For investors and health-tech companies, this is worth sitting with. A regulatory or liability reckoning — driven by a high-profile case where AI-assisted care led to a poor outcome — could arrive faster than the industry expects. The FDA has been tightening its framework for AI medical devices, but approval and real-world effectiveness remain different standards.

For patients, the question is more immediate: when your doctor uses an AI tool to help manage your care, do you have any way of knowing whether that tool has been evaluated for people like you? In most cases, the honest answer is no.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

US health systems are launching branded AI chatbots, framing them as safer alternatives to ChatGPT for medical advice. But convenience and conflict of interest may be harder to separate than they appear.

Northeastern University researchers manipulated AI agents into divulging data, crashing systems, and spiraling into loops—just by exploiting their built-in good behavior.

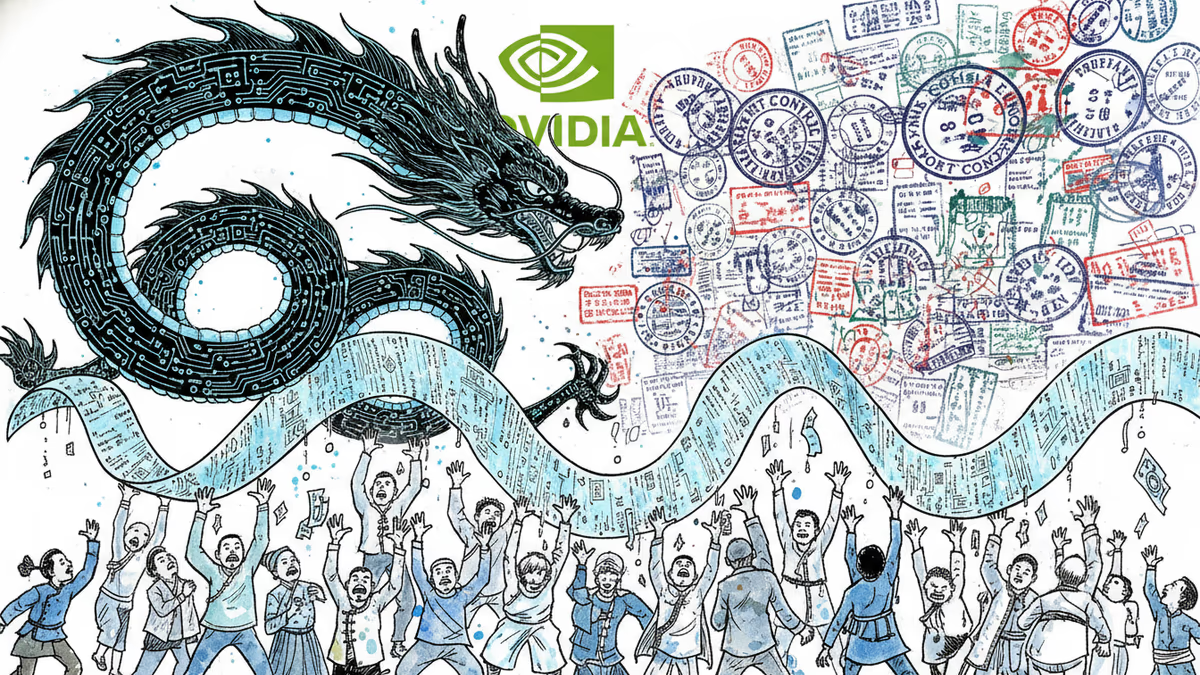

DeepSeek's V4 won't replicate the R1 shock—but it redraws the open-source AI map on pricing, long-context efficiency, and China's push to ditch Nvidia. Here's what's worth watching.

Palantir has become the tech backbone of Trump's immigration enforcement. Former employees are calling it a 'descent into fascism.' What happens when the people who build surveillance tools start asking uncomfortable questions?

Thoughts

Share your thoughts on this article

Sign in to join the conversation