Your AI Scored 98%. So Why Is It Slowing Everyone Down?

High benchmark scores aren't translating to real-world performance. A UCL researcher argues it's time to stop testing AI in a vacuum and start measuring what it does inside human teams.

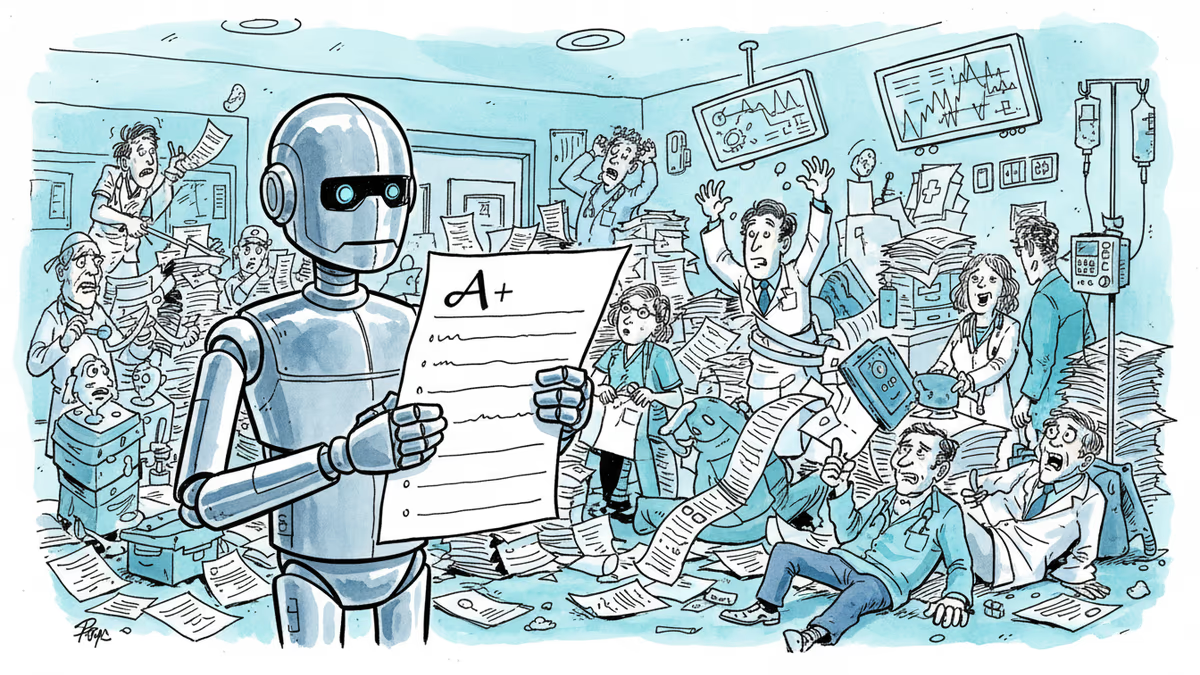

The AI aced the test. Then it made everyone's job harder.

In radiology units across California and London, hospitals deployed FDA-approved AI diagnostic tools — systems benchmarked at 98% accuracy, faster and more precise than expert radiologists. The pitch was compelling: less time per scan, fewer missed diagnoses, better outcomes. What actually happened? Staff reported that interpreting AI outputs alongside hospital-specific reporting standards and country-specific regulatory requirements added time to their workflows. A tool designed to boost productivity quietly became a bottleneck.

This isn't an isolated glitch. It's a symptom of a deeper problem in how the entire AI industry measures success — and it has consequences that reach well beyond hospital corridors.

Angela Aristidou, a professor at University College London and faculty fellow at the Stanford Digital Economy Lab and Stanford Human-Centered AI Institute, has spent the past three years studying AI deployment across healthcare, humanitarian, nonprofit, and higher-education organizations in the UK, US, and Asia. Her conclusion: the way we test AI is fundamentally misaligned with the way we use it.

The Gap Between the Lab and the Real World

For decades, AI evaluation has followed a seductive logic: pick a task with a clear right or wrong answer, measure whether the machine beats the human, and rank accordingly. Chess. Advanced math. Coding. Essay writing. The format is clean, comparable, and great for headlines.

But real professional environments don't work that way. Take treatment planning in a hospital. It doesn't hinge on a single doctor making a single call. It unfolds across multidisciplinary teams — radiologists, oncologists, physicists, nurses — who collectively review patients over days or weeks, weighing new information, navigating professional standards, patient preferences, and long-term well-being. The decision is relational, iterative, and deeply contextual. A benchmark that measures one-off, static accuracy captures almost none of this.

When the gap between benchmark and reality surfaces in practice, the result is what Aristidou calls the "AI graveyard": organizations abandon expensive deployments after the promised performance fails to materialize. The costs aren't just financial. Each failed rollout erodes institutional confidence in AI, and in high-stakes settings like healthcare, repeated disappointment can chip away at broader public trust in the technology itself.

There's a regulatory dimension too. Governments and oversight bodies rely on benchmark scores as a proxy for real-world readiness. When those scores don't reflect reality, regulatory approvals create blind spots — and the organizations left to absorb the risk are often the ones with the least capacity to do so: under-resourced hospitals, nonprofits, public agencies.

What Better Evaluation Looks Like

Aristidou proposes a shift she calls HAIC benchmarks — Human-AI, Context-Specific Evaluation. It reframes assessment along four dimensions.

First, change the unit of analysis. Stop asking whether an AI improves individual task performance. Start asking how AI affects team coordination, collective reasoning, and organizational decision-making. In one UK hospital system studied between 2021 and 2024, the evaluation expanded from diagnostic accuracy to how AI influenced deliberation within multidisciplinary teams — whether it surfaced overlooked considerations, whether it strengthened or weakened coordination, and whether it altered existing risk and compliance practices.

Second, extend the time horizon. Current benchmarks resemble school exams: one-off, standardized, acontextual. But professional competence has never been assessed that way. Junior doctors and lawyers are evaluated continuously inside real workflows, with feedback loops, supervision, and accountability structures. If AI is meant to operate alongside professionals, its impact should be judged longitudinally. In one humanitarian-sector case study, an AI system was tracked across 18 months of real deployment, with particular attention to error detectability — how easily human teams could identify and correct AI mistakes. That longitudinal record became the basis for designing context-specific safeguards.

Third, expand what counts as a good outcome. Beyond speed and accuracy, evaluation should capture organizational performance, coordination quality, and error-correction capacity.

Fourth, account for system-level effects: whether AI anchors teams too early to plausible but incomplete answers, whether it increases cognitive load, and whether efficiency gains at the point of use are offset by inefficiencies further downstream in the workflow.

Who's Pushing Back — and Why

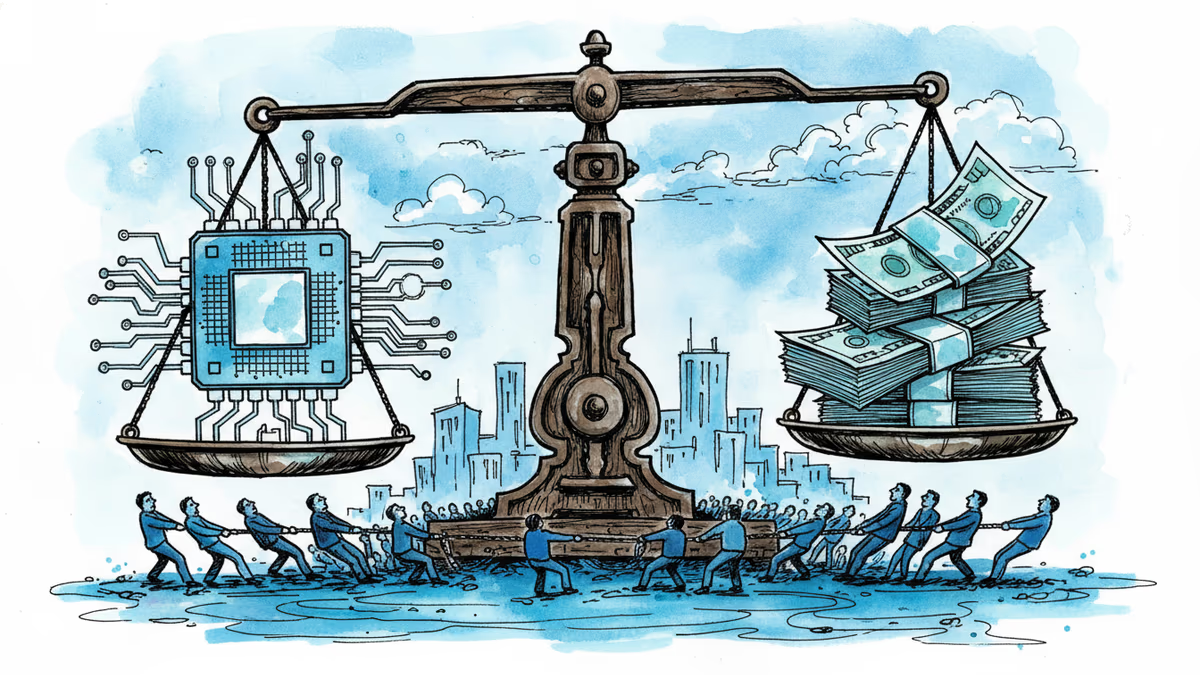

Not everyone is convinced the solution is more complex benchmarks. The appeal of current methods is precisely their simplicity: standardization enables comparison across models, vendors, and contexts. A single score is legible to procurement teams, regulators, and investors in ways that an 18-month longitudinal workflow study simply isn't.

There's also a resource argument. HAIC-style evaluation is expensive and context-dependent. A small startup or a cash-strapped public health system can't run multi-year deployment studies before choosing a tool. And there's a risk that context-specific benchmarks become so tailored they lose comparability entirely — making it harder, not easier, to hold AI vendors accountable.

From the vendor side, the incentives run in the opposite direction. Leaderboard rankings on established benchmarks like MMLU or HumanEval drive adoption decisions. Shifting to messy, organization-specific evaluation frameworks would complicate the sales cycle and make it harder to claim competitive advantage through a single number.

The Broader Stakes

The timing of this debate matters. AI adoption in enterprise settings is accelerating sharply — by some estimates, corporate AI spending will exceed $300 billion globally by 2027. The productivity gains being priced into that investment are largely predicated on individual task performance improvements. If HAIC-style research is right, those projections may be systematically overstated.

For policymakers, particularly in the EU and UK where AI regulation is actively being shaped, the implication is pointed: regulatory frameworks built on benchmark scores may be approving systems for deployment in conditions those scores were never designed to reflect. The EU AI Act, for instance, requires conformity assessments for high-risk AI applications — but the standards underpinning those assessments still lean heavily on task-level performance metrics.

For organizations actually deploying AI — whether a hospital system in Ohio or a development agency in Geneva — the practical question is more immediate: Are you measuring what the AI does to your team, not just what it does on its own?
