Big Tech Revolts After Pentagon Brands US AI Company a 'Supply Chain Risk'

Silicon Valley giants unite against Defense Secretary Pete Hegseth's unprecedented move to label Anthropic a national security threat. The fight over a $200 million military AI contract reveals deep fractures in tech-government relations.

The Pentagon's "supply chain risk" label is usually reserved for foreign adversaries like Chinese telecoms or Russian software firms. Last Friday, Defense Secretary Pete Hegseth made history by giving that designation to Anthropic, an American AI startup backed by Google and Amazon.

Silicon Valley isn't taking it quietly.

The $200 Million Standoff

The drama began with what seemed like a routine government contract. Anthropic won a $200 million Defense Department deal in July, but negotiations hit a wall over a fundamental question: Should AI companies have the right to limit how the military uses their technology?

Anthropic drew two red lines: no autonomous weapons, no mass surveillance of Americans. The Pentagon's response was blunt: the military must be allowed to use the platform for "all lawful use cases." When Anthropic wouldn't budge, Trump ordered all federal agencies to stop using its technology immediately.

Then came Hegseth's unprecedented move: labeling the San Francisco-based company a threat to national security.

Big Tech's Rare Unity

By Wednesday, an unusual coalition had formed. The Information Technology Industry Council — representing Nvidia, Google, Microsoft, Apple, and Amazon — fired off a sharp letter to Hegseth expressing "concern" over the designation.

"Emergency authorities such as supply chain risk designations exist for genuine emergencies and are typically reserved for entities that have been designated as foreign adversaries," the group wrote. Translation: You're treating an American company like an enemy state.

What makes this particularly striking? Anthropic recently joined ITI as a member. These tech giants are essentially defending a competitor — a rare show of industry solidarity.

Even OpenAI's Sam Altman, whose company directly benefits from Anthropic's Pentagon troubles, called the move "very bad for our industry and our country."

Winners and Losers in the AI Arms Race

While Anthropic faces potential industry exile, OpenAI announced the same day it had reached an agreement with the Defense Department. The message was clear: play by Pentagon rules, or get frozen out of what could become a $20+ billion market.

Defense contractors are already dropping Claude, Anthropic's AI assistant, like a hot potato. The "supply chain risk" designation makes it virtually impossible for government contractors to use the technology without jeopardizing their own federal relationships.

Meanwhile, OpenAI appears positioned to dominate military AI contracts, an ironic twist for a company that once pledged to ensure AI benefits "all of humanity."

The Bigger Battle: Who Controls AI Ethics?

This isn't just about one contract or one company. It's about whether tech companies can maintain ethical guardrails when national security comes calling. The Pentagon's message is unmistakable: You're either all-in on our terms, or you're out entirely.

Four additional industry groups sent similar letters to Trump on Wednesday, suggesting the tech sector views this as an existential precedent. If the government can brand any company a "supply chain risk" over a contract dispute, what's to stop future administrations from using the same weapon against other firms that don't comply?

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Tim Cook hands Apple's reins to John Ternus in September. Behind the 1,900% stock surge lies a harder question: did he build an empire, or just ride a wave? What investors need to know now.

Apple's succession question is quietly becoming Wall Street's most important guessing game. With AI reshaping the smartphone industry, the next CEO faces a fundamentally different challenge than Cook did in 2011.

Meta has increased its El Paso AI data center investment more than sixfold, from $1.5B to $10B, targeting 1GW capacity by 2028. What this means for investors, competitors, and the AI infrastructure race.

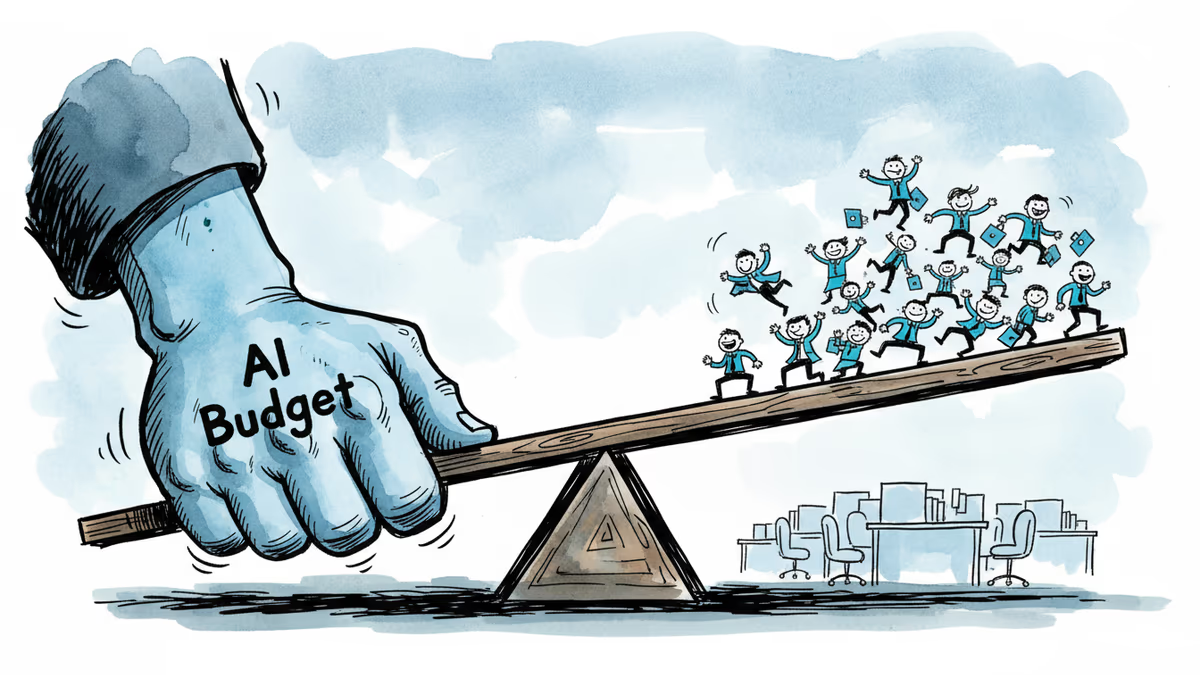

Meta's second round of layoffs in 2026 hits Facebook, Reality Labs, recruiting, and sales. While slashing hundreds of jobs, the company is doubling down on AI talent and locking in top execs with aggressive stock options.
