OpenAI's Pentagon Deal: Where AI Safety Meets National Security

OpenAI unveils multi-layered protections in its US Defense Department partnership, raising questions about balancing AI innovation with military applications and ethical boundaries.

The company that gave us ChatGPT just drew a line in the sand—but it's written in pencil. OpenAI's announcement of "layered protections" in its Pentagon partnership represents a careful dance between innovation and ethics, profit and principle.

What's Really on the Table

OpenAI's multi-tier protection system sounds reassuring: technical safeguards, ethical reviews, and a firm "no" to autonomous weapons. The partnership focuses on cybersecurity and intelligence analysis—areas that feel safely removed from the battlefield.

But dig deeper, and the boundaries blur. Intelligence analysis can inform targeting decisions. Cybersecurity tools can become cyber weapons. The $18 billion the US government is spending on AI this year doesn't come with a guarantee that today's defensive tool won't become tomorrow's offensive capability.

The Money Trail Tells a Story

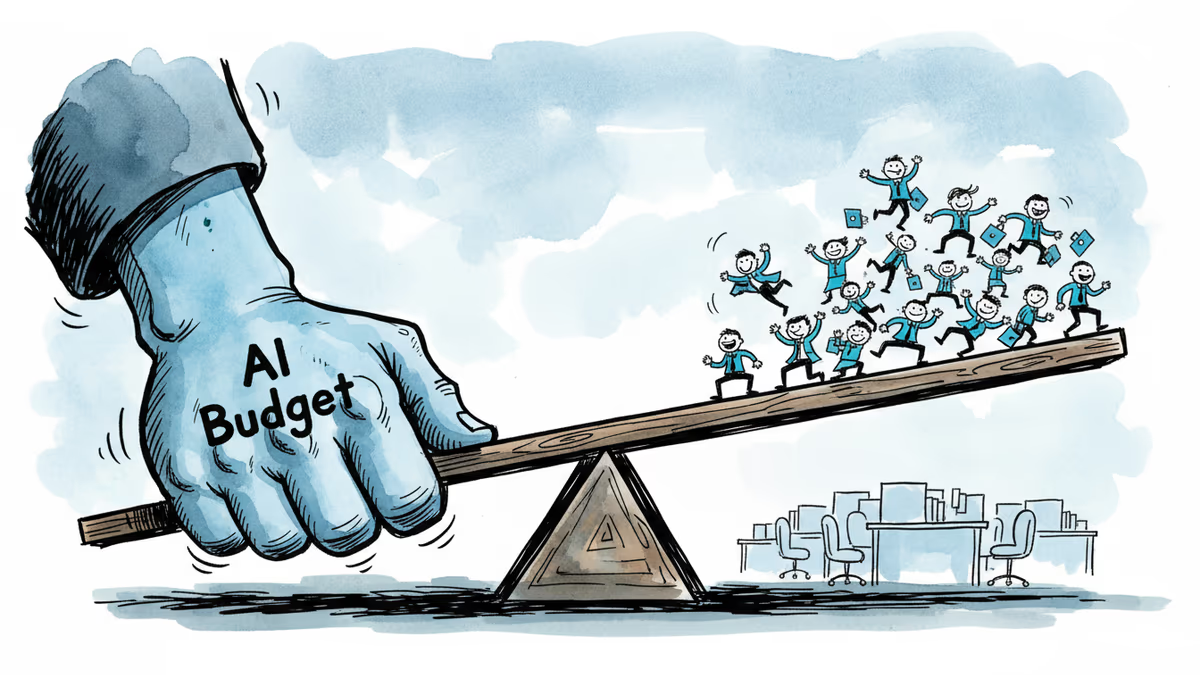

OpenAI's valuation hit $157 billion in its latest funding round, but that astronomical figure comes with astronomical costs. Training advanced AI models requires massive computing power and talent—both expensive. Government contracts offer stable, long-term revenue streams that venture capital can't match.

Yet the financial calculus isn't simple. In 2018, after employee protests, Google declined to renew its contract for the Pentagon's Project Maven, choosing brand integrity over government dollars. OpenAI's bet is that careful implementation can avoid that fate, but history suggests the line between "safe" military AI and controversial applications has a way of shifting.

The Global Domino Effect

While OpenAI sets up guardrails, competitors in China and elsewhere face no such constraints. Baidu and Alibaba operate in an environment where commercial and military applications blend seamlessly. This creates a prisoner's dilemma: can American AI companies afford to self-limit while rivals don't?

The partnership also signals to allies and adversaries that the US is serious about maintaining AI supremacy. For NATO partners developing their own AI strategies, OpenAI's approach may become a template. For competitors, it's a signal to accelerate their own military AI programs.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

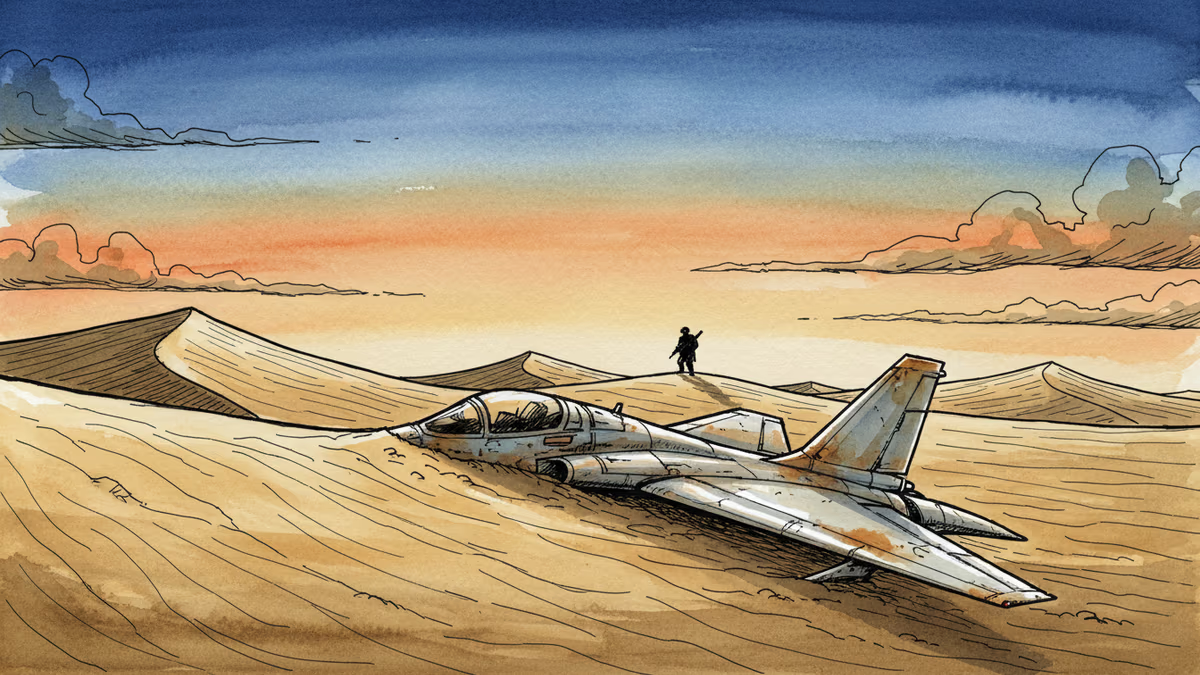

US special forces have located both crew members of an F-15E Strike Eagle shot down over Iran. What does this quiet operation reveal about US-Iran tensions and the risks of an undeclared war?

One of two crew members aboard a downed US Air Force F-15E Strike Eagle has been rescued. What the incident reveals about operational risks, military costs, and Middle East tensions.

Russia is nearing completion of phased weapons, food, and medicine deliveries to Iran. What this means for Middle East stability, energy markets, and the future of Western sanctions.

Meta's second round of layoffs in 2026 hits Facebook, Reality Labs, recruiting, and sales. While slashing hundreds of jobs, the company is doubling down on AI talent and locking in top execs with aggressive stock options.

Thoughts

Share your thoughts on this article

Sign in to join the conversation