When AI Meets Nuclear War: The 4-Minute Question

Trump's ban on Anthropic reveals a deeper conflict over AI's role in nuclear command systems. As machines get smarter, the stakes of getting it wrong have never been higher.

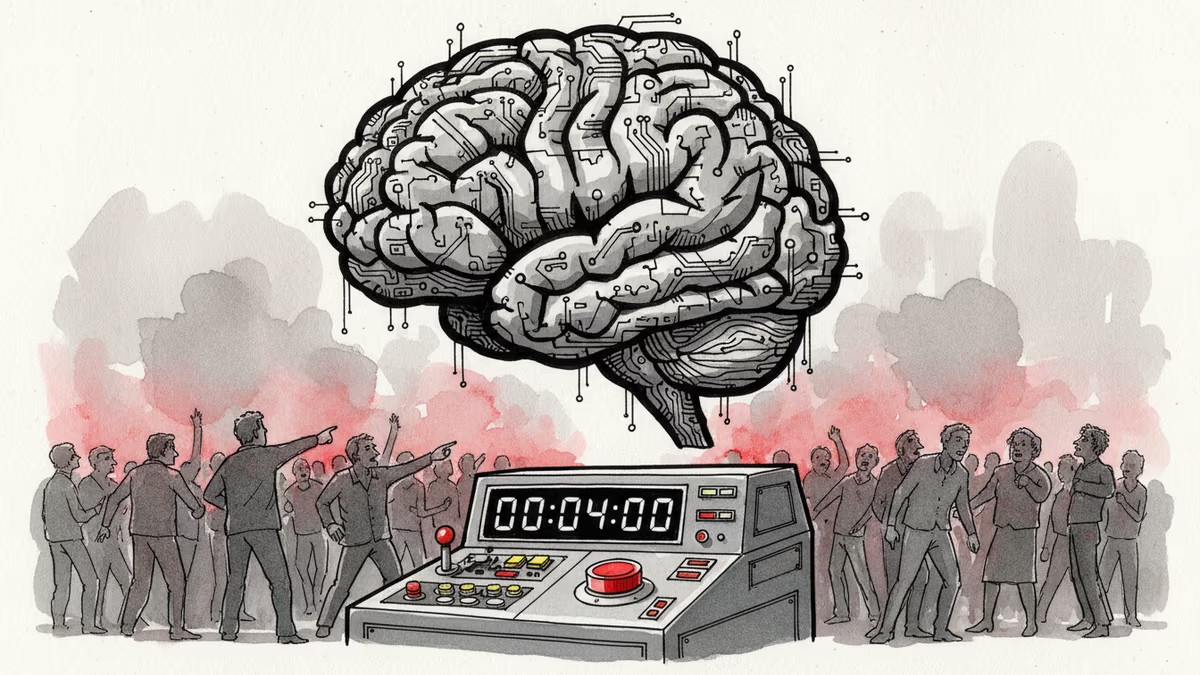

Four minutes. That's how long a US president would have to decide whether to launch a nuclear counterstrike if missiles were heading toward American soil. Now imagine an AI system is whispering in their ear.

This isn't science fiction—it's the reality behind President Trump's decision to blacklist AI company Anthropic from all federal use last Friday. What looked like another culture war skirmish between a "woke" tech company and an "anti-woke" Pentagon actually reveals something far more consequential: we're stumbling toward a future where artificial intelligence could help determine humanity's survival.

The December Confrontation That Changed Everything

The feud began with a hypothetical scenario that Under Secretary of Defense Emil Michael posed to Anthropic CEO Dario Amodei in early December. Picture this: nuclear missiles are flying toward the United States. Would Anthropic refuse to help defend the country because of its prohibition on autonomous weapons?

Amodei's response—that the Pentagon should "reach out and check" with the company first—infuriated Michael. Anthropic later clarified it would create exceptions for missile defense, but the damage was done. The conversation poisoned relations between Silicon Valley's safety-conscious AI developers and a Pentagon increasingly hungry for AI capabilities.

This wasn't just bureaucratic posturing. The US military is already exploring how AI and machine learning can be integrated into nuclear command and control systems to "enable and accelerate human decision-making." We don't know how far they've gotten, but we know they're serious about it.

Beyond the Red Button: The Real AI Nuclear Dilemma

Most discussions about AI and nuclear weapons focus on whether machines should ever control the launch button. But experts say that's the easy question—no country is likely to hand nuclear strike decisions to AI.

The harder challenge lies in "strategic warning"—using AI to synthesize massive amounts of data from satellites, radar, and sensors to detect threats as quickly as possible. This is exactly the scenario Michael was describing to Amodei.

It sounds reasonable enough: better threat detection means better missile defense. But here's the catch: in a nuclear attack scenario, the president would face an immediate decision about retaliation—a choice that could trigger global nuclear war and would have to be made in minutes.

The lives of millions could depend on the AI system getting it right. And nuclear history is littered with detection system failures that nearly sparked wars, averted only by human intuition overriding the machines.

When Silicon Valley Meets the War Room

Retired Lt. Gen. Jack Shanahan, who flew nuclear missions and later headed the Pentagon's Joint Artificial Intelligence Center, puts it bluntly: "I don't want to say it's certain that there's going to be a catastrophe, but I think you're heading down that path."

His concerns got fresh validation this week from a King's College London study showing that AI models, including Claude, ChatGPT, and Google Gemini, were far more likely than humans to recommend nuclear options in war game simulations. Even if AI doesn't control the weapons, a multibillion-dollar system recommending a nuclear strike could be hard for any leader to ignore under extreme pressure.

The clash reflects a fundamental culture gap. Earlier military technologies often grew out of government work; the internet, for example, evolved from Defense Department projects. Cutting-edge AI, by contrast, emerged from private companies focused on commercial markets. As Shanahan puts it: "It's Mars-Venus to an extent."

"Boeing would never object to building anything the government would ask them to build," he notes. "It's a defense-industrial base company. [AI is] being born in a very different world with a group of people who don't see things the way employees of Lockheed may have seen the Cold War."

The Stakes Keep Rising

How this standoff resolves could determine AI's role in humanity's most consequential decisions. Will other AI companies be more willing to let their models be deployed with fewer questions asked? Will the Pentagon find ways around safety-conscious firms? Or will Silicon Valley's ethical guardrails prove strong enough to reshape military AI development?

The technology for AI-powered nuclear threat detection doesn't exist yet—which may be one reason Amodei was reluctant to commit to Michael's scenario. But it's coming, and when it arrives, the questions raised in that December conversation will matter more than ever.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

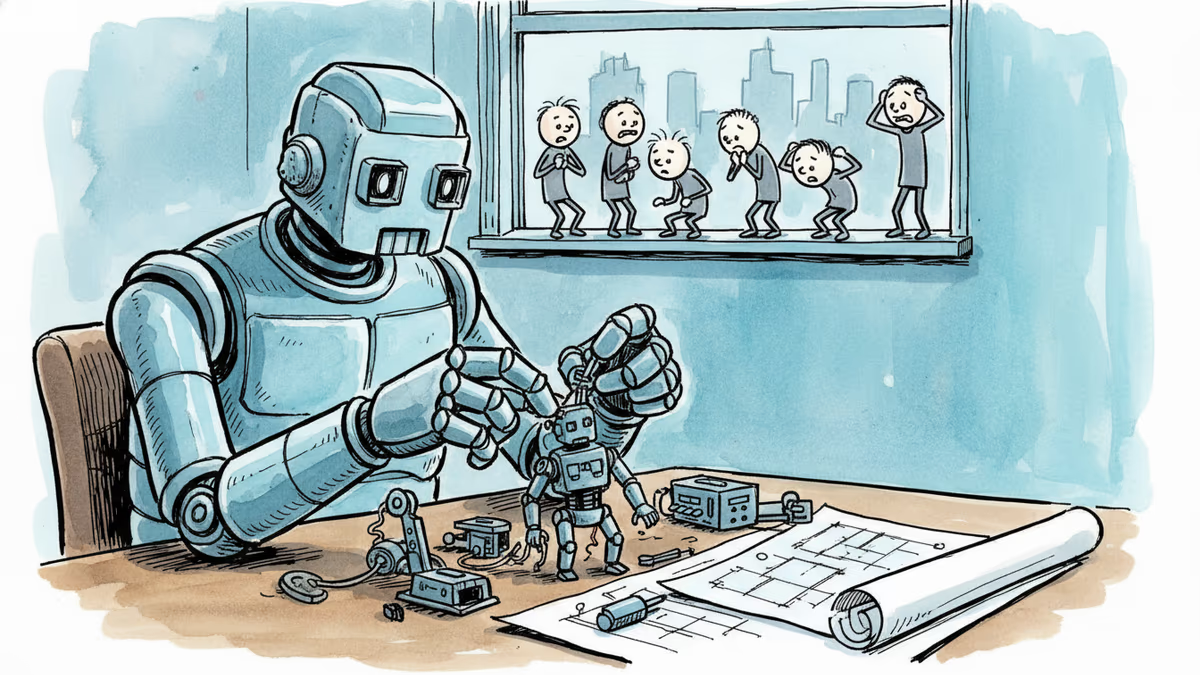

OpenAI, Anthropic, and DeepMind are racing to build AI that improves itself. What happens when the pace of AI progress is set by AI—not humans?

A satirical short story imagines AI-powered classrooms under a Melania Trump education initiative—and asks what we lose when we optimize learning for efficiency.

The AI consciousness debate is settled. But the question that actually matters — whether human-AI arrangements grow or erode human judgment — remains almost entirely unasked.

Grammarly's 'Expert Review' feature used famous writers' names without consent to power AI editing advice. The backlash reveals a deeper anxiety: what happens when your voice becomes someone else's product?

Thoughts

Share your thoughts on this article

Sign in to join the conversation