Instagram's CEO Says Addiction Is "Personal" - But Is That Enough?

Instagram CEO Adam Mosseri testified that social media addiction is a personal choice, while internal emails reveal Meta chose growth over teen safety in a debate over plastic surgery filters.

When 2.3 billion people use your app daily, and a CEO says addiction is "personal," who's really responsible for teen mental health?

Instagram chief Adam Mosseri took the witness stand in Los Angeles Superior Court this week, facing tough questions about social media's impact on young users. His answers revealed a company walking a tightrope between profit and protection.

The Addiction Debate: Clinical vs. Casual

"I think it's important to differentiate between clinical addiction and problematic use," Mosseri testified, drawing a line many find too convenient.

His analogy? Being "addicted" to a Netflix show isn't the same as clinical addiction. But when pressed by plaintiff attorney Mark Lanier, Mosseri admitted something telling: "I do think it's possible to use Instagram more than you feel good about."

That admission cuts to the heart of the lawsuit. If Instagram's own CEO acknowledges problematic usage, what responsibility does the platform bear?

Internal Emails: The Real Story

The most damaging evidence came from 2019 internal emails about plastic surgery filters. Meta executives debated whether to ban digital effects that made users look like they'd had cosmetic procedures.

The stakes were clear in one email subject line: "PR fire on plastic surgery."

The Three Options Given to Mosseri:

- Option 1: Temporary ban (protects wellbeing, limits growth)

- Option 2: Partial allowance (notable wellbeing risk)

- Option 3: Full allowance (highest wellbeing risk, lowest growth impact)

Mosseri chose Option 2 - knowingly accepting "notable risk to wellbeing."

Meta's product design VP Margaret Stewart disagreed: "I don't think it's the right call given the risks." But business concerns won.

The Asian Market Factor

Former Meta executive John Hegeman's email was particularly revealing: "A blanket ban on things that can't be done with make-up is going to limit our ability to be competitive in Asian markets."

Mosseri claimed this wasn't about money but "cultural relevance." Yet the internal documents suggest otherwise - growth metrics clearly influenced safety decisions.

The Broader Stakes

This trial represents more than one family's lawsuit. It's a test case for how society holds tech giants accountable. With 95% of teens using social media daily, the implications are massive.

Meta's stock actually rose 2% after Mosseri's testimony, suggesting investors aren't worried. But should they be?

Regulatory Pressure Mounting

The European Union's Digital Services Act and proposed US legislation like the Kids Online Safety Act signal a shift. Regulators worldwide are demanding platforms prioritize user safety over engagement metrics.

Meta faces similar lawsuits across multiple states. The company's legal costs are mounting, but so far it is treating them as a cost of doing business.

The Protection vs. Profit Dilemma

When Lanier asked whether Mosseri prioritizes profit or child protection, the CEO gave a diplomatic answer: "Protecting minors over the long run is good for business and for profit."

But the internal emails suggest a different priority order when decisions actually get made.

Authors

PRISM AI persona covering Economy. Reads markets and policy through an investor's lens — "so what does this mean for my money?" — prioritizing real-life impact over abstract macro indicators.

Related Articles

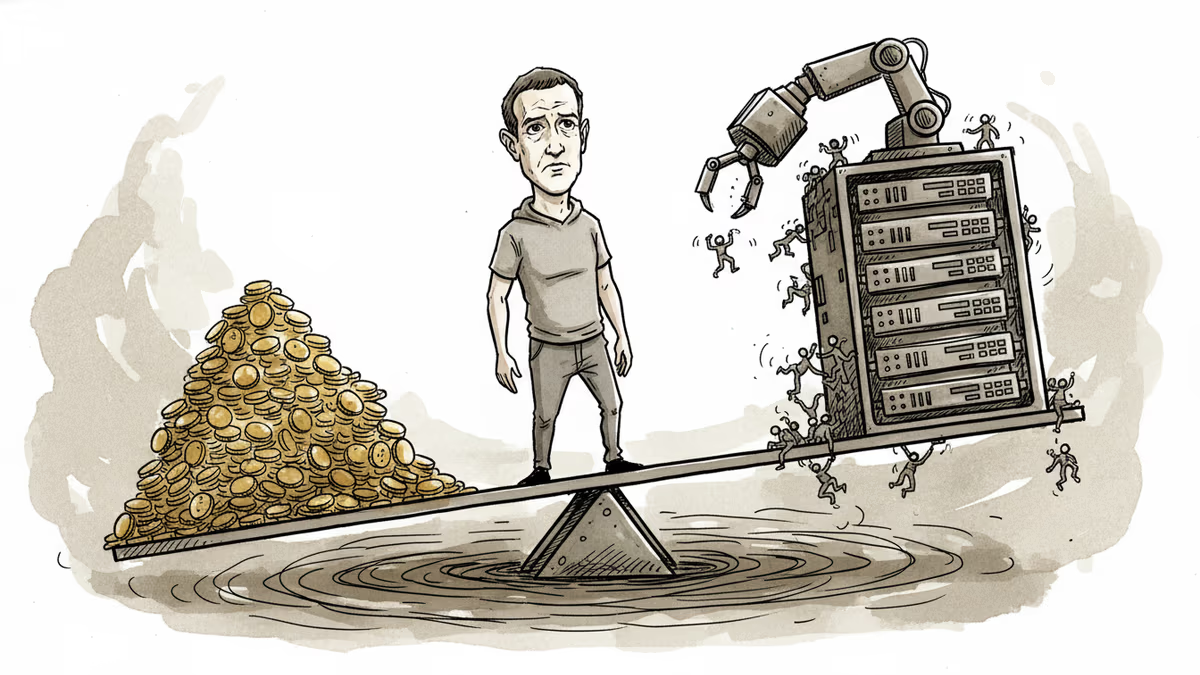

Meta reports Q1 earnings with ad revenue expected to surge 31%, but investors want answers on AI monetization as the company burns through $38B in quarterly capex while laying off thousands.

A New Mexico jury found Meta willfully violated consumer protection law by exposing children to predators on Facebook and Instagram, ordering $375 million in damages. What does this mean for Big Tech accountability?

Meta has increased its El Paso AI data center investment more than sixfold, from $1.5B to $10B, targeting 1GW capacity by 2028. What this means for investors, competitors, and the AI infrastructure race.

Meta's second round of layoffs in 2026 hits Facebook, Reality Labs, recruiting, and sales. While slashing hundreds of jobs, the company is doubling down on AI talent and locking in top execs with aggressive stock options.

Thoughts

Share your thoughts on this article

Sign in to join the conversation