When AI Decides Who Dies: The New Kill Chain

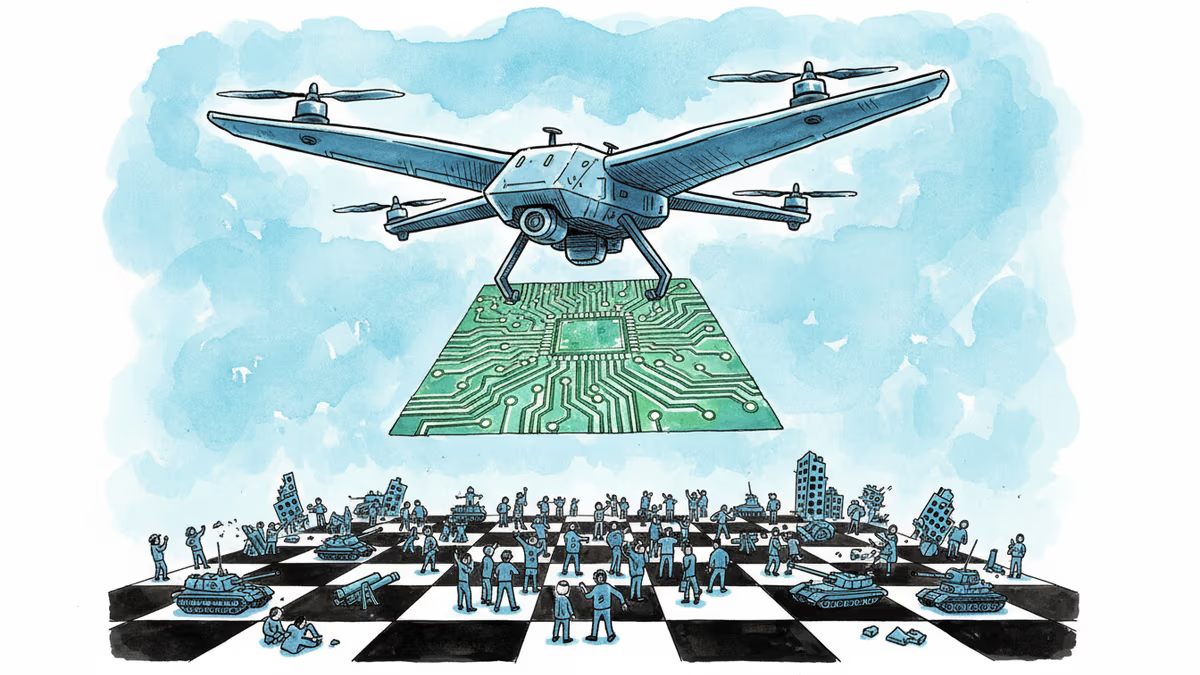

The US military is integrating AI into its targeting systems, compressing the "kill chain" from hours to seconds. What happens when machines help decide who lives and who dies?

A targeting decision that once took a team of analysts 72 hours to verify can now be processed in under 20 seconds. That's not a hypothetical from a Pentagon think tank. That's the operational reality the US military has been quietly building toward—and is now, by most accounts, already deploying.

Welcome to the AI-driven kill chain.

What the Kill Chain Actually Means

In military doctrine, the "kill chain" is the sequence of steps required to identify, track, target, and engage an enemy. Traditionally, it's a deeply human process: intelligence officers pore over satellite imagery, analysts cross-reference signals data, lawyers review compliance with rules of engagement, and commanders authorize strikes. Each step is a checkpoint. Each checkpoint takes time.

AI is collapsing that timeline. Machine learning systems can now ingest vast streams of sensor data—radar feeds, drone footage, communications intercepts—and surface actionable targeting recommendations faster than any human analyst. The US Department of Defense has poured billions into programs like Project Maven, which uses computer vision to analyze drone footage, and JADC2 (Joint All-Domain Command and Control), a sweeping initiative to link every sensor and shooter across all military branches into a single AI-assisted network.

The logic is straightforward: in modern warfare, speed is survival. If an adversary's missile battery is mobile, you have minutes—not hours—to act. China and Russia are investing in similar systems. Falling behind, the argument goes, isn't just a tactical disadvantage. It's existential.

Why This Moment Matters

The acceleration didn't happen in a vacuum. The wars in Ukraine and Gaza have served as live laboratories for AI-assisted targeting, each conflict stress-testing what these systems can and cannot do. Israel's reported use of an AI system called "Lavender" to generate target lists in Gaza drew sharp scrutiny from human rights organizations, raising questions about how much human judgment actually remained in the loop. The US has watched closely.

Meanwhile, the 2024 National Defense Authorization Act pushed the Pentagon to accelerate AI integration across warfighting domains. Defense contractors—Palantir, Anduril, Shield AI, and a growing constellation of startups—are competing for contracts worth tens of billions of dollars. Palantir's AI Platform (AIP) has already been demonstrated in live military exercises, reportedly reducing mission planning cycles from days to hours.

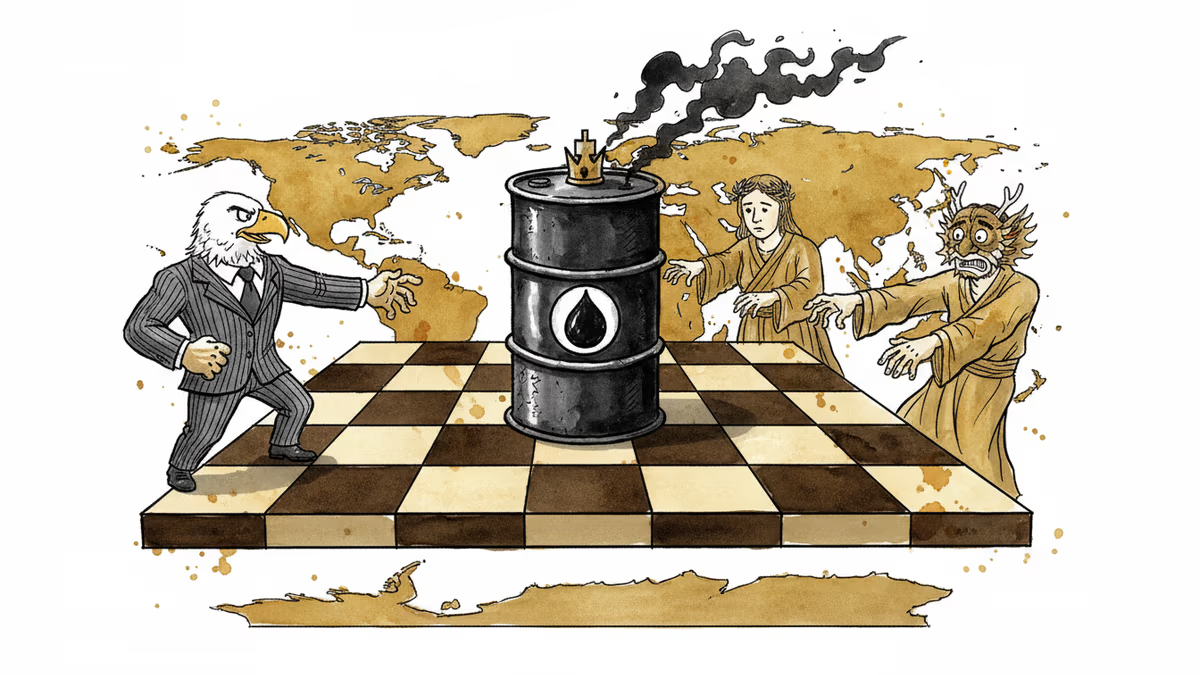

The timing also intersects with a broader shift in US strategic posture. With defense budgets under pressure and a stated need to project force across the vast distances of the Indo-Pacific—a potential confrontation with China over Taiwan being the scenario every planner has in mind—AI isn't a luxury. It's being positioned as a force multiplier that lets a smaller, faster force outmaneuver a numerically superior one.

The Uncomfortable Arithmetic

Here's where the story gets harder to tell cleanly.

Proponents argue that AI targeting systems, when properly designed, can actually reduce civilian casualties. Humans under stress make errors. They misidentify targets. They act on incomplete information colored by fear and fatigue. An AI system, in theory, can hold more variables simultaneously, flag potential civilian presence, and apply rules of engagement more consistently than a soldier in the fog of war.

But critics—including a growing number of AI researchers, ethicists, and former military officers—push back hard. The core problem is accountability. When an AI system recommends a strike and a human approves it in under 30 seconds, how meaningful is that human judgment? Is it oversight, or is it a rubber stamp that launders machine decisions with the appearance of human responsibility?

There's also the question of training data. AI systems learn from historical patterns. But warfare is not static. An algorithm trained on one adversary's behavior, in one terrain, in one conflict, may perform unpredictably when conditions shift. Garbage in, catastrophic out—and in this domain, the errors aren't misclassified spam emails.

Paul Scharre, a former Army Ranger and now one of the most cited voices on autonomous weapons, has drawn a distinction between AI-assisted targeting (where humans remain genuinely in the loop) and AI-automated targeting (where speed renders human oversight nominal). The line between the two, he argues, is blurring faster than policy can track.

Who's Watching the Watchers?

The stakeholder map here is unusually complex.

The Pentagon wants speed and precision, and is under congressional pressure to deploy AI capabilities before adversaries do. Its internal ethics guidelines—the DoD AI Principles adopted in 2020—require that AI systems be "traceable" and that humans retain "appropriate levels of judgment." What "appropriate" means in a 10-second targeting window remains conveniently undefined.

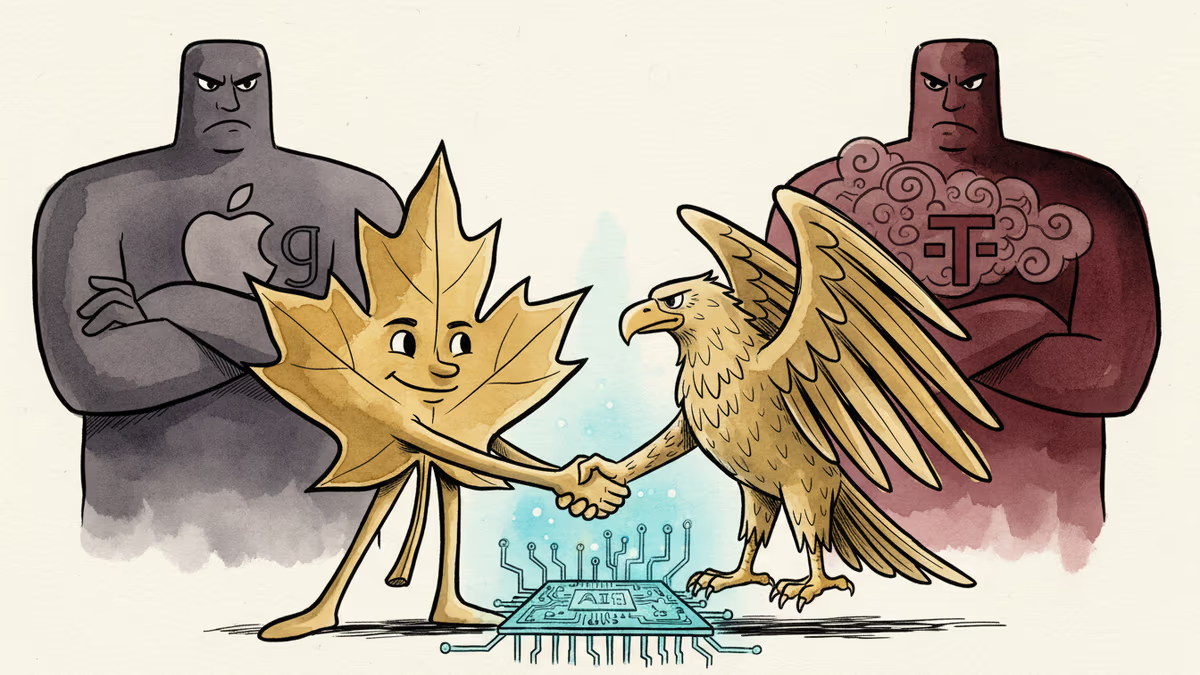

Defense contractors have obvious commercial incentives to sell systems and expand their footprint. But tech companies have also learned that their civilian workforces hold strong opinions about which applications they will build: Google, most visibly, announced in 2018 that it would not renew its Project Maven contract after employee protests. The internal tension inside tech companies between government contracts and ethical commitments is far from resolved.

Allies and partners face a different calculus. NATO members operating alongside US forces will increasingly interact with AI-assisted command systems they didn't build and can't fully audit. Who bears legal and moral responsibility when a joint operation produces a targeting error?

International law scholars note that the existing framework, International Humanitarian Law (including the Geneva Conventions and their Additional Protocols), was written for human decision-makers. It requires distinction (between combatants and civilians), proportionality, and precaution in attack. Whether an AI system can genuinely satisfy those requirements, or merely simulate doing so, is a question that no international body has authoritatively answered.

Adversaries are watching and adapting. If Chinese or Russian planners observe that US AI systems are trained to flag certain signatures as hostile, they will engineer around those signatures. The cat-and-mouse dynamic of electronic warfare is now being played at the level of machine learning models.

The Deeper Shift Nobody Wants to Name

Strip away the technical language and the policy euphemisms, and you arrive at a genuinely uncomfortable place: humanity is in the early stages of delegating lethal decisions to systems that optimize for outcomes we specify, but whose reasoning we cannot fully explain.

This isn't science fiction. It's procurement policy.

The question isn't whether AI will be part of future warfare—that decision has effectively been made, by the US, by China, by Russia, by Israel, and by a dozen other states. The question is what constraints will govern it, who will enforce those constraints, and whether the international community can agree on any of this before a catastrophic failure forces the conversation.

History offers a cautionary parallel. When aerial bombing was introduced in the early 20th century, military planners promised precision. The reality, across two world wars and every conflict since, was far messier. Technology rarely performs in war as it performs in demonstrations.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.
