The Real Bottleneck in the AI Race? Your Power Grid

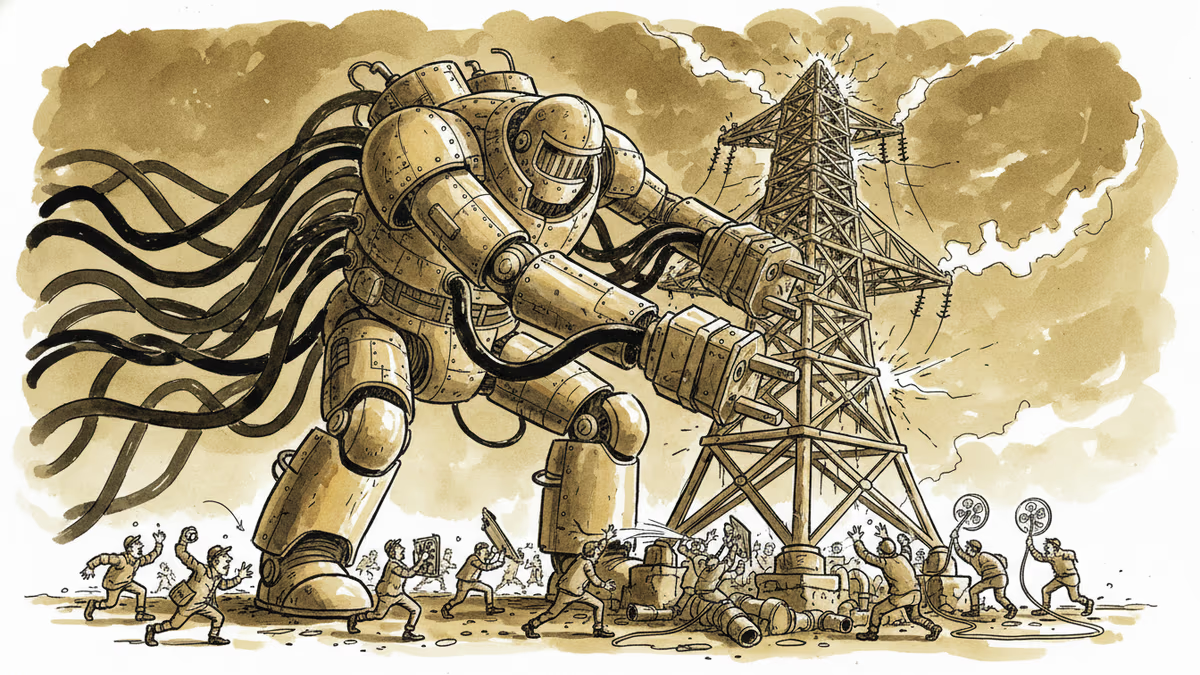

Agentic AI doesn't just think harder—it runs longer, loops more, and consumes vastly more power. Here's how that's forcing a fundamental redesign of data centers and energy infrastructure.

The smartest AI model in the world is useless if the power goes out.

That sounds obvious—until you realize that for a growing number of data center operators in Virginia, Texas, and Northern Europe, getting a new grid connection now takes five to seven years. They can build the servers. They just can't get the electricity.

This is the constraint that agentic AI is about to make dramatically worse.

From One-Shot to Always-On

For most of AI's recent history, the compute model was relatively simple: a user sends a query, and the model runs inference once and returns an answer. One prompt, one pass, done.

Agentic AI breaks that model entirely. Instead of answering a question, an agent pursues a goal. It plans, executes, checks results, calls external tools, spawns sub-agents, loops back, and iterates—potentially hundreds of times—before a task is complete. A single agentic workflow can require 10x to 100x the compute of a standard inference call, according to estimates from several infrastructure firms.
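To make the 10x-to-100x range concrete, here is a toy Python sketch that counts model calls for a one-shot query versus a plan, act, check loop. The iteration counts, the sub-agent fan-out, and the function names are hypothetical illustration values, not measurements, and every call is treated as equal cost.

```python
def one_shot_calls() -> int:
    """Classic chatbot pattern: one prompt, one inference pass."""
    return 1


def agent_calls(iterations: int, subagents_per_step: int) -> int:
    """Count model calls for a hypothetical plan -> (act, sub-agents, check) loop.

    Every call is treated as equal cost, which is a simplification.
    """
    calls = 1  # initial planning pass
    for _ in range(iterations):
        calls += 1                   # act: choose the next action or tool call
        calls += subagents_per_step  # each spawned sub-agent makes its own pass
        calls += 1                   # check: evaluate the result, decide whether to loop
    return calls


if __name__ == "__main__":
    baseline = one_shot_calls()
    # Hypothetical task shapes, from a short loop to a long one with sub-agents.
    for iterations, subagents in [(5, 0), (10, 3), (20, 3)]:
        calls = agent_calls(iterations, subagents)
        print(f"{iterations:>2} iterations, {subagents} sub-agents/step: "
              f"{calls} calls ({calls // baseline}x a one-shot query)")
```

With these made-up settings the multiplier runs from about 11x to about 101x. A raw call count can understate the gap, because later agent calls typically carry a longer accumulated context, and a pass over a longer context costs more compute.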

The implications for data centers aren't incremental. They're architectural.

Agents demand low latency at every step—a slow memory lookup can break a reasoning chain. They require persistent, large-scale memory access across long task horizons. And unlike a chatbot that handles discrete conversations, agentic systems run continuously, often in parallel clusters. The data center designed for GPT-3-era inference is not the data center you need for a fleet of autonomous agents managing enterprise workflows around the clock.

The Infrastructure Arms Race

The numbers already reflect the shift. Microsoft said it was on track to spend about $80 billion on AI-enabled data centers in its 2025 fiscal year alone. Amazon and Google have each signaled annual infrastructure spending in the $60–70 billion range. These aren't incremental upgrades; they're ground-up rebuilds.

A significant chunk of that spending is going to two problems that weren't urgent five years ago: cooling and power density.

Nvidia's Blackwell-generation GPUs carry a thermal design power (TDP) of roughly 1,000 watts or more per chip. Pack enough of those into a rack and traditional air cooling simply can't move the heat out fast enough. Data center operators are racing to retrofit facilities with liquid cooling loops and, in some cases, full immersion cooling systems, a transition that analysts estimate adds 30–50% to facility costs compared with conventional builds.
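Because nearly all the electricity a server draws leaves it as heat, rack power and cooling load are essentially the same number. The back-of-the-envelope Python sketch below multiplies this out for a hypothetical dense rack. The 72-GPU count, the 30% overhead for CPUs, networking and power conversion, and the 30 kW air-cooling ceiling are illustrative assumptions, not vendor or industry specifications; only the roughly 1,000 W per-chip figure comes from the paragraph above.

```python
# Illustrative assumptions only; none of these are vendor specifications.
GPUS_PER_RACK = 72             # hypothetical dense rack
WATTS_PER_GPU = 1_000.0        # roughly the per-chip TDP cited in the article
OVERHEAD_FRACTION = 0.30       # hypothetical: CPUs, networking, power conversion
AIR_COOLING_LIMIT_KW = 30.0    # hypothetical practical ceiling for air cooling


def rack_heat_kw(gpus: int, watts_per_gpu: float, overhead_fraction: float) -> float:
    """Rack heat load in kW; nearly all electrical input ends up as heat."""
    gpu_watts = gpus * watts_per_gpu
    return gpu_watts * (1 + overhead_fraction) / 1000


if __name__ == "__main__":
    heat = rack_heat_kw(GPUS_PER_RACK, WATTS_PER_GPU, OVERHEAD_FRACTION)
    ratio = heat / AIR_COOLING_LIMIT_KW
    print(f"estimated rack heat: {heat:.1f} kW")
    print(f"versus assumed air-cooling ceiling: {ratio:.1f}x")
```

Under these assumptions the rack lands at roughly three times the assumed air-cooling ceiling. Swapping in real figures for a specific facility changes the numbers, but the structure of the calculation (per-chip watts times chip count, plus overhead) stays the same.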

The power side is starker. A Lawrence Berkeley National Laboratory report commissioned by the U.S. Department of Energy estimated that data centers consumed about 4.4% of total U.S. electricity in 2023 and could consume between 6.7% and 12% by 2028. Goldman Sachs projected a 160% increase in data center power demand by 2030, with AI cited as the primary driver.
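A "160% increase" is easy to misread as demand reaching 1.6 times today's level. It actually means adding 1.6 times the baseline on top of the baseline, so demand ends at 2.6 times where it started. The short Python sketch below makes that explicit and backs out the implied constant annual growth rate; the seven-year window (roughly 2023 to 2030) is an assumption for illustration.

```python
def multiplier_from_percent_increase(percent: float) -> float:
    """A P% increase multiplies the starting value by (1 + P/100)."""
    return 1 + percent / 100


def implied_cagr(total_multiplier: float, years: int) -> float:
    """Constant annual growth rate that compounds to total_multiplier over `years`."""
    return total_multiplier ** (1 / years) - 1


if __name__ == "__main__":
    m = multiplier_from_percent_increase(160)
    rate = implied_cagr(m, years=7)  # assumed ~2023-2030 window
    print(f"end-of-period demand: {m:.1f}x the baseline")
    print(f"implied annual growth: {rate:.1%} per year")
```

On that assumed window, a 160% rise works out to roughly 14 to 15% growth every year, compounded.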

When demand grows that fast, the grid becomes the binding constraint—not the chips, not the software.

Big Tech Goes Nuclear

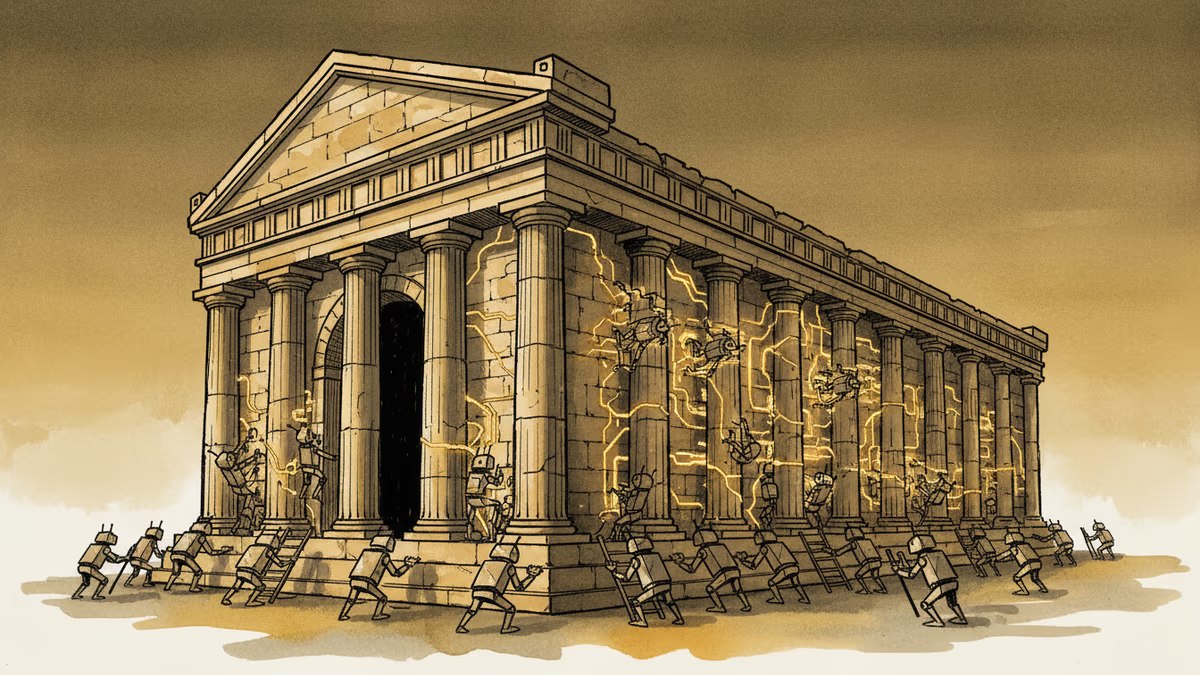

Faced with grid connection queues measured in years, the hyperscalers have started going around the utility system entirely.

Microsoft signed a 20-year power purchase agreement with Constellation Energy, which plans to restart a reactor at the Three Mile Island plant in Pennsylvania to supply power for Microsoft's data centers. The site is where a different reactor, Unit 2, partially melted down in 1979; the unit coming back online is Unit 1. Google contracted with Kairos Power for a series of small modular reactors (SMRs). Amazon has invested in SMR developer X-energy, among other nuclear deals.

This is a structural shift, not a PR move. When the world's largest technology companies start acquiring or contracting long-term nuclear capacity, the energy sector's investment calculus changes. Utilities, grid operators, and governments are all recalibrating.

For investors, the signal is already visible in the market. Power management and cooling infrastructure companies such as Vertiv, Eaton, and Schneider Electric have seen their valuations climb sharply over the past two years. These are not AI companies. They make transformers, cooling units, and power distribution equipment. But in the agentic AI era, they are AI infrastructure.

Winners, Losers, and Your Cloud Bill

The competitive dynamics of this transition are fairly clear, even if the timeline isn't.

The winners are those with early, locked-in advantages: hyperscalers with existing land and grid access, high-bandwidth memory (HBM) manufacturers (demand for HBM rises with memory-hungry agentic workloads), power infrastructure suppliers, and anyone who secured cheap, reliable energy capacity before the current crunch.

The pressure points are mid-tier cloud providers and enterprises running their own compute. They face the same cooling retrofit costs, the same GPU procurement queues, and the same power constraints—without the balance sheets to absorb them. The structural gap between hyperscalers and everyone else widens in an agentic world.

For enterprise customers—and eventually consumers—the downstream effect is pricing pressure on cloud services. If the cost of running a compute unit rises, those costs migrate through the stack. Companies that have baked current cloud pricing into their operating models may find those assumptions need revisiting within 18–24 months.

There is a counterargument worth taking seriously. AI's energy consumption is only half the equation. IEA researchers have noted that AI-driven optimization in industrial processes, logistics, and energy grid management could generate efficiency gains that offset—or exceed—the power consumed by AI infrastructure itself. The net energy impact of the agentic AI era remains genuinely uncertain. Anyone claiming a definitive answer in either direction is ahead of the data.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Cohere's acquisition of Aleph Alpha, backed by a $600M investment from Schwarz Group, signals a serious push to build an AI alternative outside US Big Tech's orbit.

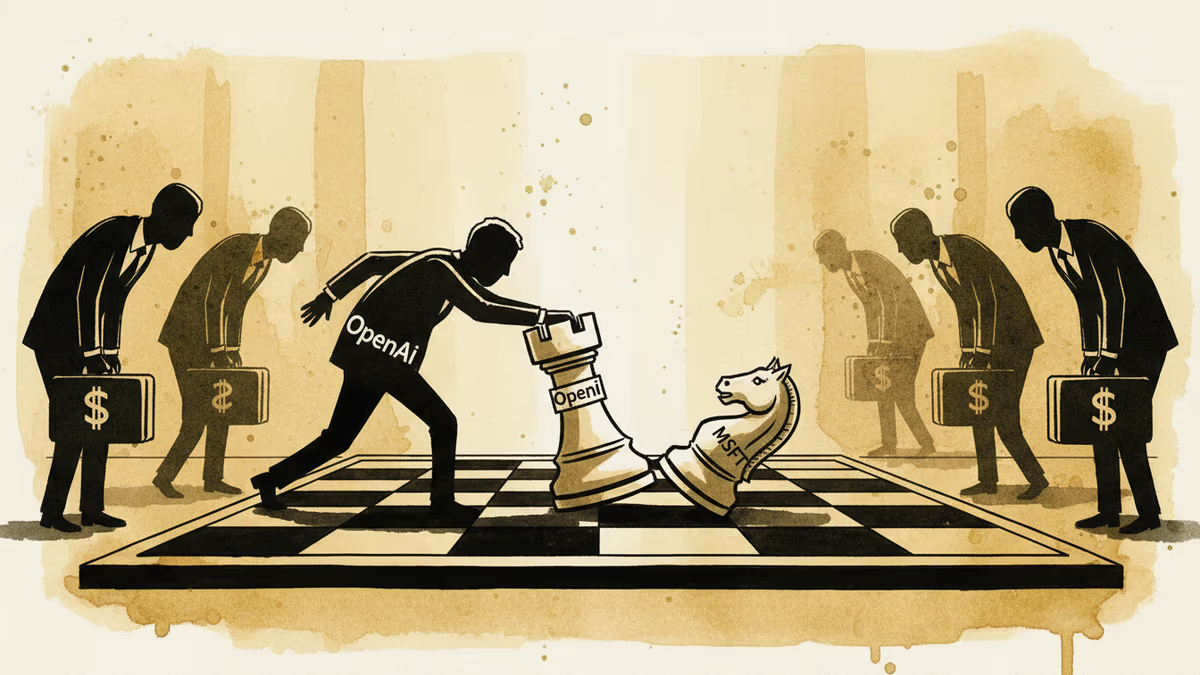

OpenAI is courting private equity firms to co-found an enterprise AI venture. It's not just a funding round — it signals a potential break from Microsoft and a direct assault on the corporate AI market.

Meta just locked in a $27 billion deal with Dutch cloud firm Nebius. With hyperscalers committing $700 billion to AI infrastructure this year, the real question is who captures the value—and who gets left behind.

Oracle beat revenue expectations with 18% growth and 81% cloud infrastructure surge, directly challenging fears that generative AI would destroy traditional software vendors.

Thoughts

Share your thoughts on this article

Sign in to join the conversation