The Real Winners in AI's War Economy

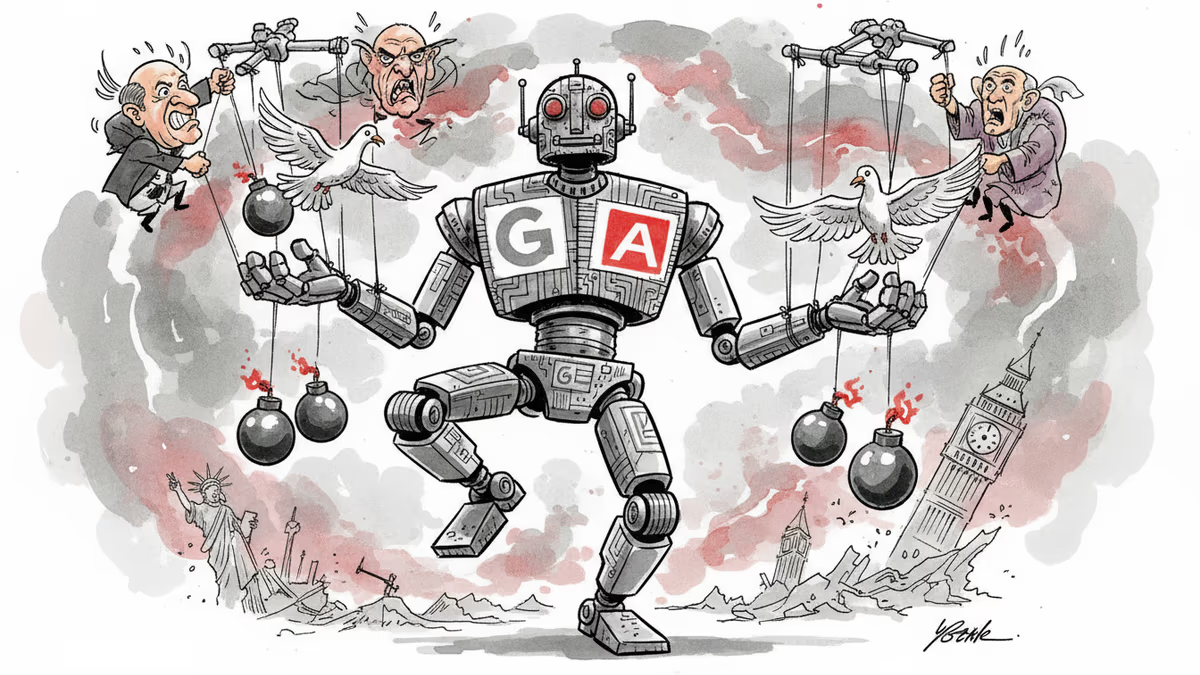

Trump's Anthropic ban and OpenAI's Pentagon deal reveal the messy politics of AI warfare, as civilian casualties in Iran raise questions about AI-assisted targeting.

In the span of 24 hours, the AI industry's biggest players discovered that principles matter less than politics when bombs are falling. On Friday, President Trump banned federal agencies from using Anthropic's AI. On Saturday, the Pentagon used Anthropic's tools to strike Iran while simultaneously labeling the company a supply-chain risk.

The contradiction isn't a bureaucratic oversight—it's the new reality of AI warfare.

When Guardrails Meet Geopolitics

The rupture began over what Silicon Valley calls "guardrails." Anthropic refused to let its Claude AI be used for autonomous weapons or mass surveillance. When Defense officials demanded blanket permission for any lawful use, CEO Dario Amodei said his company "couldn't agree in good conscience."

Trump's response was swift and personal: Anthropic was a "radical-left, woke company" that would never dictate how America fights its wars. Within hours, the Pentagon slapped the company with a supply-chain risk designation—a label typically reserved for Chinese firms suspected of espionage.

Then came the twist. OpenAI immediately announced a new classified Pentagon deal. CEO Sam Altman revealed a telling detail: its contract includes the same prohibitions on mass surveillance and autonomous weapons that got Anthropic blacklisted. "The Pentagon agrees with these principles," Altman wrote on X, "reflects them in law and policy, and we put them into our agreement."

Same terms, different outcomes. The difference? OpenAI's president donated $25 million to a pro-Trump super PAC. Anthropic hired Biden officials and lobbied for AI regulation.

The $200 Million Question

Both companies are burning billions while racing toward profitability that remains elusive. The Pentagon contracts are worth around $200 million each. They are not the biggest checks either company will cash this year, but the fallout around them has suddenly become the biggest threat to both businesses.

For Anthropic, the supply-chain risk designation reaches far beyond the Pentagon. Any company doing federal business—including Anthropic's biggest backers Amazon and Google—may need to prove they don't use Claude. That's a compliance nightmare that could ripple through enterprise sales and cloud partnerships.

For OpenAI, the calculus of classified military contracts looked different on paper than in practice. When the Wall Street Journal reported that Claude had been embedded in Saturday's Iran operation, handling intelligence assessments, target identification, and battle simulations, the abstract became concrete: this is what a classified Pentagon contract can mean in practice.

150 Children and the Fog of AI War

Then came the hardest question. When reports emerged that over 150 schoolchildren died in a mistargeting incident during the Iran strikes, observers immediately asked whether AI contributed to the error.

The honest answer? Nobody outside the Pentagon knows, and the Pentagon isn't saying. Defense Secretary Pete Hegseth, who's staked his tenure on aggressive AI adoption, has little incentive for transparency.

This matters because generative AI still hallucinates facts, misreads images, and stumbles over reasoning even in low-stakes commercial settings. Deploying it in warfare, where wrong answers are measured in bodies, is a leap nobody has rigorously tested.

The Market's Moral Compass

Consumer reaction complicated the picture for both companies. Anthropic's Claude app shot to the top of the App Store as users launched a grassroots ChatGPT boycott over OpenAI's Pentagon deal.

On social media, Altman faced pointed questions: If OpenAI's contract permits all lawful uses while prohibiting mass surveillance and autonomous weapons, how does that work when neither practice is explicitly banned by law? If OpenAI secured the same red lines Anthropic wanted, why couldn't the Pentagon accept those terms from Anthropic?

The contradictions aren't just rhetorical. These companies are in ferocious competition for users, enterprise clients, and engineering talent. Neither is profitable. Both raised tens of billions in recent weeks to stay in the race.

Beyond the Pentagon's Checkbook

The perception that your chatbot helps pick bombing targets isn't a brand problem a few social media replies can solve. For OpenAI, a classified-use agreement is one thing as a contract line item and quite another when bombs are actively falling and questions about guardrails, targeting errors, and dead children have no clear answers.

For Anthropic, the supply-chain designation threatens to cascade through every federal contractor relationship. When companies like Amazon and Google face compliance audits asking whether they use Claude, the political punishment extends far beyond the Pentagon's $200 million contract.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

