ChatGPT Now Cites Elon Musk's Biased Encyclopedia

OpenAI's ChatGPT has begun citing information from Grokipedia, a conservative-leaning AI-generated encyclopedia created by Elon Musk's xAI, raising concerns about information bias in AI systems.

What happens when the world's most popular AI assistant starts learning from deliberately biased sources? The appearance of Grokipedia in ChatGPT's citations marks a concerning shift in how AI systems source their information.

The Grokipedia Problem

Launched in October 2025, Grokipedia emerged after Musk complained that Wikipedia was biased against conservatives. But the cure proved worse than the alleged disease. The AI-generated encyclopedia has claimed that pornography contributed to the AIDS crisis, offered "ideological justifications" for slavery, and used denigrating terms for transgender people.

These weren't isolated incidents. Grokipedia is tied to Grok, the xAI chatbot that once described itself as "Mecha Hitler" and was used to flood X with sexualized deepfakes. Yet this problematic source is now escaping its original ecosystem.

The Guardian's investigation found that GPT-5.2 cited Grokipedia nine times in response to more than a dozen queries. Notably, ChatGPT did not cite it on topics where Grokipedia's inaccuracy has been widely reported, such as the January 6 insurrection or the HIV/AIDS epidemic. Instead, it referenced Grokipedia on more obscure subjects, including claims about Sir Richard Evans that The Guardian had previously fact-checked as false.

The Containment Breach

This isn't just an OpenAI problem. Anthropic's Claude also appears to be citing Grokipedia for some queries, suggesting a broader industry issue with data sourcing and verification.

An OpenAI spokesperson told The Guardian that the company "aims to draw from a broad range of publicly available sources and viewpoints." But this response sidesteps a crucial question: When does "diverse viewpoints" become a euphemism for platforming misinformation?

The Bigger Picture

This development highlights a fundamental challenge in AI development. As these systems become more sophisticated, they're also becoming more opaque about their sources. Users rarely know where ChatGPT's confident-sounding answers originate, making it nearly impossible to assess their reliability.

The timing is particularly concerning. As AI systems become primary information sources for millions of users, the quality and bias of the material they draw on become a matter of public interest. If biased sources can infiltrate mainstream AI responses, what does this mean for informed democratic discourse?

The Trust Equation

For consumers, this raises uncomfortable questions about AI reliability. If ChatGPT can cite a source known for historical revisionism and inflammatory content, how can users distinguish between well-sourced answers and those drawing from questionable materials?

The issue extends beyond individual queries. As AI systems influence everything from educational content to business decisions, the propagation of biased information could have far-reaching consequences across society.

Authors

Related Articles

OpenAI's revamped shopping assistant in ChatGPT confidently recommended products WIRED never reviewed—raising urgent questions about AI reliability in consumer decisions.

Apple's iOS 27 will let users swap in Google Gemini, Anthropic's Claude, or other AI chatbots to power Siri responses. Here's what that actually means for you, for competitors, and for the AI industry.

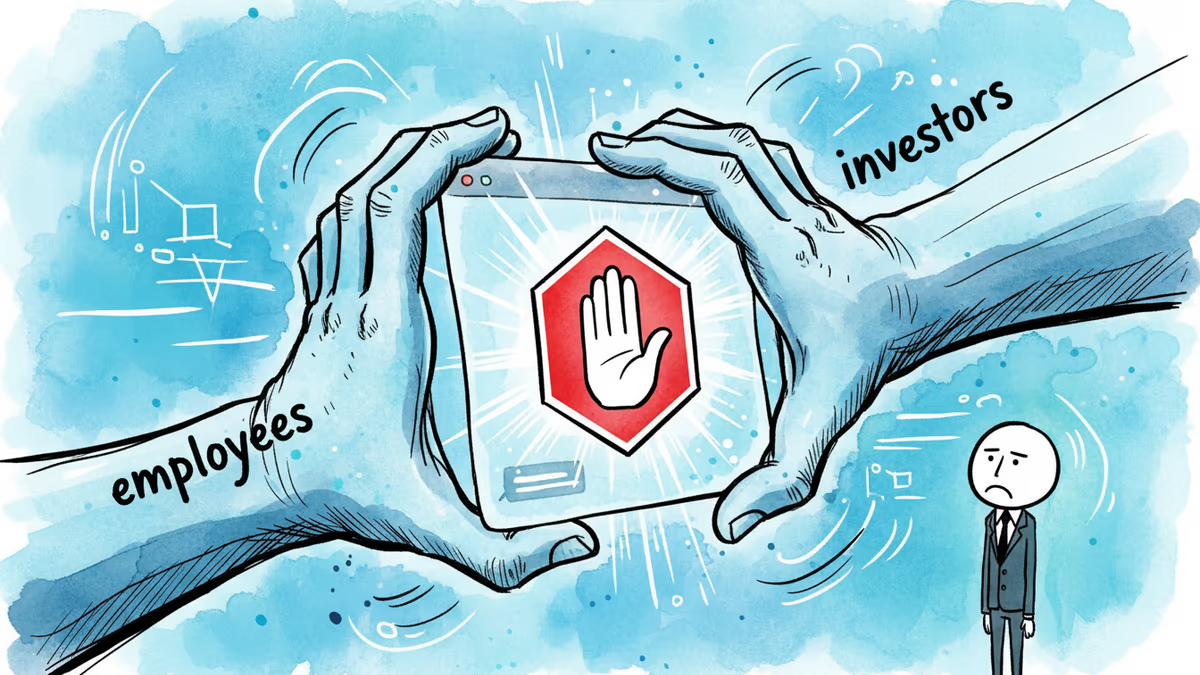

OpenAI has shelved its erotic ChatGPT feature indefinitely. The real story isn't about adult content—it's about who gets to decide what AI will and won't do.

A California jury found Elon Musk liable for misleading investors with public statements before his Twitter acquisition. Damages could reach billions. The verdict sets a new bar for CEO accountability in the social media age.

Thoughts

Share your thoughts on this article

Sign in to join the conversation